
How to Cite Sources

Here is a complete list of guides on how to cite sources. Most of these guides present citation guidance and examples in MLA, APA, and Chicago.

If you’re looking for general information on MLA or APA citations, the EasyBib Writing Center was designed for you! It has articles on what’s needed in an MLA in-text citation, how to format an APA paper, what an MLA annotated bibliography is, making an MLA works cited page, and much more!

MLA Format Citation Examples

The Modern Language Association created the MLA Style, currently in its 9th edition, to provide researchers with guidelines for writing and documenting scholarly borrowings. Most often used in the humanities, MLA style (or MLA format) has been adopted and used by numerous other disciplines, in multiple parts of the world.

MLA provides standard rules to follow so that most research papers are formatted in a similar manner. This makes it easier for readers to comprehend the information. The MLA in-text citation guidelines, MLA works cited standards, and MLA annotated bibliography instructions provide scholars with the information they need to properly cite sources in their research papers, articles, and assignments.

- Book Chapter

- Conference Paper

- Documentary

- Encyclopedia

- Google Images

- Kindle Book

- Memorial Inscription

- Museum Exhibit

- Painting or Artwork

- PowerPoint Presentation

- Sheet Music

- Thesis or Dissertation

- YouTube Video

APA Format Citation Examples

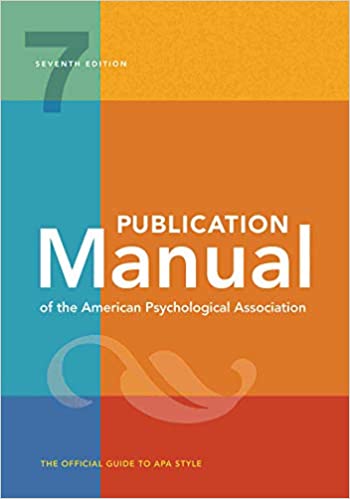

The American Psychological Association created the APA citation style in 1929 as a way to help psychologists, anthropologists, and even business managers establish one common way to cite sources and present content.

APA is used when citing sources in academic writing such as journal articles, and is intended to help readers better comprehend content and to avoid language bias wherever possible. The APA style (or APA format) is now in its 7th edition, and provides citation style guides for virtually any type of resource.

Chicago Style Citation Examples

The Chicago/Turabian style of citing sources is generally used when citing sources for humanities papers, and is best known for its requirement that writers place bibliographic citations at the bottom of a page (in Chicago-format footnotes) or at the end of a paper (endnotes).

The Turabian and Chicago citation styles are almost identical, but the Turabian style is geared towards student papers such as theses and dissertations, while the Chicago style provides guidelines for all types of publications. This is why you’ll commonly see Chicago style and Turabian style presented together. The Chicago Manual of Style is currently in its 17th edition, and Turabian’s A Manual for Writers of Research Papers, Theses, and Dissertations is in its 9th edition.

Citing Specific Sources or Events

- Declaration of Independence

- Gettysburg Address

- Martin Luther King Jr. Speech

- President Obama’s Farewell Address

- President Trump’s Inauguration Speech

- White House Press Briefing

Additional FAQs

- Citing Archived Contributors

- Citing a Blog

- Citing a Book Chapter

- Citing a Source in a Foreign Language

- Citing an Image

- Citing a Song

- Citing Special Contributors

- Citing a Translated Article

- Citing a Tweet

6 Interesting Citation Facts

The world of citations may seem cut and dried, but there’s more to them than just specific capitalization rules, MLA in-text citations, and other formatting specifications. Citations have been helping researchers document their sources for hundreds of years, and are a great way to learn more about a particular subject area.

Ever wonder what sets all the different styles apart, or how they came to be in the first place? Read on for some interesting facts about citations!

1. There are Over 7,000 Different Citation Styles

You may be familiar with MLA and APA citation styles, but there are actually thousands of citation styles used across academic disciplines around the world. Deciding which one to use can be difficult, so be sure to ask your instructor which one you should be using for your next paper.

2. Some Citation Styles are Named After People

While a majority of citation styles are named for the specific organizations that publish them (e.g., APA is published by the American Psychological Association, and MLA format is named for the Modern Language Association), some are actually named after individuals. The most well-known example of this is perhaps Turabian style, named for Kate L. Turabian, an American educator and writer. She developed this style as a condensed version of the Chicago Manual of Style in order to present a more concise set of rules to students.

3. There are Some Really Specific and Uniquely Named Citation Styles

How specific can citation styles get? The answer is very. For example, the “Flavour and Fragrance Journal” style is based on a bimonthly, peer-reviewed scientific journal published since 1985 by John Wiley & Sons. It publishes original research articles, reviews and special reports on all aspects of flavor and fragrance. Another example is “Nordic Pulp and Paper Research,” a style used by an international scientific magazine covering science and technology for the areas of wood or bio-mass constituents.

4. More Citations Were Created on EasyBib.com in the First Quarter of 2018 Than There Are People in California

The US Census Bureau estimates that approximately 39.5 million people live in the state of California. Meanwhile, about 43 million citations were made on EasyBib from January to March of 2018. That’s a lot of citations.

5. “Citations” is a Word With a Long History

The word “citations” can be traced back literally thousands of years to the Latin word “citare” meaning “to summon, urge, call; put in sudden motion, call forward; rouse, excite.” The word then took on its more modern meaning and relevance to writing papers in the 1600s, where it became known as the “act of citing or quoting a passage from a book, etc.”

6. Citation Styles are Always Changing

The concept of citations always stays the same. It is a means of preventing plagiarism and demonstrating where you relied on outside sources. The specific style rules, however, can and do change regularly. For example, in 2018 alone, 46 new citation styles were introduced, and 106 updates were made to existing styles. At EasyBib, we are always on the lookout for ways to improve our styles and opportunities to add new ones to our list.

Why Citations Matter

Here are the ways accurate citations can help your students achieve academic success, and how you can answer the dreaded question, “why should I cite my sources?”

They Give Credit to the Right People

Citing their sources makes sure that the reader can differentiate the student’s original thoughts from those of other researchers. Not only does this make sure that the sources they use receive proper credit for their work, it ensures that the student receives deserved recognition for their unique contributions to the topic. Whether the student is citing in MLA format, APA format, or any other style, citations serve as a natural way to place a student’s work in the broader context of the subject area, and serve as an easy way to gauge their commitment to the project.

They Provide Hard Evidence of Ideas

Having many citations from a wide variety of sources related to their idea means that the student is working on a well-researched and respected subject. Citing sources that back up their claim creates room for fact-checking and further research. And, if they can cite a few sources that have the converse opinion or idea, and then demonstrate to the reader why they believe that that viewpoint is wrong by again citing credible sources, the student is well on their way to winning over the reader and cementing their point of view.

They Promote Originality and Prevent Plagiarism

The point of research projects is not to regurgitate information that can already be found elsewhere. We have Google for that! What the student’s project should aim to do is promote an original idea or a spin on an existing idea, and use reliable sources to promote that idea. Copying or directly referencing a source without proper citation can lead to not only a poor grade, but accusations of academic dishonesty. By citing their sources regularly and accurately, students can easily avoid the trap of plagiarism, and promote further research on their topic.

They Create Better Researchers

By researching sources to back up and promote their ideas, students are becoming better researchers without even knowing it! Each time a new source is read or researched, the student is becoming more engaged with the project and is developing a deeper understanding of the subject area. Proper citations demonstrate a breadth of the student’s reading and dedication to the project itself. By creating citations, students are compelled to make connections between their sources and discern research patterns. Each time they complete this process, they are helping themselves become better researchers and writers overall.

When is the Right Time to Start Making Citations?

1. Make In-Text/Parenthetical Citations as You Need Them

As you are writing your paper, be sure to include references within the text that correspond with references in a works cited or bibliography. These are usually called in-text citations or parenthetical citations in MLA and APA formats. The most effective time to complete these is directly after you have made your reference to another source. For instance, after writing the line from Charles Dickens’ A Tale of Two Cities: “It was the best of times, it was the worst of times…,” you would include a citation like this (depending on your chosen citation style):

(Dickens 11).

This signals to the reader that you have referenced an outside source. What’s great about this system is that the in-text citations serve as a natural list for all of the citations you have made in your paper, which will make completing the works cited page a whole lot easier. After you are done writing, all that will be left for you to do is scan your paper for these references, and then build a works cited page that includes a citation for each one.

Need help creating an MLA works cited page? Try the MLA format generator on EasyBib.com! We also have a guide on how to format an APA reference page.

2. Understand the General Formatting Rules of Your Citation Style Before You Start Writing

While reading up on paper formatting may not sound exciting, being aware of how your paper should look early on in the paper writing process is super important. Citation styles can dictate more than just the appearance of the citations themselves; they can also affect the layout of your paper as a whole, with specific guidelines concerning margin width, title treatment, and even font size and spacing. Knowing how to organize your paper before you start writing will ensure that you do not receive a low grade for something as trivial as forgetting a hanging indent.

Don’t know where to start? Here’s a formatting guide on APA format.

3. Double-check All of Your Outside Sources for Relevance and Trustworthiness First

Collecting outside sources that support your research and specific topic is a critical step in writing an effective paper. But before you run to the library and grab the first 20 books you can lay your hands on, keep in mind that selecting a source to include in your paper should not be taken lightly. Before you proceed with using a source to back up your ideas, run a quick Internet search for it and see if other scholars in your field have written about it as well. Check to see if there are book reviews about it or peer accolades. If you spot something that seems off to you, you may want to consider leaving it out of your work. Doing this before you start making citations can save you a ton of time in the long run.

Finished with your paper? It may be time to run it through a grammar and plagiarism checker, like the one offered by EasyBib Plus. If you’re just looking to brush up on the basics, our grammar guides are ready anytime you are.


Harvard In-Text Citation | A Complete Guide & Examples

Published on 30 April 2020 by Jack Caulfield. Revised on 5 May 2022.

An in-text citation should appear wherever you quote or paraphrase a source in your writing, pointing your reader to the full reference.

In Harvard style, citations appear in brackets in the text. An in-text citation consists of the last name of the author, the year of publication, and a page number if relevant.

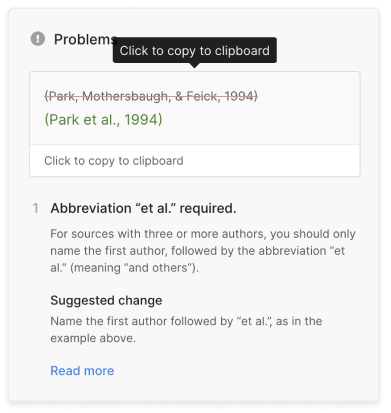

Up to three authors are included in Harvard in-text citations. If there are four or more authors, the citation is shortened with et al.

| Number of authors | In-text citation |
|---|---|
| 1 author | (Smith, 2014) |
| 2 authors | (Smith and Jones, 2014) |
| 3 authors | (Smith, Jones and Davies, 2014) |
| 4+ authors | (Smith et al., 2014) |
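The author-count rule in the table can be expressed as a short function. This is a minimal Python sketch rather than part of any official Harvard guidance; the function name `harvard_in_text` and its parameters are hypothetical and chosen only for illustration.

```python
def harvard_in_text(surnames, year):
    """Build a Harvard-style in-text citation from a list of author surnames.

    Hypothetical helper: one to three authors are listed in full,
    four or more are shortened to the first surname plus 'et al.'.
    """
    if not surnames:
        raise ValueError("at least one author surname is required")
    if len(surnames) >= 4:
        # Four or more authors: first surname followed by 'et al.'
        names = f"{surnames[0]} et al."
    elif len(surnames) == 1:
        names = surnames[0]
    else:
        # Two or three authors: commas between names, 'and' before the last
        names = ", ".join(surnames[:-1]) + " and " + surnames[-1]
    return f"({names}, {year})"


print(harvard_in_text(["Smith", "Jones", "Davies"], 2014))
```

Calling it with one, two, three, or four surnames reproduces the four rows of the table.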


Table of contents

- Including page numbers in citations

- Where to place Harvard in-text citations

- Citing sources with missing information

- Frequently asked questions about Harvard in-text citations

Including page numbers in citations

When you quote directly from a source or paraphrase a specific passage, your in-text citation must include a page number to specify where the relevant passage is located.

Use ‘p.’ for a single page and ‘pp.’ for a page range:

- Meanwhile, another commentator asserts that the economy is ‘on the downturn’ (Singh, 2015, p. 13).

- Wilson (2015, pp. 12–14) makes an argument for the efficacy of the technique.

If you are summarising the general argument of a source or paraphrasing ideas that recur throughout the text, no page number is needed.
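The ‘p.’ and ‘pp.’ convention described above can be sketched as a small helper. This is an illustrative Python example only; `page_locator` is a hypothetical name, and the en dash between page numbers follows the example shown in this guide.

```python
def page_locator(first, last=None):
    """Return 'p. N' for a single page or 'pp. N–M' for a page range.

    Hypothetical helper; pass only `first` when the passage is on one page.
    """
    if last is None or last == first:
        return f"p. {first}"
    if last < first:
        raise ValueError("last page must not be before the first page")
    # \u2013 is an en dash, as used in the range 'pp. 12–14'
    return f"pp. {first}\u2013{last}"


print(f"(Singh, 2015, {page_locator(13)})")
print(f"Wilson (2015, {page_locator(12, 14)})")
```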


Where to place Harvard in-text citations

When incorporating citations into your text, you can either name the author directly in the text or only include the author’s name in brackets.

Naming the author in the text

When you name the author in the sentence itself, the year and (if relevant) page number are typically given in brackets straight after the name:

Naming the author directly in your sentence is the best approach when you want to critique or comment on the source.

Naming the author in brackets

When you haven’t mentioned the author’s name in your sentence, include it inside the brackets. The citation is generally placed after the relevant quote or paraphrase, or at the end of the sentence, before the full stop:

Multiple citations can be included in one place, listed in order of publication year and separated by semicolons:

This type of citation is useful when you want to support a claim or summarise the overall findings of sources.

Common mistakes with in-text citations

In-text citations in brackets should not appear as the subject of your sentences. Anything that’s essential to the meaning of a sentence should be written outside the brackets:

- (Smith, 2019) argues that…

- Smith (2019) argues that…

Similarly, don’t repeat the author’s name in the bracketed citation and in the sentence itself:

- As Caulfield (Caulfield, 2020) writes…

- As Caulfield (2020) writes…

Citing sources with missing information

Sometimes you won’t have access to all the source information you need for an in-text citation. Here’s what to do if you’re missing the publication date, author’s name, or page numbers for a source.

No date

If a source doesn’t list a clear publication date, as is sometimes the case with online sources or historical documents, replace the date with the words ‘no date’:

No author

When it’s not clear who the author of a source is, you’ll sometimes be able to substitute a corporate author – the group or organisation responsible for the publication:

When there’s no corporate author to cite, you can use the title of the source in place of the author’s name:

No page numbers

If you quote from a source without page numbers, such as a website, you can just omit this information if it’s a short text – it should be easy enough to find the quote without it.

If you quote from a longer source without page numbers, it’s best to find an alternate location marker, such as a paragraph number or subheading, and include that:

Frequently asked questions about Harvard in-text citations

A Harvard in-text citation should appear in brackets every time you quote, paraphrase, or refer to information from a source.

The citation can appear immediately after the quotation or paraphrase, or at the end of the sentence. If you’re quoting, place the citation outside of the quotation marks but before any other punctuation like a comma or full stop.

In Harvard referencing, up to three author names are included in an in-text citation or reference list entry. When there are four or more authors, include only the first, followed by ‘et al.’

| Number of authors | In-text citation | Reference list |
|---|---|---|
| 1 author | (Smith, 2014) | Smith, T. (2014) … |
| 2 authors | (Smith and Jones, 2014) | Smith, T. and Jones, F. (2014) … |
| 3 authors | (Smith, Jones and Davies, 2014) | Smith, T., Jones, F. and Davies, S. (2014) … |
| 4+ authors | (Smith et al., 2014) | Smith, T. et al. (2014) … |

In Harvard style, when you quote directly from a source that includes page numbers, your in-text citation must include a page number. For example: (Smith, 2014, p. 33).

You can also include page numbers to point the reader towards a passage that you paraphrased. If you refer to the general ideas or findings of the source as a whole, you don’t need to include a page number.

When you want to use a quote but can’t access the original source, you can cite it indirectly. In the in-text citation, first mention the source you want to refer to, and then the source in which you found it. For example:

It’s advisable to avoid indirect citations wherever possible, because they suggest you don’t have full knowledge of the sources you’re citing. Only use an indirect citation if you can’t reasonably gain access to the original source.

In Harvard style referencing, to distinguish between two sources by the same author that were published in the same year, you add a different letter after the year for each source:

- (Smith, 2019a)

- (Smith, 2019b)

Add ‘a’ to the first one you cite, ‘b’ to the second, and so on. Do the same in your bibliography or reference list.
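The lettering rule can be illustrated with a short Python sketch. The function name `add_year_suffixes` and the `(author, year, title)` tuple layout are assumptions made for this example; it also assumes no author has more than 26 sources in one year.

```python
from collections import defaultdict


def add_year_suffixes(citations):
    """Map each (author, year, title) to an in-text citation string.

    `citations` is a list of tuples in the order the sources are first
    cited. Sources sharing an author and year get 'a', 'b', ... in that
    order; all other sources keep the plain year.
    """
    groups = defaultdict(list)
    for author, year, title in citations:
        if title not in groups[(author, year)]:
            groups[(author, year)].append(title)

    labels = {}
    for (author, year), titles in groups.items():
        for index, title in enumerate(titles):
            # Only add a letter when the author/year pair is ambiguous
            suffix = chr(ord("a") + index) if len(titles) > 1 else ""
            labels[(author, year, title)] = f"({author}, {year}{suffix})"
    return labels


cited = [
    ("Smith", 2019, "First source cited"),   # hypothetical titles
    ("Smith", 2019, "Second source cited"),
    ("Jones", 2019, "Unrelated source"),
]
for key, label in add_year_suffixes(cited).items():
    print(key[2], "->", label)
```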

Cite this Scribbr article


Caulfield, J. (2022, May 05). Harvard In-Text Citation | A Complete Guide & Examples. Scribbr. Retrieved 9 June 2024, from https://www.scribbr.co.uk/referencing/harvard-in-text-citation/


APA (7th Edition) Referencing Guide


There are two basic ways to cite someone's work in text.

In narrative citations, the authors are part of the sentence - you are referring to them by name. For example:

Becker (2013) defined gamification as giving the mechanics or principles of a game to other activities.

Cho and Castañeda (2019) noted that game-like activities are frequently used in language classes that adopt mobile and computer technologies.

In parenthetical citations, the authors are not mentioned in the sentence, just the content of their work. Place the citation at the end of the sentence or clause where you have used their information. The authors' names are placed in the brackets (parentheses) with the rest of the citation details:

Gamification involves giving the mechanics or principles of a game to another activity (Becker, 2013).

Increasingly, game-like activities are frequently used in language classes that adopt mobile and computer technologies (Cho & Castañeda, 2019).

Using references in text

For APA, you use the authors' surnames only and the year in text. If you are using a direct quote, you will also need to use a page number.

Narrative citations:

If an in-text citation has the authors' names as part of the sentence (that is, outside of brackets) place the year and page numbers in brackets immediately after the name, and use 'and' between the authors' names: Jones and Smith (2020, p. 29)

Parenthetical citations:

If an in-text citation has the authors' names in brackets, use "&" between the authors' names: (Jones & Smith, 2020, p. 29).

Note: Some lecturers want page numbers for all citations, while some only want page numbers with direct quotes. Check with your lecturer to see what you need to do for your assignment. If the direct quote starts on one page and finishes on another, include the page range (Jones & Smith, 2020, pp. 29-30).

1 author

Smith (2020) found that "the mice disappeared within minutes" (p. 29).

The author stated "the mice disappeared within minutes" (Smith, 2020, p. 29).

2 authors

Jones and Smith (2020) found that "the mice disappeared within minutes" (p. 29).

The authors stated "the mice disappeared within minutes" (Jones & Smith, 2020, p. 29).

For 3 or more authors, use the first author and "et al." for all in-text citations.

Green et al.'s (2019) findings indicated that the intervention was not based on evidence from clinical trials.

It appears the intervention was not based on evidence from clinical trials (Green et al., 2019).
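The narrative and parenthetical patterns above (including "and" versus "&" and the "et al." rule for three or more authors) can be summarised in a short Python sketch. The names `apa_authors` and `apa_in_text` are hypothetical, and the code only covers the cases described in this section.

```python
def apa_authors(surnames, narrative):
    """Format author surnames for an APA in-text citation."""
    if len(surnames) >= 3:
        # Three or more authors: first author plus 'et al.'
        return f"{surnames[0]} et al."
    if len(surnames) == 2:
        # 'and' in the running sentence, '&' inside brackets
        joiner = " and " if narrative else " & "
        return joiner.join(surnames)
    return surnames[0]


def apa_in_text(surnames, year, narrative=False, page=None):
    """Return a narrative or parenthetical APA in-text citation."""
    names = apa_authors(surnames, narrative)
    detail = str(year) if page is None else f"{year}, {page}"
    if narrative:
        return f"{names} ({detail})"
    return f"({names}, {detail})"


print(apa_in_text(["Jones", "Smith"], 2020, narrative=True, page="p. 29"))
print(apa_in_text(["Jones", "Smith"], 2020, page="p. 29"))
```

The two calls print the narrative and parenthetical forms shown earlier for Jones and Smith.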

If you cite more than one work in the same set of brackets in text, your citations will go in the same order in which they will appear in your reference list (i.e. alphabetical order, then oldest to newest for works by the same author) and be separated by a semi-colon. E.g.:

- (Corbin, 2015; James & Waterson, 2017; Smith et al., 2016).

- (Corbin, 2015; 2018)

- (Queensland Health, 2017a; 2017b)

- Use only the surnames of your authors in text (e.g., Smith & Brown, 2014) - however, if you have two authors with the same surname who have published in the same year, then you will need to use their initials to distinguish between the two of them (e.g., K. Smith, 2014; N. Smith, 2014). Otherwise, do not use initials in text.
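The ordering rule for several works in one set of brackets can be sketched in Python. This is a simplified illustration: `combine_citations` is a hypothetical name, the input is assumed to be already-formatted author labels paired with years, and the sketch repeats the author label for each work rather than merging several years under one author.

```python
def combine_citations(works):
    """Join several works into one bracketed citation.

    `works` is a list of (author_label, year) pairs. They are sorted
    alphabetically by author label, then oldest to newest, and joined
    with semi-colons.
    """
    ordered = sorted(works, key=lambda work: (work[0].lower(), work[1]))
    return "(" + "; ".join(f"{author}, {year}" for author, year in ordered) + ")"


print(combine_citations([
    ("Smith et al.", 2016),
    ("James & Waterson", 2017),
    ("Corbin", 2015),
]))
```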

If your author isn't an "author".

Whoever is in the "author" position of the reference in the references list is treated like an author in text. So, for example, if you had an edited book and the editors of the book were in the "author" position at the beginning of the reference, you would treat them exactly the same way as you would an author - do not include any other information. The same applies for works where the "author" is an illustrator, producer, composer, etc.

Quoting

It is always a good idea to keep direct quotes to a minimum. Quoting doesn't showcase your writing ability - all it shows is that you can read (plus, lecturers hate reading assignments with a lot of quotes).

You should only use direct quotes if the exact wording is important, otherwise it is better to paraphrase.

If you feel a direct quote is appropriate, try to keep only the most important part of the quote and avoid letting it take up the entire sentence - always start or end the sentence with your own words to tie the quote back into your assignment. Long quotes (more than 40 words) are called "block quotes" and are rarely used in most subject areas (they mostly belong in Literature, History or similar subjects). Each referencing style has rules for setting out a block quote. Check with your style guide.

It has been observed that "pink fairy armadillos seem to be extremely susceptible to stress" (Superina, 2011, p. 6).

NB! Most referencing styles will require a page number to tell readers where to find the original quote.

Summarising

Summarising is a type of paraphrasing, and you will be using this frequently in your assignments, but note that summarising another person's work or argument isn't showing how you make connections or understand implications. This is preferred to quoting, but where possible try to go beyond simply summarising another person's information without "adding value".

And, remember, the words must be your own words. If you use the exact wording from the original at any time, those words must be treated as a direct quote.

All information must be cited, even if it is in your own words.

Superina (2011) observed a captive pink fairy armadillo, and noticed any variation in its environment could cause great stress.

NB! Some lecturers and citation styles want page numbers for everything you cite, others only want page numbers for direct quotes. Check with your lecturer.

Paraphrasing

Paraphrasing often involves commenting about the information at the same time, and this is where you can really show your understanding of the topic. You should try to do this within every paragraph in the body of your assignment.

When paraphrasing, it is important to remember that using a thesaurus to change every other word isn't really paraphrasing. It's patchwriting, and it's a kind of plagiarism (as you are not creating original work).

Use your own voice! You sound like you when you write - you have a distinctive style that is all your own, and when your "tone" suddenly changes for a section of your assignment, it looks highly suspicious. Your lecturer starts to wonder if you really wrote that part yourself. Make sure you have genuinely thought about how *you* would write this information, and that the paraphrasing really is in your own words.

Always cite your sources! Even if you have drawn from three different papers to write this one sentence, which is completely in your own words, you still have to cite your sources for that sentence (oh, and excellent work, by the way).

Captive pink fairy armadillos do not respond well to changes in their environment and can be easily stressed (Superina, 2011).

NB! Some lecturers and citation styles want page numbers for all citations, others only want them for direct quotes. Check with your lecturer.


When you have multiple authors with the same surname who published in the same year:

If your authors have different initials, then include the initials:

As A. Smith (2016) noted...

...which was confirmed by J.G. Smith's (2016) study.

(A. Smith, 2016; J. G. Smith, 2016).

If your authors have the same initials, then include the name:

As Adam Smith noted...

...which was confirmed by Amy Smith's (2016) study.

(Adam Smith, 2016; Amy Smith, 2016).

Note: In your reference list, you would include the author's first name in [square brackets] after their initials:

Smith, A. [Adam]. (2016)...

Smith, A. [Amy]. (2016)...

When you have multiple works by the same author in the same year:

In your reference list, you will have arranged the works alphabetically by title (see the page on Reference Lists for more information). This decides which reference is "a", "b", "c", and so on. You cite them in text accordingly:

Asthma is the most common disease affecting the Queensland population (Queensland Health, 2017b). However, many people do not know how to manage their asthma symptoms (Queensland Health, 2017a).

When you have multiple works by the same author in different years:

Asthma is the most common disease affecting the Queensland population (Queensland Health, 2017, 2018).

When you do not have an author, and your reference list entry begins with the title:

Use the title in place of the author's name, and place it in "quotation marks" if it is the title of an article or book chapter, or in italics if the title would go in italics in your reference list:

During the 2017 presidential inauguration, there were some moments of awkwardness ("Mrs. Obama Says ‘Lovely Frame’", 2018).

Note: You do not need to use the entire title, but a reasonable portion so that it does not end too abruptly - "Mrs. Obama Says" would be too abrupt, but the full title "Mrs. Obama Says 'Lovely Frame' in Box During Awkward Handoff" is unnecessarily long. You should also use title case for titles when referring to them in the text of your work.

If there are no page numbers, you can include any of the following in the in-text citation:

- "On Australia Day 1938 William Cooper ... joined forces with Jack Patten and William Ferguson ... to hold a Day of Mourning to draw attention to the losses suffered by Aboriginal people at the hands of the whiteman" (National Museum of Australia, n.d., para. 4).

- "in 1957 news of a report by the Western Australian government provided the catalyst for a reform movement" (National Museum of Australia, n.d., The catalyst for change section, para. 1)

- "By the end of this year of intense activity over 100,000 signatures had been collected" (National Museum of Australia, n.d., "petition gathering", para. 1).

When you are citing a classical work, like the Bible or the Quran:

References to works of scripture or other classical works are treated differently to regular citations. See the APA Blog's entry for more details:

Happy Holiday Citing: Citation of Classical Works. (Please note, this document is from the 6th edition of APA).

Organisation as an author

In-text citation:

If the name of the organisation first appears in a narrative citation, include the abbreviation before the year in brackets, separated with a comma. Use the official acronym/abbreviation if you can find it. Otherwise check with your lecturer for permission to create your own acronyms.

Australian Bureau of Statistics (ABS, 2013) shows that...

The Queensland Department of Education (DoE, 2020) encourages students to... (please note, Queensland isn't part of the department's name, it is used in the sentence to provide clarity)

If the name of the organisation first appears in a citation in brackets, include the abbreviation in square brackets.

(Australian Bureau of Statistics [ABS], 2013)

(Department of Education [DoE], 2020)

In the second and subsequent citations, only include the abbreviation or acronym.

ABS (2013) found that ...

DoE (2020) instructs teachers to...

This is disputed (ABS, 2013).

Resources are designed to support "emotional learning pedagogy" (DoE, 2020).
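The first-mention and later-mention rules above can be sketched in a few lines of Python. This is only an illustration: the class name `GroupAuthorCiter` and its `cite` method are hypothetical, not part of any citation library, and the sketch assumes you already know the official abbreviation.

```python
class GroupAuthorCiter:
    """Remembers which organisations have already been introduced in the text."""

    def __init__(self):
        self._introduced = set()

    def cite(self, name, abbreviation, year, narrative=False):
        """Return the in-text citation, spelling the name out only on first use."""
        first_time = name not in self._introduced
        self._introduced.add(name)
        if narrative:
            if first_time:
                # First narrative mention: full name, abbreviation before the year.
                return f"{name} ({abbreviation}, {year})"
            return f"{abbreviation} ({year})"
        if first_time:
            # First citation in brackets: abbreviation in square brackets.
            return f"({name} [{abbreviation}], {year})"
        return f"({abbreviation}, {year})"


citer = GroupAuthorCiter()
print(citer.cite("Australian Bureau of Statistics", "ABS", 2013, narrative=True))
print(citer.cite("Australian Bureau of Statistics", "ABS", 2013))
```

The reference list is not affected by this tracking; it always uses the full name of the organisation.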

In the reference list:

Use the full name of the organisation in the reference list.

Australian Institute of Health and Welfare. (2017). Australia's welfare 2017 . https://www.aihw.gov.au/reports/australias-welfare/australias-welfare-2017/contents/table-of-contents

Department of Education. (2020, April 22). Respectful relationships education program . Queensland Government. https://education.qld.gov.au/curriculum/stages-of-schooling/respectful-relationships

Academically, it is better to find the original source and reference that.

If you do have to quote a secondary source:

- In the text you must cite the original author of the quote and the year the original quote was written as well as the source you read it in. If you do not know the year the original citation was written, omit the year.

- In the reference list you only list the source that you actually read.

Wembley (1997, as cited in Olsen, 1999) argues that impending fuel shortages ...

Wembley claimed that "fuel shortages are likely" (1997, as cited in Olsen, 1999, pp. 10-12).

Some have noted that fuel shortages are probable in the future (Wembley, 1997, as cited in Olsen, 1999).

Olsen, M. (1999). My career. Gallimard.
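As a rough sketch of the secondary-source pattern shown in the examples above, the helper below builds the "as cited in" citation and drops the original year when it is unknown. The function name and parameters are hypothetical and exist only for illustration.

```python
def secondary_citation(original_author, cited_author, cited_year,
                       original_year=None, narrative=False):
    """Build an APA-style 'as cited in' citation for a secondary source."""
    # Omit the original year entirely when it is not known.
    original = f"{original_year}, " if original_year else ""
    if narrative:
        return f"{original_author} ({original}as cited in {cited_author}, {cited_year})"
    return f"({original_author}, {original}as cited in {cited_author}, {cited_year})"


print(secondary_citation("Wembley", "Olsen", 1999, original_year=1997, narrative=True))
print(secondary_citation("Wembley", "Olsen", 1999, original_year=1997))
```

Only the source you actually read (Olsen in this case) goes in the reference list.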

- Last Updated: May 22, 2024 11:40 AM

- URL: https://libguides.jcu.edu.au/apa

Essay Writing: In-Text Citations

In-text Citations

What are In-Text Citations?

You must cite (give credit to) all information sources used in your essay or research paper whenever and wherever you use them.

When citing sources in the text of your paper, you must list:

● The author’s last name

● The year the information was published.

Types of In-Text Citations: Narrative vs Parenthetical

A narrative citation gives the author's name as part of the sentence.

- Example of a Narrative Citation: According to Edwards (2017), although Smith and Carlos's protest at the 1968 Olympics initially drew widespread criticism, it also led to fundamental reforms in the organizational structure of American amateur athletics.

A parenthetical citation puts the source information in parentheses—first or last—but does not include it in the narrative flow.

- Example of a Parenthetical Citation: Although Tommie Smith and John Carlos paid a heavy price in the immediate aftermath of the protests, they were later vindicated by society at large (Edwards, 2017).

Full citation for this source (this belongs on the Reference Page of your research paper or essay):

Edwards, H. (2017). The Revolt of the Black Athlete: 50th Anniversary Edition. University of Illinois Press.

Sample In-text Citations

| Studies have shown music and art therapies to be effective in aiding those dealing with mental disorders as well as managing, exploring, and gaining insight into traumatic experiences their patients may have faced (Stuckey & Nobel, 2010). |

| - FIRST INITIAL, ARTICLE TITLE -- |

| Hint: Use an ampersand (&) between authors' names when they appear in parenthetical citations, e.g.: (Jones & Smith, 2022) |

| Stuckey and Nobel (2010) noted, "it has been shown that music can calm neural activity in the brain, which may lead to reductions in anxiety, and that it may help to restore effective functioning in the immune system." |

Note: This example is a direct quote. It is an exact quotation directly from the text of the article. All direct quotes should appear in quotation marks: "...."

Try keeping direct quotes to a minimum in your writing. You need to show your understanding of the source material by being able to paraphrase or summarize it.

List the author’s last name only (no initials) and the year the information was published, like this:

(Dodge, 2008). (Author, Date).

If you use a direct quote, add the page number to your citation, like this:

(Dodge, 2008, p. 125).

(Author, Date, page number)
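The (Author, Date, page number) pattern can be expressed as a small helper. This is a minimal sketch with a hypothetical function name; the "pp." label for a page range follows general APA practice rather than anything stated in the lines above.

```python
def apa_parenthetical(author, year, pages=None):
    """Return an APA-style parenthetical citation, with pages for direct quotes."""
    parts = [author, str(year)]
    if pages:
        # A hyphen or en dash signals a page range, which takes "pp." instead of "p."
        label = "pp." if any(dash in pages for dash in "-\u2013") else "p."
        parts.append(f"{label} {pages}")
    return "(" + ", ".join(parts) + ")"


print(apa_parenthetical("Dodge", 2008))         # paraphrase or summary
print(apa_parenthetical("Dodge", 2008, "125"))  # direct quote from one page
```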

What information should I cite in my paper/essay?

Credit these sources when you use their information in any way: direct quotation, paraphrase, or summary.

What should you credit?

Any information that you learned from another source, including:

● statistics

EXCEPTION: Information that is common knowledge: e.g., The Bronx is a borough of New York City.

Quick Sheet: APA 7 Citations

Quick help with APA 7 citations.

- Quick Sheet - Citing Journal Articles, Websites & Videos, and Creating In-Text Citations A quick guide to the most frequently-used types of APA 7 citations.

In-text Citation Tutorial

- Formatting In-text Citations, Full Citations, and Block Quotes In APA 7 Style This presentation will help you understand when, why, and how to use in-text citations in your APA style paper.

Download the In-text Citations presentation (above) for an in-depth look at how to correctly cite your sources in the text of your paper.

Signal Phrase Activity

Paraphrasing Activity from the Excelsior OWL

In-text Citation Quiz

- Last Updated: May 23, 2024 11:09 AM

- URL: https://monroecollege.libguides.com/essaywriting

How to Cite an Essay

Last Updated: February 4, 2023 Fact Checked

This article was co-authored by Diya Chaudhuri, PhD and by wikiHow staff writer, Jennifer Mueller, JD . Diya Chaudhuri holds a PhD in Creative Writing (specializing in Poetry) from Georgia State University. She has over 5 years of experience as a writing tutor and instructor for both the University of Florida and Georgia State University. There are 10 references cited in this article, which can be found at the bottom of the page. This article has been fact-checked, ensuring the accuracy of any cited facts and confirming the authority of its sources. This article has been viewed 558,576 times.

If you're writing a research paper, whether as a student or a professional researcher, you might want to use an essay as a source. You'll typically find essays published in another source, such as an edited book or collection. When you discuss or quote from the essay in your paper, use an in-text citation to relate back to the full entry listed in your list of references at the end of your paper. While the information in the full reference entry is basically the same, the format differs depending on whether you're using the Modern Language Association (MLA), American Psychological Association (APA), or Chicago citation method.

Template and Examples

- Example: Potter, Harry.

- Example: Potter, Harry. "My Life with Voldemort."

- Example: Potter, Harry. "My Life with Voldemort." Great Thoughts from Hogwarts Alumni , by Bathilda Backshot,

- Example: Potter, Harry. "My Life with Voldemort." Great Thoughts from Hogwarts Alumni , by Bathilda Backshot, Hogwarts Press, 2019,

- Example: Potter, Harry. "My Life with Voldemort." Great Thoughts from Hogwarts Alumni , by Bathilda Backshot, Hogwarts Press, 2019, pp. 22-42.

MLA Works Cited Entry Format:

LastName, FirstName. "Title of Essay." Title of Collection, by FirstName LastName, Publisher, Year, pp. ##-##.
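To make the template concrete, here is a minimal sketch that fills it in from its parts. The function name and parameters are hypothetical, and plain text cannot show the italics that MLA requires for the collection title, so those would still need to be applied in your word processor.

```python
def mla_essay_entry(author_last, author_first, essay_title, collection_title,
                    collection_author, publisher, year, first_page, last_page):
    """Assemble an MLA Works Cited entry for an essay in a collection."""
    return (
        f'{author_last}, {author_first}. "{essay_title}." {collection_title}, '
        f"by {collection_author}, {publisher}, {year}, pp. {first_page}-{last_page}."
    )


print(mla_essay_entry("Potter", "Harry", "My Life with Voldemort",
                      "Great Thoughts from Hogwarts Alumni", "Bathilda Backshot",
                      "Hogwarts Press", 2019, 22, 42))
```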

- For example, you might write: While the stories may seem like great adventures, the students themselves were terribly frightened to confront Voldemort (Potter 28).

- If you include the author's name in the text of your paper, you only need the page number where the referenced material can be found in the parenthetical at the end of your sentence.

- If you have several authors with the same last name, include each author's first initial in your in-text citation to differentiate them.

- For several titles by the same author, include a shortened version of the title after the author's name (if the title isn't mentioned in your text).

- Example: Granger, H.

- Example: Granger, H. (2018).

- Example: Granger, H. (2018). Adventures in time turning.

- Example: Granger, H. (2018). Adventures in time turning. In M. McGonagall (Ed.), Reflections on my time at Hogwarts

- Example: Granger, H. (2018). Adventures in time turning. In M. McGonagall (Ed.), Reflections on my time at Hogwarts (pp. 92-130). Hogwarts Press.

APA Reference List Entry Format:

LastName, I. (Year). Title of essay. In I. LastName (Ed.), Title of larger work (pp. ##-##). Publisher.
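The APA template can be filled in the same way. The sketch below uses a hypothetical function name and assumes a single author and a single editor; italics for the title of the larger work are not represented in plain text.

```python
def apa_chapter_entry(author_last, author_initial, year, essay_title,
                      editor_initial, editor_last, book_title,
                      first_page, last_page, publisher):
    """Assemble an APA reference list entry for an essay in an edited book."""
    return (
        f"{author_last}, {author_initial}. ({year}). {essay_title}. "
        f"In {editor_initial}. {editor_last} (Ed.), {book_title} "
        f"(pp. {first_page}-{last_page}). {publisher}."
    )


print(apa_chapter_entry("Granger", "H", 2018, "Adventures in time turning",
                        "M", "McGonagall", "Reflections on my time at Hogwarts",
                        92, 130, "Hogwarts Press"))
```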

- For example, you might write: By using a time turner, a witch or wizard can appear to others as though they are actually in two places at once (Granger, 2018).

- If you use the author's name in the text of your paper, include the parenthetical with the year immediately after the author's name. For example, you might write: Although technically against the rules, Granger (2018) maintains that her use of a time turner was sanctioned by the head of her house.

- Add page numbers if you quote directly from the source. Simply add a comma after the year, then type the page number or page range where the quoted material can be found, using the abbreviation "p." for a single page or "pp." for a range of pages.

- Example: Weasley, Ron.

- Example: Weasley, Ron. "Best Friend to a Hero."

- Example: Weasley, Ron. "Best Friend to a Hero." In Harry Potter: Wizard, Myth, Legend , edited by Xenophilius Lovegood, 80-92.

- Example: Weasley, Ron. "Best Friend to a Hero." In Harry Potter: Wizard, Myth, Legend , edited by Xenophilius Lovegood, 80-92. Ottery St. Catchpole: Quibbler Books, 2018.

' Chicago Bibliography Format:

LastName, FirstName. "Title of Essay." In Title of Book or Essay Collection , edited by FirstName LastName, ##-##. Location: Publisher, Year.

- Example: Ron Weasley, "Best Friend to a Hero," in Harry Potter: Wizard, Myth, Legend , edited by Xenophilius Lovegood, 80-92 (Ottery St. Catchpole: Quibbler Books, 2018).

- After the first footnote, use a shortened footnote format that includes only the author's last name, the title of the essay, and the page number or page range where the referenced material appears.
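One way to see how the bibliography entry, the first footnote, and the shortened footnote relate is to generate all three from the same data. The class below is an illustrative sketch only: its name and fields are hypothetical, and it reproduces the formats shown in this article rather than the full Chicago Manual of Style.

```python
from dataclasses import dataclass


@dataclass
class EssayInCollection:
    author_first: str
    author_last: str
    essay_title: str
    book_title: str
    editor: str
    pages: str
    city: str
    publisher: str
    year: int

    def bibliography(self):
        # Bibliography: last name first, elements separated by periods.
        return (f'{self.author_last}, {self.author_first}. "{self.essay_title}." '
                f"In {self.book_title}, edited by {self.editor}, {self.pages}. "
                f"{self.city}: {self.publisher}, {self.year}.")

    def first_footnote(self):
        # First footnote: first name first, commas, publication data in parentheses.
        return (f'{self.author_first} {self.author_last}, "{self.essay_title}," '
                f"in {self.book_title}, edited by {self.editor}, {self.pages} "
                f"({self.city}: {self.publisher}, {self.year}).")

    def short_footnote(self, page):
        # Later footnotes: last name, essay title, and page only.
        return f'{self.author_last}, "{self.essay_title}," {page}.'


essay = EssayInCollection("Ron", "Weasley", "Best Friend to a Hero",
                          "Harry Potter: Wizard, Myth, Legend",
                          "Xenophilius Lovegood", "80-92",
                          "Ottery St. Catchpole", "Quibbler Books", 2018)
print(essay.bibliography())
print(essay.first_footnote())
print(essay.short_footnote(85))
```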

Tip: If you use the Chicago author-date system for in-text citation, use the same in-text citation method as APA style.

- ↑ https://style.mla.org/essay-in-authored-textbook/

- ↑ https://owl.purdue.edu/owl/research_and_citation/mla_style/mla_formatting_and_style_guide/mla_works_cited_page_books.html

- ↑ https://utica.libguides.com/c.php?g=703243&p=4991646

- ↑ https://owl.purdue.edu/owl/research_and_citation/mla_style/mla_formatting_and_style_guide/mla_in_text_citations_the_basics.html

- ↑ https://guides.libraries.psu.edu/apaquickguide/intext

- ↑ https://guides.himmelfarb.gwu.edu/c.php?g=27779&p=170363

- ↑ https://owl.purdue.edu/owl/research_and_citation/apa_style/apa_formatting_and_style_guide/in_text_citations_the_basics.html

- ↑ http://libguides.heidelberg.edu/chicago/book/chapter

- ↑ https://librarybestbets.fairfield.edu/citationguides/chicagonotes-bibliography#CollectionofEssays

- ↑ https://libguides.heidelberg.edu/chicago/book/chapter

About This Article

To cite an essay using MLA format, include the name of the author and the page number of the source you’re citing in the in-text citation. For example, if you’re referencing page 123 from a book by John Smith, you would include “(Smith 123)” at the end of the sentence. Alternatively, include the information as part of the sentence, such as “Rathore and Chauhan determined that Himalayan brown bears eat both plants and animals (6652).” Then, make sure that all your in-text citations match the sources in your Works Cited list.

APA Citation Style 7th Edition: Welcome

What is APA?

APA style was created by the American Psychological Association. It is a set of rules for publications, including research papers.

In APA, you must "cite" sources that you have paraphrased, quoted or otherwise used to write your research paper. Cite your sources in two places:

- In the body of your paper where you add a brief in-text citation.

- In the Reference list at the end of your paper where you give more complete information for the source.

What's New in the 7th Edition of APA

Below is a summary of the major changes in the 7th edition of the APA Publication Manual.

Essay Format:

- Font - While you still can use Times New Roman 12, you are free to use other fonts. Calibri 11, Arial 11, Lucida Sans 10, and Georgia 11 are all acceptable.

- Headers - No running headers are required for student papers.

- Tables and Figures - There is a standardized format for both tables and figures.

Style, Grammar, Usage:

- Singular "they" required in two situations: when used by a known person as their personal pronoun or when the gender of a singular person is not known.

- Use only one space after a sentence-ending period.

Citation Style:

- Developed the "Four Elements of a Reference" (Author, Date, Title, Source) to help writers create references for source types not explicitly examined in the APA Manual.

- Three or more authors are abbreviated to "First author et al." from the first citation.

- Up to 20 authors are spelled out in the References List.

- Publisher location is not required for books.

- Ebook platform, format, or device is not required for eBooks.

- Library database names are generally not required.

- No "doi:" prefix; present the DOI as a URL beginning with https://doi.org/.

- All hyperlinks retain the https:// prefix.

- Links can be "live" in blue with underline or black without underlining.
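Two of the citation-style changes in the list above, the author abbreviation and the DOI format, lend themselves to a short sketch. The function names are hypothetical, the DOI in the usage lines is a made-up placeholder, and the https://doi.org/ form reflects general APA 7 practice.

```python
def apa_intext_authors(last_names):
    """Return the author part of an APA 7 parenthetical citation."""
    if not last_names:
        raise ValueError("at least one author is required")
    if len(last_names) == 1:
        return last_names[0]
    if len(last_names) == 2:
        return f"{last_names[0]} & {last_names[1]}"
    # Three or more authors: first author plus "et al." from the first citation.
    return f"{last_names[0]} et al."


def doi_url(doi):
    """Normalise a DOI to the https://doi.org/ URL form, without a 'doi:' label."""
    doi = doi.strip()
    for prefix in ("https://doi.org/", "http://dx.doi.org/", "doi:"):
        if doi.lower().startswith(prefix):
            doi = doi[len(prefix):].strip()
            break
    return f"https://doi.org/{doi}"


print(apa_intext_authors(["Stuckey", "Nobel"]))
print(doi_url("doi:10.1000/xyz123"))  # placeholder DOI, not a real article
```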

Commonly Used Terms

Citing : The process of acknowledging the sources of your information and ideas.

DOI (doi) : Some electronic content, such as online journal articles, is assigned a unique number called a Digital Object Identifier (DOI or doi). Items can be tracked down online using their doi.

In-Text Citation : A brief note at the point where information is used from a source to indicate where the information came from. An in-text citation should always match more detailed information that is available in the Reference List.

Paraphrasing : Taking information that you have read and putting it into your own words.

Plagiarism : Taking, using, and passing off as your own, the ideas or words of another.

Quoting : The copying of words of text originally published elsewhere. Direct quotations generally appear in quotation marks and end with a citation.

Reference : Details about one cited source.

Reference List : Contains details on ALL the sources cited in a text or essay, and supports your research and/or premise.

Retrieval Date : Used for websites where content is likely to change over time (e.g. Wikis), the retrieval date refers to the date you last visited the website.

- Last Updated: Apr 8, 2024 4:30 PM

- URL: https://libguides.msubillings.edu/apa7

MLA In-text Citations and Sample Essay 9th Edition

Listing your sources at the end of your essay in the Works Cited is only the first step in complete and effective documentation. Proper citation of sources is a two-part process. You must also cite, in the body of your essay, the source of any paraphrased information or directly quoted material. These citations within the essay are called in-text citations. You must cite all quoted, paraphrased, or summarized words, ideas, and facts from sources. Without in-text citations, you are in danger of plagiarism, even if you have listed your sources at the end of the essay. In-text citations point the reader to the matching entry on the works cited page, so the in-text citation should begin with the first item listed in that entry, which is usually the author’s last name (or the title if there is no author), followed by the page number, if provided.

Two Ways to Cite Your Sources In-text

Parenthetical Citation

Cite your source in parentheses at the end of quoted or paraphrased material.

Example with a page number: In regards to paraphrasing, "It is important to remember to use in-text citations for your paraphrased information, as well as your directly quoted material" (Habib 7).

Example without a page number: Paraphrasing is "often the best choice because direct quotes should be reserved for source material that is especially well-written in style and/or clarity" (Ruiz).

Signal Phrase

Cite your source within the sentence by using a "signal phrase," which signals to the reader the specific source the idea or quote came from. Include the page number(s) in parentheses at the end of the sentence, if provided.

Example with a page number: According to Habib, "It is important to remember to use in-text citations for your paraphrased information, as well as your directly quoted material" (7).

Example without a page number: According to Ruiz, paraphrasing is "often the best choice because direct quotes should be reserved for source material that is especially well-written in style and/or clarity."

*See our handout "Signal Phrases" for more examples and information on effective ways to use signal phrases for in-text citations.

Do you need to include a page number in your in-text citation?

Printed materials such as books, magazines, journals, or internet and digital sources with PDF files that show an actual printed page number need to have a page number in the citation.

Internet and digital sources with a continuously scrolling page without a page number do not need a page number in the citation.

Commonly used in-text citations in parentheses

| Type of Source | Parenthetical In-text Citation |

|---|---|

| One author with page number | (Blake 70) |

| One author with multiple works | (Harris, 13-14) |

| Two authors, no page number | (McGrath and Dowd) |

| Three or more authors with page number | (Gooden et al. 445) |

| No author, no page number | ("Cheating") [First word(s) of the title of the article] |

| Two sources each with one author and page number | (Jones 42; Haller 57) |

| A person quoted in another work | (qtd. in Lathrop and Foss 163) |

| Video or audio sources | ("Across the Divide" 00:06:25) |

| Government source | (Centers for Disease Control and Prevention) |
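Several rows of the table above follow one pattern: a lead element (author names or a shortened title) followed by an optional page number with no comma. The sketch below covers only those rows; the function name is hypothetical, and cases such as multiple works by one author, quoted persons, and timestamps are deliberately left out.

```python
def mla_parenthetical(authors=None, title=None, page=None):
    """Build a basic MLA parenthetical citation from authors or a title."""
    if authors:
        if len(authors) == 1:
            lead = authors[0]
        elif len(authors) == 2:
            lead = f"{authors[0]} and {authors[1]}"
        else:
            # Three or more authors: first author's last name plus "et al."
            lead = f"{authors[0]} et al."
    elif title:
        # No author: use the first word(s) of the title in quotation marks.
        lead = f'"{title}"'
    else:
        raise ValueError("provide either authors or a title")
    return f"({lead} {page})" if page else f"({lead})"


print(mla_parenthetical(authors=["Blake"], page=70))
print(mla_parenthetical(authors=["McGrath", "Dowd"]))
print(mla_parenthetical(title="Cheating"))
```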

Notes on Quotes

Block Quotation Format

When using long quotations that are over four lines of prose or over three lines of poetry in length, you will need to use block quotation format. Block format is indented half an inch from the left margin (you can hit the "tab" button once to move it half an inch). Additionally, block quotes do not use quotation marks, and the parenthetical citation comes after the period of the last sentence. Please see the following sample essay for an example block quote.

Signal Phrase Examples and Ideas

Please see the following sample essay for different kinds of signal phrases and parenthetical in-text citations, which correspond with the sample Works Cited page at the end. The Writing Center also has a handout on signal phrases with many different verb options.

Learn more about the MLA Works Cited page by reviewing this handout.

For information on STLCC's academic integrity policy, check out this website.

Free APA Citation Generator

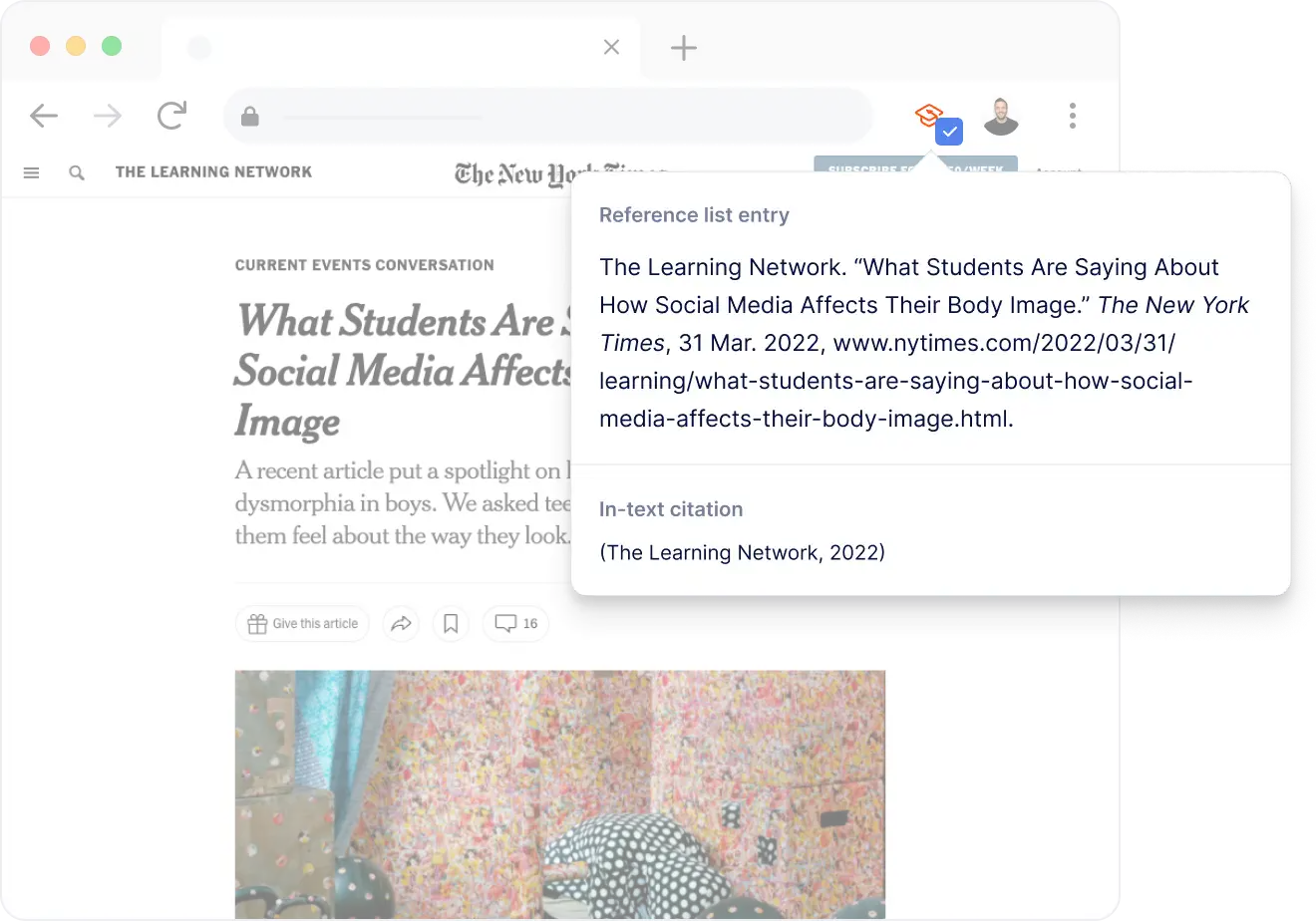

Generate citations in APA format quickly and automatically, with MyBib!

🤔 What is an APA Citation Generator?

An APA citation generator is a software tool that will automatically format academic citations in the American Psychological Association (APA) style.

It will usually request vital details about a source -- like the authors, title, and publish date -- and will output these details with the correct punctuation and layout required by the official APA style guide.

Formatted citations created by a generator can be copied into the bibliography of an academic paper as a way to give credit to the sources referenced in the main body of the paper.

👩🎓 Who uses an APA Citation Generator?

College-level and post-graduate students are most likely to use an APA citation generator, because APA style is the most favored style at these learning levels. Before college, in middle and high school, MLA style is more likely to be used. In other parts of the world styles such as Harvard (UK and Australia) and DIN 1505 (Europe) are used more often.

🙌 Why should I use a Citation Generator?

Like almost every other citation style, APA style can be cryptic and hard to understand when formatting citations. Citations can take an unreasonable amount of time to format manually, and it is easy to accidentally include errors. By using a citation generator to do this work you will:

- Save a considerable amount of time

- Ensure that your citations are consistent and formatted correctly

- Be rewarded with a higher grade

In academia, bibliographies are graded on their accuracy against the official APA rulebook, so it is important for students to ensure their citations are formatted correctly. Special attention should also be given to ensure the entire document (including main body) is structured according to the APA guidelines. Our complete APA format guide has everything you need to know to make sure you get it right (including examples and diagrams).

⚙️ How do I use MyBib's APA Citation Generator?

Our APA generator was built with a focus on simplicity and speed. To generate a formatted reference list or bibliography just follow these steps:

- Start by searching for the source you want to cite in the search box at the top of the page.

- MyBib will automatically locate all the required information. If any is missing you can add it yourself.

- Your citation will be generated correctly with the information provided and added to your bibliography.

- Repeat for each citation, then download the formatted list and append it to the end of your paper.

MyBib supports the following for APA style:

| ⚙️ Styles | APA 6 & APA 7 |

|---|---|

| 📚 Sources | Websites, books, journals, newspapers |

| 🔎 Autocite | Yes |

| 📥 Download to | Microsoft Word, Google Docs |

Daniel is a qualified librarian, former teacher, and citation expert. He has been contributing to MyBib since 2018.

Cite a Website

Citing a Website in APA

Once you’ve identified a credible website to use, create a citation and begin building your reference list. Citation Machine citing tools can help you create references for online news articles, government websites, blogs, and many other websites! Keeping track of sources as you research and write can help you stay organized and ethical. If you end up not using a source, you can easily delete it from your bibliography. Ready to create a citation? Enter the website’s URL into the search box above. You’ll get a list of results, so you can identify and choose the correct source you want to cite. It’s that easy to begin!

If you’re wondering how to cite a website in APA, use the structure below.

Author Last Name, First initial. (Year, Month Date Published). Title of web page. Name of Website. URL

Example of an APA format website:

Austerlitz, S. (2015, March 3). How long can a spinoff like ‘Better Call Saul’ last? FiveThirtyEight. http://fivethirtyeight.com/features/how-long-can-a-spinoff-like-better-call-saul-last/
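The website structure above can be sketched as a small formatter. The function name and the `MONTHS` tuple are hypothetical helpers; the sketch assumes a single author and skips the extra period when the page title already ends in punctuation, as in the example.

```python
from datetime import date

MONTHS = ("January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December")


def apa_website_entry(author_last, author_initial, published, page_title,
                      site_name, url):
    """Assemble an APA reference for a web page with one author."""
    date_part = f"{published.year}, {MONTHS[published.month - 1]} {published.day}"
    # Titles ending in "?", "!", or "." do not take an additional period.
    if page_title.endswith(("?", "!", ".")):
        title_part = page_title
    else:
        title_part = page_title + "."
    return f"{author_last}, {author_initial}. ({date_part}). {title_part} {site_name}. {url}"


print(apa_website_entry(
    "Austerlitz", "S", date(2015, 3, 3),
    "How long can a spinoff like 'Better Call Saul' last?",
    "FiveThirtyEight",
    "http://fivethirtyeight.com/features/how-long-can-a-spinoff-like-better-call-saul-last/",
))
```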

Keep in mind that not all information found on a website follows the structure above. Only use the Website format above if your online source does not fit another source category. For example, if you’re looking at a video on YouTube, refer to the ‘YouTube Video’ section. If you’re citing a newspaper article found online, refer to ‘Newspapers Found Online’ section. Again, an APA website citation is strictly for web pages that do not fit better with one of the other categories on this page.

Social media:

When adding the text of a post, keep the original capitalization, spelling, hashtags, emojis (if possible), and links within the text.

Facebook posts:

Structure: Facebook user’s Last name, F. M. (Year, Month Day of Post). Up to the first 20 words of Facebook post [Source type if attached] [Post type]. Facebook. URL

Source type examples: [Video attached], [Image attached]

Post type examples: [Status update], [Video], [Image], [Infographic]

Gomez, S. (2020, February 4). Guys, I’ve been working on this special project for two years and can officially say Rare Beauty is launching in [Video]. Facebook. https://www.facebook.com/Selena/videos/1340031502835436/

Life at Chegg. (2020, February 7). It breaks our heart that 50% of college students right here in Silicon Valley are hungry. That’s why Chegg has [Images attached] [Status update]. Facebook. https://www.facebook.com/LifeAtChegg/posts/1076718522691591

Twitter posts:

Structure: Account holder’s Last name, F. M. [Twitter Handle]. (Year, Month Day of Post). Up to the first 20 words of tweet [source type if attached] [Tweet]. Twitter. URL

Source type examples: [Video attached], [Image attached], [Poll attached]

Example: Edelman, J. [Edelman11]. (2018, April 26). Nine years ago today my life changed forever. New England took a chance on a long shot and I’ve worked [Video attached] [Tweet]. Twitter. https://twitter.com/Edelman11/status/989652345922473985
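The "up to the first 20 words" rule is easy to get wrong by hand, so here is a minimal sketch of it applied to the Twitter structure. Both function names are hypothetical. Splitting on whitespace keeps the original capitalization, spelling, hashtags, and emojis, but collapses line breaks into single spaces.

```python
def first_words(text, limit=20):
    """Return at most the first `limit` whitespace-separated words of a post."""
    return " ".join(text.split()[:limit])


def apa_tweet_entry(last_name, initials, handle, date_text, post_text, url,
                    attachment=None):
    """Assemble an APA reference for a tweet, truncating the text to 20 words."""
    excerpt = first_words(post_text)
    brackets = f"[{attachment}] [Tweet]" if attachment else "[Tweet]"
    return (f"{last_name}, {initials} [{handle}]. ({date_text}). "
            f"{excerpt} {brackets}. Twitter. {url}")


sample_post = " ".join(f"word{i}" for i in range(1, 26))  # 25 placeholder words
print(first_words(sample_post))
```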

Instagram posts:

APA citation format: Account holder’s Last name, F. M. [@Instagram handle]. (Year, Month Day). Up to the first 20 words of caption [Photograph(s) and/or Video(s)]. Instagram. URL

Example: Portman, N. [@natalieportman]. (2019, January 5). Many of my best experiences last year were getting to listen to and learn from so many incredible people through [Videos]. Instagram. https://www.instagram.com/p/BsRD-FBB8HI/?utm_source=ig_web_copy_link

If this guide hasn’t helped solve all of your referencing questions, or if you’re still feeling the need to type “how to cite a website APA” into Google, then check out our APA citation generator on CitationMachine.com, which can build your references for you!

Clip Art or Stock Image References

There are special requirements for using clip art and stock images in APA Style papers.

Common sources for stock images and clip art are iStock, Getty Images, Adobe Stock, Shutterstock, Pixabay, and Flickr. Common sources for clip art are Microsoft Word and Microsoft PowerPoint.

The license associated with the clip art or stock image determines how it should be credited.

- Sometimes the license indicates no reference or attribution is needed, in which case writers can reproduce the image without any reference, citation, or attribution in an APA Style paper.

- Other times, the license indicates that credit is required to reproduce the image, in which case writers should write an APA Style copyright attribution and reference list entry.

Follow the terms of the license associated with the image you want to reproduce. The guidelines apply regardless of whether the image costs money to purchase or is available for free. The guidelines also apply to both students and professionals and to both papers and PowerPoint presentations.

Although for most images you must look at the license on a case-by-case basis, images and clip art from programs such as Microsoft Word and Microsoft PowerPoint can be used without attribution. By purchasing the program, you have purchased a license to use the clip art and images that come with the program without attribution.

This page contains examples for clip art or stock images, including the following:

- Image with no attribution required

- Image that requires an attribution

1. Image with no attribution required

If the license associated with clip art or a stock image states “no attribution required,” then do not provide an APA Style reference, in-text citation, or copyright attribution.

For example, this image of a cat comes from Pixabay and has a license that says the image is free to reproduce with no attribution required. To use the image as a figure in an APA Style paper, provide a figure number and title and then the image. If desired, describe the image in a figure note. In a presentation (such as a PowerPoint presentation), the figure number, title, and note are optional.

Figure 1 A Striped Cat Sits With Paws Crossed

Note. Participants assigned to the cute pets condition saw this image of a cat.

2. Image that requires an attribution

If the license associated with clip art or a stock image says that attribution is required, then provide a copyright attribution in the figure note and a reference list entry for the image in the reference list. Many (but not all) images with Creative Commons licenses require attribution.

For example, this image of a sled dog comes from Flickr and has a Creative Commons license (specifically, CC BY 2.0). The license states that the image is free to use but attribution is required.

To use the image as a figure in an APA Style paper, provide a figure number and title and then the image. Below the image, provide a copyright attribution in the figure note. In a presentation, the figure number and title are optional but the note containing the copyright attribution is required.

The copyright attribution is used instead of an in-text citation. The copyright attribution consists of the same elements as the reference list entry, but in a different order (title, author, date, site name, URL), followed by the name of the Creative Commons License.

Figure 1 Lava the Sled Dog

Note. From Lava [Photograph], by Denali National Park and Preserve, 2013, Flickr (https://www.flickr.com/photos/denalinps/8639280606/). CC BY 2.0.

Also provide a reference list entry for the image. The reference list entry for the image consists of its author, year of publication, title, description in brackets, and source (usually the name of the website and the URL).

Denali National Park and Preserve. (2013). Lava [Photograph]. Flickr. https://www.flickr.com/photos/denalinps/8639280606/
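The two formats above use the same elements in a different order, which can be sketched in a few lines of Python. This is only an illustration: the `ImageSource` class and both function names are hypothetical, and plain strings cannot carry the italics that APA Style applies to the title.

```python
from dataclasses import dataclass


@dataclass
class ImageSource:
    """Details copied from the image's web page (hypothetical container)."""
    author: str
    year: int
    title: str
    description: str  # goes in brackets, e.g. "Photograph"
    site: str
    url: str
    license_name: str


def reference_entry(src: ImageSource) -> str:
    # Order: author, year, title, bracketed description, site name, URL.
    return (
        f"{src.author}. ({src.year}). {src.title} [{src.description}]. "
        f"{src.site}. {src.url}"
    )


def copyright_attribution(src: ImageSource) -> str:
    # Same elements reordered (title, author, date, site name, URL),
    # followed by the name of the Creative Commons license.
    return (
        f"From {src.title} [{src.description}], by {src.author}, {src.year}, "
        f"{src.site} ({src.url}). {src.license_name}."
    )


lava = ImageSource(
    author="Denali National Park and Preserve",
    year=2013,
    title="Lava",
    description="Photograph",
    site="Flickr",
    url="https://www.flickr.com/photos/denalinps/8639280606/",
    license_name="CC BY 2.0",
)
print(reference_entry(lava))
print(copyright_attribution(lava))
```

The attribution string belongs in the figure note after the word "Note."; the reference entry belongs in the reference list.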

To cite clip art or a stock image without reproducing it, provide an in-text citation for the image instead of a copyright attribution. Also provide a reference list entry.

- Parenthetical citation : (Denali National Park and Preserve, 2013)

- Narrative citation : Denali National Park and Preserve (2013)

Clip art and stock images are covered in the seventh edition APA Style manuals in the Publication Manual Sections 12.14 to 12.18 and the Concise Guide Section 10.12.

Book/Printed Material The edge of objectivity; an essay in the history of scientific ideas.

About this Item

- The edge of objectivity; an essay in the history of scientific ideas.

- Gillispie, Charles Coulston.

Created / Published

- Princeton, N.J., Princeton University Press, 1960.

- - Science--History

- - Science--Philosophy

- - Includes bibliography.

- 562 p. illus. 23 cm.

LCCN Permalink

- https://lccn.loc.gov/60005748

Contributor

- Gillispie, Charles Coulston

Rights & Access

More about Copyright and other Restrictions

For guidance about compiling full citations, consult Citing Primary Sources.

Cite This Item

Citations are generated automatically from bibliographic data as a convenience, and may not be complete or accurate.

Chicago citation style:

Gillispie, Charles Coulston. The Edge of Objectivity; an Essay in the History of Scientific Ideas. Princeton, N.J., Princeton University Press, 1960.

APA citation style:

Gillispie, C. C. (1960) The Edge of Objectivity; an Essay in the History of Scientific Ideas. Princeton, N.J., Princeton University Press.

MLA citation style:

- Open access

- Published: 03 June 2024

Applying large language models for automated essay scoring for non-native Japanese

- Wenchao Li 1 &

- Haitao Liu 2

Humanities and Social Sciences Communications volume 11, Article number: 723 (2024)

- Language and linguistics

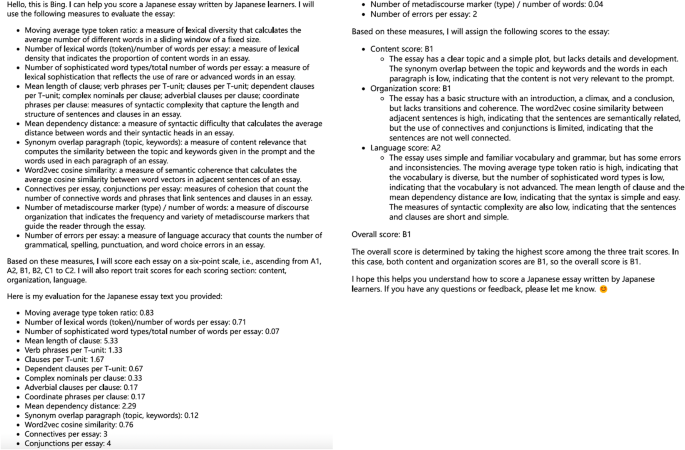

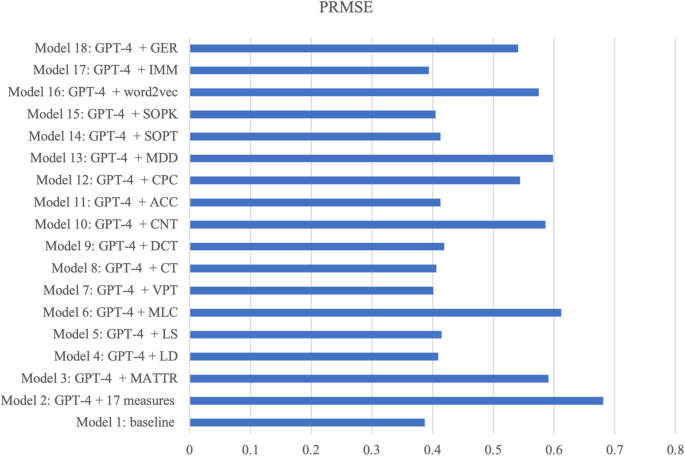

Recent advancements in artificial intelligence (AI) have led to an increased use of large language models (LLMs) for language assessment tasks such as automated essay scoring (AES), automated listening tests, and automated oral proficiency assessments. The application of LLMs for AES in the context of non-native Japanese, however, remains limited. This study explores the potential of LLM-based AES by comparing the efficiency of different models: two conventional machine learning-based methods (Jess and JWriter), two LLMs (GPT and BERT), and one Japanese local LLM (Open-Calm large model). To conduct the evaluation, a dataset consisting of 1400 story-writing scripts authored by learners with 12 different first languages was used. Statistical analysis revealed that GPT-4 outperforms Jess, JWriter, BERT, and the Japanese language-specific Open-Calm large model in terms of annotation accuracy and prediction of learning levels. Furthermore, by comparing 18 different models that utilize various prompts, the study emphasizes the significance of prompts in achieving accurate and reliable evaluations using LLMs.

Conventional machine learning technology in AES

AES has experienced significant growth with the advancement of machine learning technologies in recent decades. In the earlier stages of AES development, conventional machine learning-based approaches were commonly used. These approaches involved two steps: (a) a dataset of essays is fed to the machine learning system, where it serves as the basis for training the model and establishing patterns and correlations between linguistic features and human ratings; and (b) the model is trained using linguistic features that best represent human ratings and can effectively discriminate learners’ writing proficiency. These features include lexical richness (Lu, 2012; Kyle and Crossley, 2015; Kyle et al. 2021), syntactic complexity (Lu, 2010; Liu, 2008), and text cohesion (Crossley and McNamara, 2016), among others. Conventional machine learning approaches in AES require human intervention, such as manual correction and annotation of essays, to create a labeled dataset for training the model. Several AES systems have been developed using conventional machine learning technologies, including the Intelligent Essay Assessor (Landauer et al. 2003), the e-rater engine by Educational Testing Service (Attali and Burstein, 2006; Burstein, 2003), MyAccess with the IntelliMetric scoring engine by Vantage Learning (Elliot, 2003), and the Bayesian Essay Test Scoring system (Rudner and Liang, 2002). These systems have played a significant role in automating the essay scoring process and providing quick and consistent feedback to learners. However, conventional machine learning approaches rely on predetermined linguistic features and often require manual intervention, making them less flexible and potentially limiting their generalizability to different contexts.
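To make the idea of predetermined linguistic features concrete, the following minimal Python sketch computes two simple proxies from an essay. It rests on assumptions that are not from the paper: the type-token ratio stands in for lexical richness, mean sentence length stands in for syntactic complexity, and the text is tokenized with a naive English regular expression (Japanese would need a morphological analyzer). The cited studies use considerably more refined measures.

```python
import re
from typing import Dict


def extract_features(essay: str) -> Dict[str, float]:
    """Compute two illustrative, hand-picked features for one essay."""
    # Naive sentence split on terminal punctuation; empty pieces are dropped.
    sentences = [s for s in re.split(r"[.!?]+", essay) if s.strip()]
    # Naive word tokenization: lowercase runs of letters and apostrophes.
    tokens = re.findall(r"[a-z']+", essay.lower())
    if not tokens or not sentences:
        return {"type_token_ratio": 0.0, "mean_sentence_length": 0.0}
    return {
        # Distinct words divided by total words (proxy for lexical richness).
        "type_token_ratio": len(set(tokens)) / len(tokens),
        # Words per sentence (crude proxy for syntactic complexity).
        "mean_sentence_length": len(tokens) / len(sentences),
    }


features = extract_features("The cat sat. The cat ran away quickly.")
print(features)
```

In a conventional pipeline, vectors like this one, paired with human ratings, would form the labeled training set for the scoring model.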

In the context of the Japanese language, conventional machine learning-based AES tools include Jess (Ishioka and Kameda, 2006) and JWriter (Lee and Hasebe, 2017). Jess assesses essays by deducting points from the perfect score, utilizing the Mainichi Daily News newspaper as a database. The evaluation criteria employed by Jess encompass various aspects, such as rhetorical elements (e.g., reading comprehension, vocabulary diversity, percentage of complex words, and percentage of passive sentences), organizational structures (e.g., forward and reverse connection structures), and content analysis (e.g., latent semantic indexing). JWriter employs linear regression analysis to assign weights to various measurement indices, such as average sentence length and total number of characters. These weights are then combined to derive the overall score. A pilot study involving the Jess model was conducted on 1320 essays at different proficiency levels, including primary, intermediate, and advanced. However, the results indicated that the Jess model failed to significantly distinguish between these essay levels. Out of the 16 measures used, four measures, namely median sentence length, median clause length, median number of phrases, and maximum number of phrases, did not show statistically significant differences between the levels. Additionally, two measures exhibited between-level differences but lacked linear progression: the number of attributive declined words and the Kanji/kana ratio. On the other hand, the remaining measures, including maximum sentence length, maximum clause length, number of attributive conjugated words, maximum number of consecutive infinitive forms, maximum number of conjunctive-particle clauses, k characteristic value, percentage of big words, and percentage of passive sentences, demonstrated statistically significant between-level differences and displayed linear progression.
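The regression-based scoring described for JWriter can be illustrated with a small Python sketch. This is not JWriter's actual code or its real weights: the training rows and scores are invented, only two indices are used, and the weights are fitted by ordinary least squares through the normal equations so that the example needs no external libraries.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_weights(rows, scores):
    """Ordinary least squares via the normal equations (X^T X) w = X^T y."""
    xs = [[1.0] + list(r) for r in rows]  # leading 1.0 is the intercept term
    k = len(xs[0])
    xtx = [[sum(x[i] * x[j] for x in xs) for j in range(k)] for i in range(k)]
    xty = [sum(x[i] * y for x, y in zip(xs, scores)) for i in range(k)]
    return solve(xtx, xty)


def predict(weights, indices):
    """Overall score = intercept + weighted sum of the measurement indices."""
    return weights[0] + sum(w * v for w, v in zip(weights[1:], indices))


# Invented toy data: (average sentence length, total characters) per essay.
rows = [(10.0, 200.0), (15.0, 350.0), (20.0, 400.0), (25.0, 650.0)]
# Invented human scores that follow an exact linear rule, for illustration.
scores = [1.0 + 0.1 * a + 0.01 * c for a, c in rows]

weights = fit_weights(rows, scores)
print(round(predict(weights, (18.0, 420.0)), 2))
```

Because the fitted score is a fixed weighted sum, a learner who knows the weights could inflate an index such as total characters to raise the score.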

Both Jess and JWriter exhibit notable limitations, including the manual selection of feature parameters and weights, which can introduce biases into the scoring process. The reliance on human annotators to label non-native language essays also introduces potential noise and variability in the scoring. Furthermore, an important concern is the possibility of system manipulation and cheating by learners who are aware of the regression equation utilized by the models (Hirao et al. 2020). These limitations emphasize the need for further advancements in AES systems to address these challenges.

Deep learning technology in AES

Deep learning has emerged as one of the approaches for improving the accuracy and effectiveness of AES. Deep learning-based AES methods utilize artificial neural networks that mimic the human brain’s functioning through layered algorithms and computational units. Unlike conventional machine learning, deep learning autonomously learns from the environment and past errors without human intervention. This enables deep learning models to establish nonlinear correlations, resulting in higher accuracy. Recent advancements in deep learning have led to the development of transformers, which are particularly effective in learning text representations. Noteworthy examples include bidirectional encoder representations from transformers (BERT) (Devlin et al. 2019) and the generative pretrained transformer (GPT) (OpenAI).

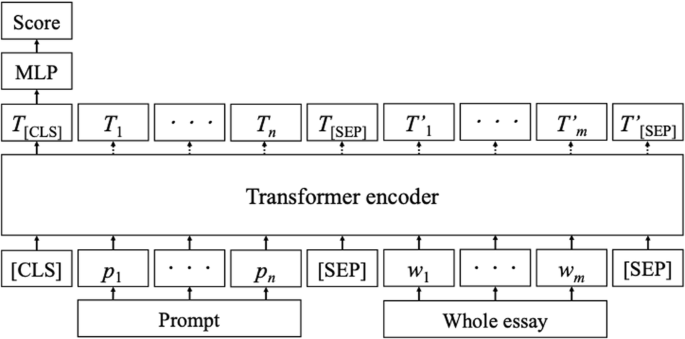

BERT is a language representation model that utilizes a transformer architecture and is trained on two tasks: masked language modeling and next-sentence prediction (Hirao et al. 2020; Vaswani et al. 2017). In the context of AES, BERT follows specific procedures, as illustrated in Fig. 1: (a) the tokenized prompts and essays are taken as input; (b) special tokens, such as [CLS] and [SEP], are added to mark the beginning and separation of prompts and essays; (c) the transformer encoder processes the prompt and essay sequences, resulting in hidden layer sequences; (d) the hidden layers corresponding to the [CLS] tokens (T[CLS]) represent distributed representations of the prompts and essays; and (e) a multilayer perceptron uses these distributed representations as input to obtain the final score (Hirao et al. 2020).

Fig. 1: AES system with BERT (Hirao et al. 2020).
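Steps (a), (b), and (e) of this procedure can be sketched in plain Python. The sketch makes several assumptions that are not from the paper: the function names are hypothetical, the closing [SEP] after the essay follows the usual BERT sentence-pair convention, the transformer encoder of steps (c) and (d) is not implemented, and the [CLS] vector and all weights are made-up numbers rather than trained values.

```python
import math


def build_input(prompt_tokens, essay_tokens):
    """Steps (a)-(b): join prompt and essay tokens with special tokens."""
    tokens = ["[CLS]"] + prompt_tokens + ["[SEP]"] + essay_tokens + ["[SEP]"]
    # Segment ids mark which part each token belongs to (0 = prompt, 1 = essay).
    segment_ids = [0] * (len(prompt_tokens) + 2) + [1] * (len(essay_tokens) + 1)
    return tokens, segment_ids


def mlp_score(cls_vector, w_hidden, b_hidden, w_out, b_out):
    """Step (e): a one-hidden-layer perceptron mapping T[CLS] to a score."""
    hidden = [
        math.tanh(sum(w * x for w, x in zip(row, cls_vector)) + b)
        for row, b in zip(w_hidden, b_hidden)
    ]
    return sum(w * h for w, h in zip(w_out, hidden)) + b_out


tokens, segment_ids = build_input(
    ["describe", "a", "trip"], ["i", "went", "home"]
)
print(tokens)
print(segment_ids)

# Placeholder for the encoder output at the [CLS] position (steps c-d);
# a real system would obtain this vector from the transformer encoder.
cls_vector = [0.5, -0.5]
score = mlp_score(
    cls_vector,
    w_hidden=[[0.0, 0.0]],  # made-up weights, not trained values
    b_hidden=[0.0],
    w_out=[1.0],
    b_out=2.0,
)
print(score)
```

In practice the encoder and the perceptron are trained together on essays with human scores, so the [CLS] representation becomes tuned to the scoring task.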