Essay Papers Writing Online

A comprehensive guide to crafting a successful comparison essay.

Comparison essays are a common assignment in academic settings, requiring students to compare and contrast two or more subjects, concepts, or ideas. Writing a comparison essay can be challenging, but with the right approach and guidance, you can craft a compelling and informative piece of writing.

In this comprehensive guide, we will provide you with valuable tips and examples to help you master the art of comparison essay writing. Whether you’re comparing two literary works, historical events, scientific theories, or any other topics, this guide will equip you with the tools and strategies needed to create a well-structured and persuasive essay.

From choosing a suitable topic and developing a strong thesis statement to organizing your arguments and incorporating effective evidence, this guide will walk you through each step of the writing process. By following the advice and examples provided here, you’ll be able to produce a top-notch comparison essay that showcases your analytical skills and critical thinking abilities.

Understanding the Basics

Before diving into writing a comparison essay, it’s essential to understand the basics of comparison writing. A comparison essay, also known as a comparative essay, requires you to analyze two or more subjects by highlighting their similarities and differences. This type of essay aims to show how these subjects are similar or different in various aspects.

When writing a comparison essay, you should have a clear thesis statement that identifies the subjects you are comparing and the main points of comparison. It’s essential to structure your essay effectively by organizing your ideas logically. You can use different methods of organization, such as the block method or point-by-point method, to present your comparisons.

Additionally, make sure to include evidence and examples to support your comparisons. Use specific details and examples to strengthen your arguments and clarify the similarities and differences between the subjects. Lastly, remember to provide a strong conclusion that summarizes your main points and reinforces the significance of your comparison.

Choosing a Topic for Comparison Essay

When selecting a topic for your comparison essay, it’s essential to choose two subjects that have some similarities and differences to explore. You can compare two books, two movies, two historical figures, two theories, or any other pair of related subjects.

Consider selecting topics that interest you or that you are familiar with to make the writing process more engaging and manageable. Additionally, ensure that the subjects you choose are suitable for comparison and have enough material for analysis.

It’s also helpful to brainstorm ideas and create a list of potential topics before making a final decision. Once you have a few options in mind, evaluate them based on the relevance of the comparison, the availability of credible sources, and your own interest in the subjects.

Remember that a well-chosen topic is one of the keys to writing a successful comparison essay, so take your time to select subjects that will allow you to explore meaningful connections and differences in a compelling way.

Finding the Right Pairing

When writing a comparison essay, it’s crucial to find the right pairing of subjects to compare. Choose subjects that have enough similarities and differences to make a meaningful comparison. Consider the audience and purpose of your essay to determine what pairing will be most effective.

Look for subjects that you are passionate about or have a deep understanding of. This will make the writing process easier and more engaging. Additionally, consider choosing subjects that are relevant and timely, as this will make your essay more interesting to readers.

Don’t be afraid to think outside the box when finding the right pairing. Sometimes unexpected combinations can lead to the most compelling comparisons. Conduct thorough research on both subjects to ensure you have enough material to work with and present a balanced comparison.

Structuring Your Comparison Essay

When writing a comparison essay, it is essential to organize your ideas in a clear and logical manner. One effective way to structure your essay is to use a point-by-point comparison or a block comparison format.

| Point-by-Point Comparison | Block Comparison |
|---|---|
| In this format, you discuss one point of comparison for both subjects before moving on to the next point. | In this format, you discuss all the points related to one subject before moving on to the next subject. |
| Allows for a more detailed analysis of each point of comparison. | Provides a straightforward structure that treats each subject as a complete unit. |
| Can be helpful when the subjects have multiple similarities and differences to explore. | May be easier to follow when readers are unfamiliar with one of the subjects and need a full picture of it first. |

Whichever format you choose, make sure to introduce your subjects, present your points of comparison, provide evidence or examples to support your comparisons, and conclude by summarizing the main points and highlighting the significance of your comparison.

Creating a Clear Outline

Before you start writing your comparison essay, it’s essential to create a clear outline. An outline serves as a roadmap that helps you stay organized and focused throughout the writing process. Here are some steps to create an effective outline:

1. Identify the subjects of comparison: Start by determining the two subjects you will be comparing in your essay. Make sure they have enough similarities and differences to make a meaningful comparison.

2. Brainstorm key points: Once you have chosen the subjects, brainstorm the key points you want to compare and contrast. These could include characteristics, features, themes, or arguments related to each subject.

3. Organize your points: Arrange your key points in a logical order. You can group all the points about one subject together before turning to the other, or alternate between the two subjects point by point to highlight their similarities and differences directly.

4. Develop a thesis statement: Based on your key points, develop a clear thesis statement that states the main purpose of your comparison essay. This statement should guide the rest of your writing and provide a clear direction for your argument.

5. Create a structure: Divide your essay into introduction, body paragraphs, and conclusion. Each section should serve a specific purpose and contribute to the overall coherence of your essay.

By creating a clear outline, you can ensure that your comparison essay flows smoothly and effectively communicates your ideas to the reader.

Engaging the Reader

When writing a comparison essay, it is crucial to engage the reader right from the beginning. You want to hook their attention and make them want to keep reading. Here are some tips to engage your reader:

- Start with a strong opening statement or question that entices the reader to continue reading.

- Use vivid language and descriptive imagery to paint a clear picture in the reader’s mind.

- Provide interesting facts or statistics that pique the reader’s curiosity.

- Create a compelling thesis statement that outlines the purpose of your comparison essay.

By engaging the reader from the start, you set the stage for a successful and impactful comparison essay that holds their attention until the very end.

Point-by-Point vs Block Method

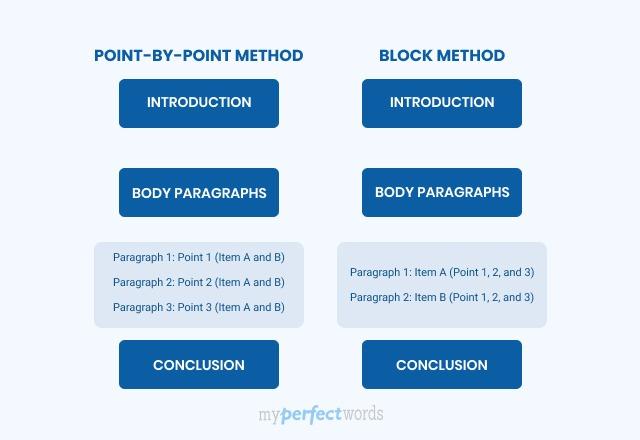

When writing a comparison essay, you have two main options for structuring your content: the point-by-point method and the block method. Each method has its own advantages and may be more suitable depending on the type of comparison you are making.

- Point-by-Point Method: This method involves discussing one point of comparison at a time between the two subjects. You will go back and forth between the subjects, highlighting similarities and differences for each point. This method allows for a more detailed and nuanced analysis of the subjects.

- Block Method: In contrast, the block method involves discussing all the points related to one subject first, followed by all the points related to the second subject. This method provides a more straightforward and organized comparison but may not delve as deeply into the individual points of comparison.

Ultimately, the choice between the point-by-point and block methods depends on the complexity of your comparison and the level of detail you want to explore. Experiment with both methods to see which one best suits your writing style and the specific requirements of your comparison essay.

Selecting the Best Approach

When it comes to writing a comparison essay, selecting the best approach is crucial to ensure a successful and effective comparison. There are several approaches you can take when comparing two subjects, including the block method and the point-by-point method.

The block method: This approach involves discussing all the points about the first subject, followed by a thorough discussion of the same points for the second subject. This method is useful when the two subjects being compared are quite different or when the reader may not be familiar with one of the subjects.

The point-by-point method: This approach involves alternating between the two subjects, addressing one point of comparison for both in each paragraph or section. This method allows for a more in-depth comparison of specific points and is often preferred when the two subjects share many similarities and differences.

Before selecting an approach, consider the nature of the subjects being compared and the purpose of your comparison essay. Choose the approach that will best serve your purpose and allow for a clear, organized, and engaging comparison.


Comparing and Contrasting

What this handout is about.

This handout will help you first to determine whether a particular assignment is asking for comparison/contrast and then to generate a list of similarities and differences, decide which similarities and differences to focus on, and organize your paper so that it will be clear and effective. It will also explain how you can (and why you should) develop a thesis that goes beyond “Thing A and Thing B are similar in many ways but different in others.”

Introduction

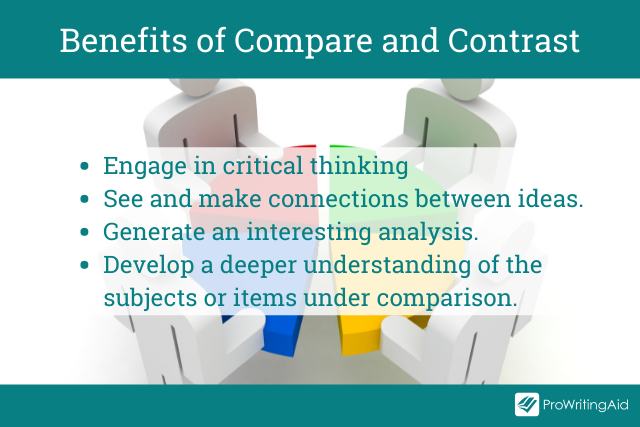

In your career as a student, you’ll encounter many different kinds of writing assignments, each with its own requirements. One of the most common is the comparison/contrast essay, in which you focus on the ways in which certain things or ideas—usually two of them—are similar to (this is the comparison) and/or different from (this is the contrast) one another. By assigning such essays, your instructors are encouraging you to make connections between texts or ideas, engage in critical thinking, and go beyond mere description or summary to generate interesting analysis: when you reflect on similarities and differences, you gain a deeper understanding of the items you are comparing, their relationship to each other, and what is most important about them.

Recognizing comparison/contrast in assignments

Some assignments use words—like compare, contrast, similarities, and differences—that make it easy for you to see that they are asking you to compare and/or contrast. Here are a few hypothetical examples:

- Compare and contrast Frye’s and Bartky’s accounts of oppression.

- Compare WWI to WWII, identifying similarities in the causes, development, and outcomes of the wars.

- Contrast Wordsworth and Coleridge; what are the major differences in their poetry?

Notice that some topics ask only for comparison, others only for contrast, and others for both.

But it’s not always so easy to tell whether an assignment is asking you to include comparison/contrast. And in some cases, comparison/contrast is only part of the essay—you begin by comparing and/or contrasting two or more things and then use what you’ve learned to construct an argument or evaluation. Consider these examples, noticing the language that is used to ask for the comparison/contrast and whether the comparison/contrast is only one part of a larger assignment:

- Choose a particular idea or theme, such as romantic love, death, or nature, and consider how it is treated in two Romantic poems.

- How do the different authors we have studied so far define and describe oppression?

- Compare Frye’s and Bartky’s accounts of oppression. What does each imply about women’s collusion in their own oppression? Which is more accurate?

- In the texts we’ve studied, soldiers who served in different wars offer differing accounts of their experiences and feelings both during and after the fighting. What commonalities are there in these accounts? What factors do you think are responsible for their differences?

You may want to check out our handout on understanding assignments for additional tips.

Using comparison/contrast for all kinds of writing projects

Sometimes you may want to use comparison/contrast techniques in your own pre-writing work to get ideas that you can later use for an argument, even if comparison/contrast isn’t an official requirement for the paper you’re writing. For example, if you wanted to argue that Frye’s account of oppression is better than both de Beauvoir’s and Bartky’s, comparing and contrasting the main arguments of those three authors might help you construct your evaluation—even though the topic may not have asked for comparison/contrast and the lists of similarities and differences you generate may not appear anywhere in the final draft of your paper.

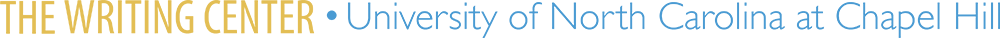

Discovering similarities and differences

Making a Venn diagram or a chart can help you quickly and efficiently compare and contrast two or more things or ideas. To make a Venn diagram, simply draw some overlapping circles, one circle for each item you’re considering. In the central area where they overlap, list the traits the items have in common. Assign each item one of the areas that doesn’t overlap; in those areas, you can list the traits that make the items different. Here’s a very simple example, using two pizza places:

To make a chart, figure out what criteria you want to focus on in comparing the items. Along the left side of the page, list each of the criteria. Across the top, list the names of the items. You should then have a box per item for each criterion; you can fill the boxes in and then survey what you’ve discovered.

Here’s an example, this time using three pizza places:

| | Pepper’s | Amante | Papa John’s |
|---|---|---|---|
| Location | | | |
| Price | | | |
| Delivery | | | |
| Ingredients | | | |
| Service | | | |
| Seating/eating in | | | |
| Coupons | | | |

As you generate points of comparison, consider the purpose and content of the assignment and the focus of the class. What do you think the professor wants you to learn by doing this comparison/contrast? How does it fit with what you have been studying so far and with the other assignments in the course? Are there any clues about what to focus on in the assignment itself?

Here are some general questions about different types of things you might have to compare. These are by no means complete or definitive lists; they’re just here to give you some ideas—you can generate your own questions for these and other types of comparison. You may want to begin by using the questions reporters traditionally ask: Who? What? Where? When? Why? How? If you’re talking about objects, you might also consider general properties like size, shape, color, sound, weight, taste, texture, smell, number, duration, and location.

Two historical periods or events

- When did they occur—do you know the date(s) and duration? What happened or changed during each? Why are they significant?

- What kinds of work did people do? What kinds of relationships did they have? What did they value?

- What kinds of governments were there? Who were important people involved?

- What caused events in these periods, and what consequences did they have later on?

Two ideas or theories

- What are they about?

- Did they originate at some particular time?

- Who created them? Who uses or defends them?

- What is the central focus, claim, or goal of each? What conclusions do they offer?

- How are they applied to situations/people/things/etc.?

- Which seems more plausible to you, and why? How broad is their scope?

- What kind of evidence is usually offered for them?

Two pieces of writing or art

- What are their titles? What do they describe or depict?

- What is their tone or mood? What is their form?

- Who created them? When were they created? Why do you think they were created as they were? What themes do they address?

- Do you think one is of higher quality or greater merit than the other(s)—and if so, why?

- For writing: what plot, characterization, setting, theme, tone, and type of narration are used?

Two people

- Where are they from? How old are they? What is the gender, race, class, etc. of each?

- What, if anything, are they known for? Do they have any relationship to each other?

- What are they like? What did/do they do? What do they believe? Why are they interesting?

- What stands out most about each of them?

Deciding what to focus on

By now you have probably generated a huge list of similarities and differences—congratulations! Next you must decide which of them are interesting, important, and relevant enough to be included in your paper. Ask yourself these questions:

- What’s relevant to the assignment?

- What’s relevant to the course?

- What’s interesting and informative?

- What matters to the argument you are going to make?

- What’s basic or central (and needs to be mentioned even if obvious)?

- Overall, what’s more important—the similarities or the differences?

Suppose that you are writing a paper comparing two novels. For most literature classes, the fact that they both use Caslon type (a kind of typeface, like the fonts you may use in your writing) is not going to be relevant, nor is the fact that one of them has a few illustrations and the other has none; literature classes are more likely to focus on subjects like characterization, plot, setting, the writer’s style and intentions, language, central themes, and so forth. However, if you were writing a paper for a class on typesetting or on how illustrations are used to enhance novels, the typeface and presence or absence of illustrations might be absolutely critical to include in your final paper.

Sometimes a particular point of comparison or contrast might be relevant but not terribly revealing or interesting. For example, if you are writing a paper about Wordsworth’s “Tintern Abbey” and Coleridge’s “Frost at Midnight,” pointing out that they both have nature as a central theme is relevant (comparisons of poetry often talk about themes) but not terribly interesting; your class has probably already had many discussions about the Romantic poets’ fondness for nature. Talking about the different ways nature is depicted or the different aspects of nature that are emphasized might be more interesting and show a more sophisticated understanding of the poems.

Your thesis

The thesis of your comparison/contrast paper is very important: it can help you create a focused argument and give your reader a road map so they don’t get lost in the sea of points you are about to make. As in any paper, you will want to replace vague reports of your general topic (for example, “This paper will compare and contrast two pizza places,” or “Pepper’s and Amante are similar in some ways and different in others,” or “Pepper’s and Amante are similar in many ways, but they have one major difference”) with something more detailed and specific. For example, you might say, “Pepper’s and Amante have similar prices and ingredients, but their atmospheres and willingness to deliver set them apart.”

Be careful, though—although this thesis is fairly specific and does propose a simple argument (that atmosphere and delivery make the two pizza places different), your instructor will often be looking for a bit more analysis. In this case, the obvious question is “So what? Why should anyone care that Pepper’s and Amante are different in this way?” One might also wonder why the writer chose those two particular pizza places to compare—why not Papa John’s, Domino’s, or Pizza Hut? Again, thinking about the context the class provides may help you answer such questions and make a stronger argument. Here’s a revision of the thesis mentioned earlier:

Pepper’s and Amante both offer a greater variety of ingredients than other Chapel Hill/Carrboro pizza places (and than any of the national chains), but the funky, lively atmosphere at Pepper’s makes it a better place to give visiting friends and family a taste of local culture.

You may find our handout on constructing thesis statements useful at this stage.

Organizing your paper

There are many different ways to organize a comparison/contrast essay. Here are two:

Subject-by-subject

Begin by saying everything you have to say about the first subject you are discussing, then move on and make all the points you want to make about the second subject (and after that, the third, and so on, if you’re comparing/contrasting more than two things). If the paper is short, you might be able to fit all of your points about each item into a single paragraph, but it’s more likely that you’d have several paragraphs per item. Using our pizza place comparison/contrast as an example, after the introduction, you might have a paragraph about the ingredients available at Pepper’s, a paragraph about its location, and a paragraph about its ambience. Then you’d have three similar paragraphs about Amante, followed by your conclusion.

The danger of this subject-by-subject organization is that your paper will simply be a list of points: a certain number of points (in my example, three) about one subject, then a certain number of points about another. This is usually not what college instructors are looking for in a paper—generally they want you to compare or contrast two or more things very directly, rather than just listing the traits the things have and leaving it up to the reader to reflect on how those traits are similar or different and why those similarities or differences matter. Thus, if you use the subject-by-subject form, you will probably want to have a very strong, analytical thesis and at least one body paragraph that ties all of your different points together.

A subject-by-subject structure can be a logical choice if you are writing what is sometimes called a “lens” comparison, in which you use one subject or item (which isn’t really your main topic) to better understand another item (which is). For example, you might be asked to compare a poem you’ve already covered thoroughly in class with one you are reading on your own. It might make sense to give a brief summary of your main ideas about the first poem (this would be your first subject, the “lens”), and then spend most of your paper discussing how those points are similar to or different from your ideas about the second.

Point-by-point

Rather than addressing things one subject at a time, you may wish to talk about one point of comparison at a time. There are two main ways this might play out, depending on how much you have to say about each of the things you are comparing. If you have just a little, you might, in a single paragraph, discuss how a certain point of comparison/contrast relates to all the items you are discussing. For example, I might describe, in one paragraph, what the prices are like at both Pepper’s and Amante; in the next paragraph, I might compare the ingredients available; in a third, I might contrast the atmospheres of the two restaurants.

If I had a bit more to say about the items I was comparing/contrasting, I might devote a whole paragraph to how each point relates to each item. For example, I might have a whole paragraph about the clientele at Pepper’s, followed by a whole paragraph about the clientele at Amante; then I would move on and do two more paragraphs discussing my next point of comparison/contrast—like the ingredients available at each restaurant.

There are no hard and fast rules about organizing a comparison/contrast paper, of course. Just be sure that your reader can easily tell what’s going on! Be aware, too, of the placement of your different points. If you are writing a comparison/contrast in service of an argument, keep in mind that the last point you make is the one you are leaving your reader with. For example, if I am trying to argue that Amante is better than Pepper’s, I should end with a contrast that leaves Amante sounding good, rather than with a point of comparison that I have to admit makes Pepper’s look better. If you’ve decided that the differences between the items you’re comparing/contrasting are most important, you’ll want to end with the differences—and vice versa, if the similarities seem most important to you.

Our handout on organization can help you write good topic sentences and transitions and make sure that you have a good overall structure in place for your paper.

Cue words and other tips

To help your reader keep track of where you are in the comparison/contrast, you’ll want to be sure that your transitions and topic sentences are especially strong. Your thesis should already have given the reader an idea of the points you’ll be making and the organization you’ll be using, but you can help them out with some extra cues. The following words may be helpful to you in signaling your intentions:

- like, similar to, also, unlike, similarly, in the same way, likewise, again, compared to, in contrast, in like manner, contrasted with, on the contrary, however, although, yet, even though, still, but, nevertheless, conversely, at the same time, regardless, despite, while, on the one hand … on the other hand.

For example, you might have a topic sentence like one of these:

- Compared to Pepper’s, Amante is quiet.

- Like Amante, Pepper’s offers fresh garlic as a topping.

- Despite their different locations (downtown Chapel Hill and downtown Carrboro), Pepper’s and Amante are both fairly easy to get to.

You may reproduce this handout for non-commercial use if you use the entire handout and attribute the source: The Writing Center, University of North Carolina at Chapel Hill


10.7 Comparison and Contrast

Learning objectives.

- Determine the purpose and structure of comparison and contrast in writing.

- Explain organizational methods used when comparing and contrasting.

- Understand how to write a compare-and-contrast essay.

The Purpose of Comparison and Contrast in Writing

Comparison in writing discusses elements that are similar, while contrast in writing discusses elements that are different. A compare-and-contrast essay, then, analyzes two subjects by comparing them, contrasting them, or both.

The key to a good compare-and-contrast essay is to choose two or more subjects that connect in a meaningful way. The purpose of conducting the comparison or contrast is not to state the obvious but rather to illuminate subtle differences or unexpected similarities. For example, if you wanted to focus on contrasting two subjects, you would not pick apples and oranges; rather, you might choose to compare and contrast two types of oranges or two types of apples to highlight subtle differences. Red Delicious apples, for instance, are sweet, while Granny Smiths are tart and acidic. Drawing distinctions between elements in a similar category will increase the audience’s understanding of that category, which is the purpose of the compare-and-contrast essay.

Similarly, to focus on comparison, choose two subjects that seem at first to be unrelated. For a comparison essay, you likely would not choose two apples or two oranges because they share so many of the same properties already. Rather, you might try to compare how apples and oranges are quite similar. The more divergent the two subjects initially seem, the more interesting a comparison essay will be.

Writing at Work

Comparing and contrasting is also an evaluative tool. In order to make accurate evaluations about a given topic, you must first know the critical points of similarity and difference. Comparing and contrasting is a primary tool for many workplace assessments. You have likely compared and contrasted yourself to other colleagues. Employee advancements, pay raises, hiring, and firing are typically conducted using comparison and contrast. Comparison and contrast could be used to evaluate companies, departments, or individuals.

Brainstorm an essay that leans toward contrast. Choose one of the following three categories. Pick two examples from that category. Then come up with one similarity and three differences between the examples.

- Romantic comedies

- Internet search engines

- Cell phones

Brainstorm an essay that leans toward comparison. Choose one of the following three items. Then come up with one difference and three similarities.

- Department stores and discount retail stores

- Fast food chains and fine dining restaurants

- Dogs and cats

The Structure of a Comparison and Contrast Essay

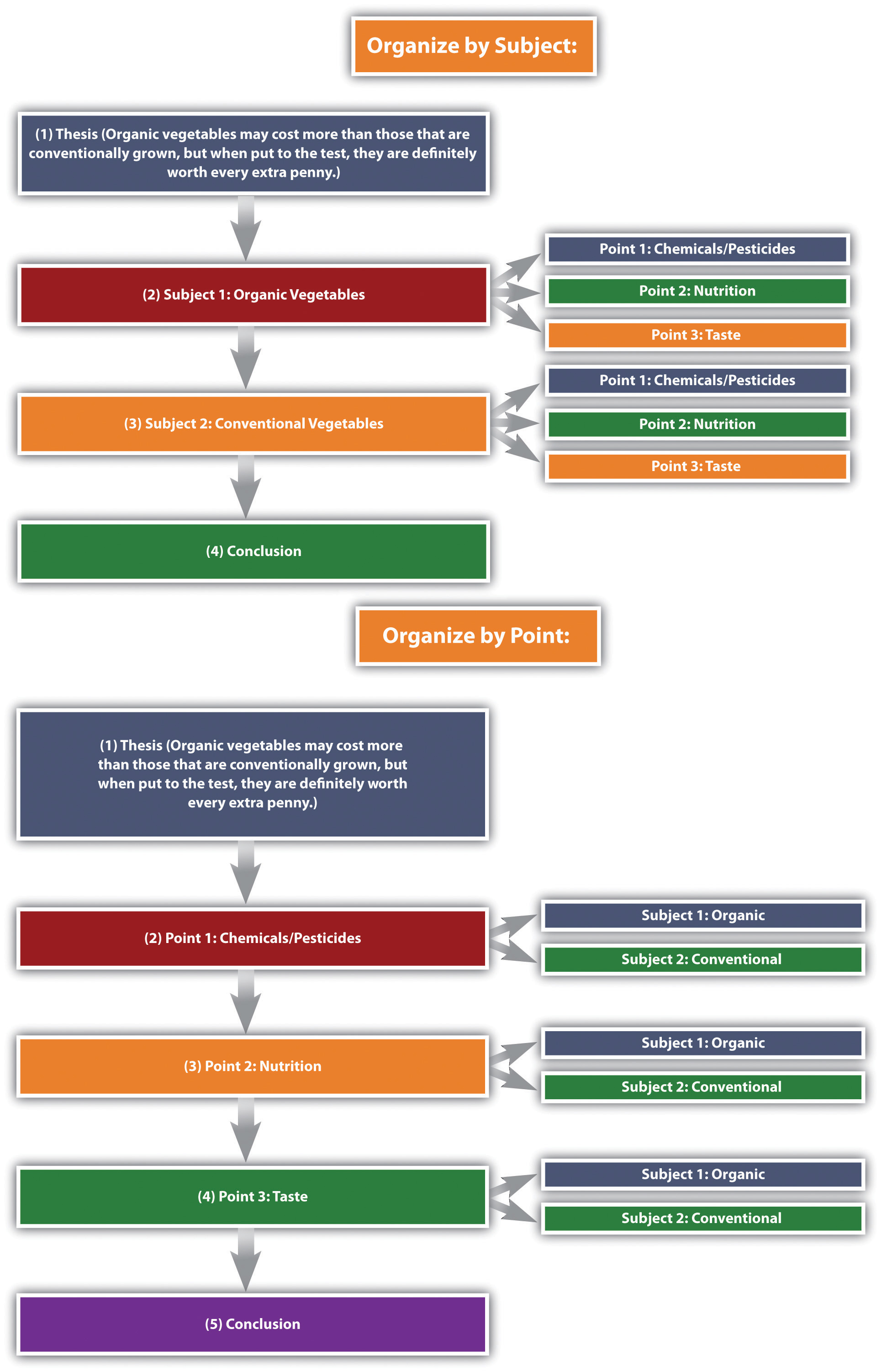

The compare-and-contrast essay starts with a thesis that clearly states the two subjects that are to be compared, contrasted, or both and the reason for doing so. The thesis could lean more toward comparing, contrasting, or both. Remember, the point of comparing and contrasting is to provide useful knowledge to the reader. Take the following thesis as an example that leans more toward contrasting.

Thesis statement: Organic vegetables may cost more than those that are conventionally grown, but when put to the test, they are definitely worth every extra penny.

Here the thesis sets up the two subjects to be compared and contrasted (organic versus conventional vegetables), and it makes a claim about the results that might prove useful to the reader.

You may organize compare-and-contrast essays in one of the following two ways:

- According to the subjects themselves, discussing one then the other

- According to individual points, discussing each subject in relation to each point

See Figure 10.1 “Comparison and Contrast Diagram”, which diagrams the ways to organize our organic versus conventional vegetables thesis.

Figure 10.1 Comparison and Contrast Diagram

The organizational structure you choose depends on the nature of the topic, your purpose, and your audience.

Given that compare-and-contrast essays analyze the relationship between two subjects, it is helpful to have some phrases on hand that will cue the reader to such analysis. See Table 10.3 “Phrases of Comparison and Contrast” for examples.

Table 10.3 Phrases of Comparison and Contrast

| Comparison | Contrast |
|---|---|
| one similarity | one difference |
| another similarity | another difference |
| both | conversely |
| like | in contrast |
| likewise | unlike |
| similarly | while |
| in a similar fashion | whereas |

Create an outline for each of the items you chose in Note 10.72 “Exercise 1” and Note 10.73 “Exercise 2”. Use the point-by-point organizing strategy for one of them, and use the subject organizing strategy for the other.

Writing a Comparison and Contrast Essay

First choose whether you want to compare seemingly disparate subjects, contrast seemingly similar subjects, or compare and contrast subjects. Once you have decided on a topic, introduce it with an engaging opening paragraph. Your thesis should come at the end of the introduction, and it should establish the subjects you will compare, contrast, or both as well as state what can be learned from doing so.

The body of the essay can be organized in one of two ways: by subject or by individual points. The organizing strategy that you choose will depend on, as always, your audience and your purpose. You may also consider your particular approach to the subjects as well as the nature of the subjects themselves; some subjects might better lend themselves to one structure or the other. Make sure to use comparison and contrast phrases to cue the reader to the ways in which you are analyzing the relationship between the subjects.

After you finish analyzing the subjects, write a conclusion that summarizes the main points of the essay and reinforces your thesis. See Chapter 15 “Readings: Examples of Essays” to read a sample compare-and-contrast essay.

Many business presentations are conducted using comparison and contrast. The organizing strategies (by subject or by individual points) can also be used to organize a presentation. Keep this in mind as a way of organizing your content the next time you or a colleague has to present something at work.

Choose one of the outlines you created in Note 10.75 “Exercise 3”, and write a full compare-and-contrast essay. Be sure to include an engaging introduction, a clear thesis, well-defined and detailed paragraphs, and a fitting conclusion that ties everything together.

Key Takeaways

- A compare-and-contrast essay analyzes two subjects by either comparing them, contrasting them, or both.

- The purpose of writing a comparison or contrast essay is not to state the obvious but rather to illuminate subtle differences or unexpected similarities between two subjects.

- The thesis should clearly state the subjects that are to be compared, contrasted, or both, and it should state what is to be learned from doing so.

There are two main organizing strategies for compare-and-contrast essays.

- Organize by the subjects themselves, one then the other.

- Organize by individual points, in which you discuss each subject in relation to each point.

- Use phrases of comparison or phrases of contrast to signal to readers how exactly the two subjects are being analyzed.

Writing for Success Copyright © 2015 by University of Minnesota is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.


Comparing and Contrasting: A Guide to Improve Your Essays

Walter Akolo

Essays that require you to compare and contrast two or more subjects, ideas, places, or items are common.

They call for you to highlight the key similarities (compare) and differences (contrast) between them.

This guide contains all the information you need to become better at writing comparing and contrasting essays.

This includes: how to structure your essay, how to decide on the content, and some examples of essay questions.

Let’s dive in.

What Is Comparing and Contrasting?

- Is compare and contrast the same as similarities and differences?

- What is the purpose of comparing and contrasting?

- Can you compare and contrast any two items?

- How do you compare and contrast in writing?

- What are some comparing and contrasting techniques?

- How do you compare and contrast in college-level writing?

- The four essentials of compare and contrast essays

- What can you learn from a compare and contrast essay?

At their most basic, both comparing and contrasting base their evaluation on two or more subjects that share a connection.

The subjects could have similar characteristics, features, or foundations.

But while a comparison discusses the similarities of the two subjects, e.g. a banana and a watermelon are both fruit, contrasting highlights how the subjects or items differ from each other, e.g. a watermelon is around 10 times larger than a banana.

Almost any question you are asked in education can support a variety of interesting comparisons and deductions.

Comparing is the same as identifying similarities.

Contrasting is the same as identifying differences.

This is because comparing identifies the likeness between two subjects, items, or categories, while contrasting recognizes the disparities between them.

When you compare things, you describe them in terms of their similarities; when you contrast things, you define them in terms of their differences.

As a result, if you are asked to discuss the similarities and differences between two subjects, you can take the same approach you would for a compare and contrast essay.

In writing, the purpose of comparing and contrasting is to highlight subtle but important differences or similarities that might not be immediately obvious.

By illustrating the differences between elements in a similar category, you help heighten readers’ understanding of the subject or topic of discussion.

For instance, you might choose to compare and contrast red wine and white wine by pointing out the subtle differences. One of these differences is that red wine is best served at room temperature while white is best served chilled.

Also, comparing and contrasting helps to make abstract ideas more definite and minimizes the confusion that might exist between two related concepts.

Can Comparing and Contrasting Be Useful Outside of Academia?

Comparing enables you to see the pros and cons, allowing you to have a better understanding of the things under discussion. In an essay, this helps you demonstrate that you understand the nuances of your topic enough to draw meaningful conclusions from them.

Let's use a real-world example to see the benefits. Imagine you're contrasting two dresses you could buy. You might think:

- Dress A is purple, my favorite color, but it has a difficult zip and is practically impossible to match a jacket to.

- Dress B is more expensive, but I already have suitable shoes and a jacket to go with it, and it is easier to move in.

You're linking the qualities of each dress to the context of the decision you're making. This is the same for your essay. Your comparison and contrast points will be in relation to the question you need to answer.

Comparing and contrasting is only a useful technique when applied to two related concepts.

To effectively compare two or more things, they must feature characteristics similar enough to warrant comparison.

In addition, they must share a similarity that generates an interesting discussion. But what do I mean by “interesting” here?

Let’s look at two concepts: the Magna Carta and my third-grade poetry competition entry.

They are both texts written on paper by a person, so they fulfil the first requirement: they have a similarity. But this comparison clearly would not fulfil the second requirement, because you would not be able to draw any interesting conclusions.

However, if we compare the Magna Carta to the Bill of Rights, you would be able to come to some very interesting conclusions concerning the history of world politics.

To write a good compare and contrast essay, it’s best to pick two or more topics that share a meaningful connection.

The aim of the essay would be to show the subtle differences or unforeseen similarities.

By highlighting the distinctions between elements in a similar category you can increase your readers’ understanding.

Alternatively, you could choose to focus on a comparison between two subjects that initially appear unrelated.

The more dissimilar they seem, the more interesting the comparison essay will turn out.

For instance, you could compare and contrast professional rugby players with marathon runners.

Can You Compare and Contrast in an Essay That Does Not Specifically Require It?

As a writer, you can employ comparing and contrasting techniques in your writing, particularly when looking for ideas you can later apply in your argument.

You can do this even when the comparison or contrast is not a requirement for the topic or argument you are presenting. Doing so could enable you to build your evaluation and develop a stronger argument.

Note that the similarities and differences you come up with might not even show up in the final draft.

While the use of compare and contrast can be neutral, you can also use it to highlight one option under discussion. When used this way, you can influence the perceived advantages of your preferred option.

As a writing style, comparing and contrasting can encompass an entire essay. However, it could also appear in some select paragraphs within the essay, where making some comparisons serves to better illustrate a point.

What Should You Do First?

Before you compare two things, always start by deciding on the reason for your comparison, then outline the criteria you will use to compare them.

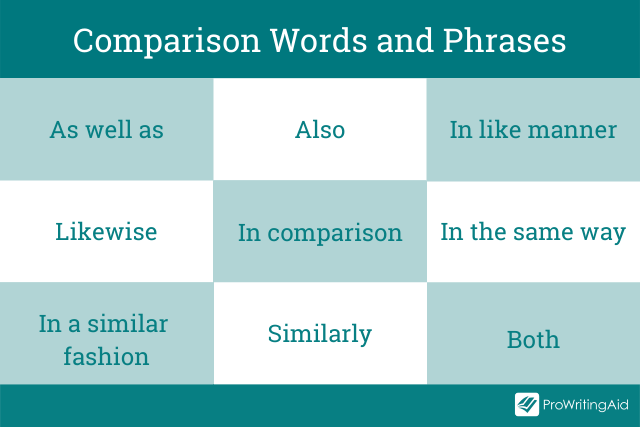

Words and phrases commonly used for comparison include:

In writing, these words and phrases are called transitions. They help readers to understand or make the connection between sentences, paragraphs, and ideas.

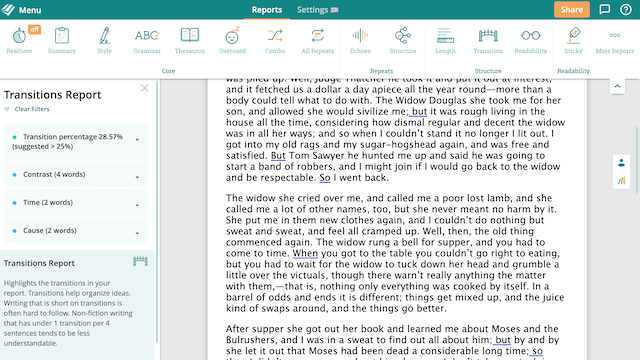

Without transition words, writing can feel clumsy and disjointed, making it difficult to read. ProWritingAid’s Transitions Report highlights all of a document’s transitions and suggests that 25% of the sentences in a piece should include a transition.


So, how do you form all of this into a coherent essay? It's a good idea to plan first, then decide what your paragraph layout will look like.

Venn diagrams are a useful tool for generating ideas. Then, for your essay, you need to choose between going subject by subject and going point by point.

Using a Venn Diagram

A Venn diagram helps you to clearly see the similarities and differences between multiple objects, things, or subjects.

The tool consists of two or more overlapping circles: you list the things that are alike in the overlapping area and the things that differ outside it.

It’s great for brainstorming ideas and for creating your essay’s outline. You could even use it in an exam setting because it is quick and simple.

Going Subject by Subject

Going subject by subject is a structural choice for your essay.

Start by saying all you have to say on the first subject, then proceed to do the same about the second subject.

Depending on the length of your essay, you can fit the points about each subject into one paragraph or write several sections for each subject, ending with a conclusion.

This method is best for short essays on simple topics. Most university-level essays will go point by point instead.

Going Point by Point

Going point by point, or alternating, is the opposite essay structure from going subject by subject. This is ideal when you want to do more direct comparing and contrasting. It entails discussing one comparison point at a time. It allows you to use a paragraph to talk about how a certain comparing/contrasting point relates to the subjects or items you are discussing.

Alternatively, if you have lots of details about the subject, you might decide to use a paragraph for each point.

An academic compare and contrast essay looks at two or more subjects, ideas, people, or objects, compares their similarities, and contrasts their differences.

It’s an informative essay that provides insights into what is similar and different between the items.

Depending on the essay’s instructions, you can focus solely on comparing or contrasting, or a combination of the two.

Examples of College Level Compare and Contrast Essay Questions

Here are eleven examples of compare and contrast essay questions that you might encounter at university:

- Archaeology: Compare and contrast the skulls of Homo habilis, Homo erectus, and Homo sapiens.

- Art: Compare and contrast the working styles of any two Neoclassic artists.

- Astrophysics: Compare and contrast the chemical composition of Venus and Neptune.

- Biology: Compare and contrast the theories of Lamarck and Darwin.

- Business: Compare and contrast two or more business models within the agricultural industry.

- Creative writing: Compare and contrast free indirect discourse with epistolary styles.

- English Literature: Compare and contrast William Wordsworth with Robert Browning.

- Geography: Compare and contrast the benefit of solar panels with the benefit of wind turbines.

- History: Compare and contrast WWI to WWII with specific reference to the causes and outcomes.

- Medicine: Compare and contrast England’s health service with America’s health service.

- Psychology: Compare and contrast the behaviorist theory with the psychodynamic theory.

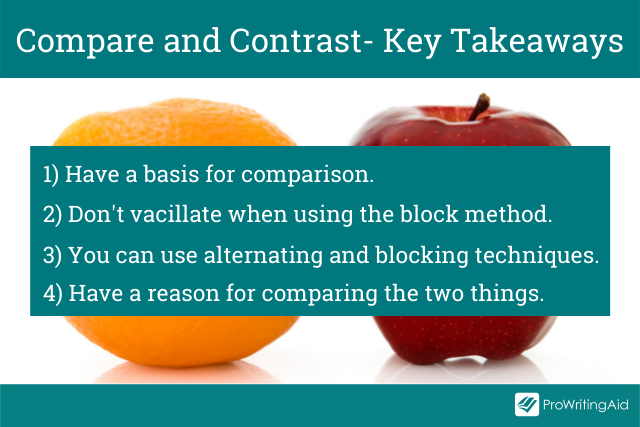

So, the key takeaways to keep in mind are:

Have a basis for comparison. The two things need to have enough in common to justify a discussion about their similarities and disparities.

Don’t go back and forth when using the block method. Instead, begin with a paragraph discussing all the facets of the first subject, then move on to another paragraph and talk through all the aspects of the second subject.

You can use both alternating and blocking techniques. For example, you can apply the alternating method in some paragraphs, then switch to the block method in others. Combining the two approaches can help you offer a deeper analysis of the subjects.

Have a reason for comparing the two things. Only select the points of comparison that resonate with your purpose.

Comparing and contrasting are essential analytical skills in academic writing. When your professor assigns you such an essay, their primary goal is to teach you how to:

- Engage in critical thinking

- See and make connections between words or ideas

- Move beyond mere description or summary to develop interesting analysis

- Get a deeper understanding of the subjects or items under comparison, their key features, and their interrelationships with each other.

Ultimately, your essay should enlighten readers by providing useful information.


Walter Akolo is a freelance writer, internet marketer, trainer, and blogger for hire. He loves helping businesses increase their reach and conversion through excellent and engaging content. He has gotten millions of pageviews on his blog, FreelancerKenya, where he mentors writers. Check out his website walterakolo.com.


Writing for Success: Compare/Contrast

LEARNING OBJECTIVES

This section will help you determine the purpose and structure of comparison/contrast in writing.

The Purpose of Compare/Contrast in Writing

Comparison in writing discusses elements that are similar, while contrast in writing discusses elements that are different. A compare-and-contrast essay, then, analyzes two subjects by comparing them, contrasting them, or both.

The key to a good compare-and-contrast essay is to choose two or more subjects that connect in a meaningful way. The purpose of conducting the comparison or contrast is not to state the obvious but rather to illuminate subtle differences or unexpected similarities. For example, if you wanted to focus on contrasting two subjects, you would not pick apples and oranges; rather, you might choose to compare and contrast two types of oranges or two types of apples to highlight subtle differences. For instance, Red Delicious apples are sweet, while Granny Smiths are tart and acidic. Drawing distinctions between elements in a similar category will increase the audience’s understanding of that category, which is the purpose of the compare-and-contrast essay.

Similarly, to focus on comparison, choose two subjects that seem at first to be unrelated. For a comparison essay, you likely would not choose two apples or two oranges because they share so many of the same properties already. Rather, you might try to compare how apples and oranges are quite similar. The more divergent the two subjects initially seem, the more interesting a comparison essay will be.


Compare/Contrast Essay Example

Comparing and Contrasting London and Washington, DC

By Scott McLean in Writing for Success

Both Washington, DC, and London are capital cities of English-speaking countries, and yet they offer vastly different experiences to their residents and visitors. Comparing and contrasting the two cities based on their history, their culture, and their residents shows how different and similar the two are.

Both cities are rich in world and national history, though they developed on very different time lines. London, for example, has a history that dates back over two thousand years. It was part of the Roman Empire and known by the similar name, Londinium. It was not only one of the northernmost points of the Roman Empire but also the epicenter of the British Empire where it held significant global influence from the early sixteenth century on through the early twentieth century. Washington, DC, on the other hand, has only formally existed since the late eighteenth century. Though Native Americans inhabited the land several thousand years earlier, and settlers inhabited the land as early as the sixteenth century, the city did not become the capital of the United States until the 1790s. From that point onward to today, however, Washington, DC, has increasingly maintained significant global influence. Even though both cities have different histories, they have both held, and continue to hold, significant social influence in the economic and cultural global spheres.

Both Washington, DC, and London offer a wide array of museums that harbor many of the world’s most prized treasures. While Washington, DC, has the National Gallery of Art and several other Smithsonian galleries, London’s art scene and galleries have a definite edge in this category. From the Tate Modern to the British National Gallery, London’s art ranks among the world’s best. This difference and advantage has much to do with London and Britain’s historical depth compared to that of the United States. London has a much richer past than Washington, DC, and consequently has a lot more material to pull from when arranging its collections. Both cities have thriving theater districts, but again, London wins this comparison, too, both in quantity and quality of theater choices. With regard to other cultural places like restaurants, pubs, and bars, both cities are very comparable. Both have a wide selection of expensive, elegant restaurants as well as a similar number of global and national chains. While London may be better known for its pubs and taste in beer, DC offers a different bar-going experience. With clubs and pubs that tend to stay open later than their British counterparts, DC nightlife tends to be less reserved overall.

Both cities also share and differ in cultural diversity and cost of living. Both cities have a very high cost of living, both in terms of housing and shopping. A downtown one-bedroom apartment in DC can easily cost $1,800 per month, and a similar “flat” in London may double that amount. These high costs create socioeconomic disparity among the residents. Although both cities’ residents are predominantly wealthy, both have a significantly large population of poor and homeless. Perhaps the most significant difference between the resident demographics is the racial makeup. Washington, DC, is a “minority majority” city, which means the majority of its citizens are races other than white. In 2009, according to the US Census, 55 percent of DC residents were classified as “Black or African American” and 35 percent of its residents were classified as “white.” London, by contrast, has very few minorities: in 2006, 70 percent of its population was “white,” while only 10 percent was “black.” The racial demographic differences between the cities are drastic.

Even though Washington, DC, and London are major capital cities of English-speaking countries in the Western world, they have many differences along with their similarities. They have vastly different histories, art cultures, and racial demographics, but they remain similar in their cost of living and socioeconomic disparity.


- Provided by: Lumen Learning. Located at: http://lumenlearning.com/. License: CC BY-NC-SA: Attribution-NonCommercial-ShareAlike

- Successful Writing. Provided by: Anonymous. Located at: http://2012books.lardbucket.org/books/successful-writing/s14-07-comparison-and-contrast.html. License: CC BY-NC-SA: Attribution-NonCommercial-ShareAlike

- Comparing and Contrasting London and Washington, DC. Authored by: Scott McLean. Located at: http://2012books.lardbucket.org/books/successful-writing/s14-07-comparison-and-contrast.html. License: CC BY-NC-SA: Attribution-NonCommercial-ShareAlike



How to Write a Comparative Essay – A Complete Guide


The comparative essay is a common assignment for school and college students, but many students are not aware of the complexities of crafting a strong one.

If you too are struggling with this, don't worry!

In this blog, you will get a complete writing guide for comparative essay writing. From structuring formats to creative topics, this guide has it all.

So, keep reading!

1. What is a Comparative Essay?

2. Comparative Essay Structure

3. How to Start a Comparative Essay?

4. How to Write a Comparative Essay?

5. Comparative Essay Examples

6. Comparative Essay Topics

7. Tips for Writing a Good Comparative Essay

8. Transition Words for Comparative Essays

What is a Comparative Essay?

A comparative essay is a type of essay in which the writer compares two or more items. Depending on the assignment, the writer examines related subjects in terms of their similarities, their differences, or both.

The main purpose of the comparative essay is to:

- Highlight the similarities and differences in a systematic manner.

- Give readers a clearer understanding of the subjects.

- Analyze two things and describe their advantages and drawbacks.

A comparative essay is also known as a compare and contrast essay or a comparison essay. It analyzes two subjects by either comparing them, contrasting them, or both. A Venn diagram is a useful tool for planning a paper that compares two subjects.

Moreover, a comparative analysis essay discusses the similarities and differences of themes, items, events, views, places, concepts, etc. For example, you can compare two different novels (e.g., The Adventures of Huckleberry Finn and The Red Badge of Courage).

However, a comparative essay is not limited to specific topics. It can cover almost any subjects that are related in some way.

Comparative Essay Structure

A good comparative essay depends on how well you structure it. A clear structure helps the reader understand your essay better.

Structure matters as much as content, because you need to organize your essay so that the reader can easily follow the comparisons you make.

The following are the two main methods in which you can organize your comparative essay.

Point-by-Point Method

The point-by-point or alternating method provides a detailed overview of the items that you are comparing. In this method, organize items in terms of similarities and differences.

This method makes it easier to handle two very different subjects during the writing phase. It is well suited to essays that require depth and detail.

Below given is the structure of the point-by-point method.


Block Method

The block method is easier than the point-by-point method. In this method, you divide the information by subject. The first paragraph or block discusses the first subject and all of its points, the second discusses the second subject, and so on.

However, make sure that you discuss the points for each subject in the same order. This method is best for lengthy essays and complicated subjects.

Here is the structure of the block method.

Keep these methods in mind and choose the one that best suits your subject.

Mixed Paragraphs Method

In this method, each paragraph explains one aspect of the subjects, so you handle one point at a time. This method is beneficial because it allows you to give equal weight to each subject. It also helps readers identify each point of comparison easily.

How to Start a Comparative Essay?

Here are some steps you should follow to start a well-written comparative essay.

Choose a Topic

The foremost step in writing a comparative essay is to choose a suitable topic.

Choose a topic or theme that is interesting to write about and appeals to the reader.

An interesting topic motivates the reader to learn about the subject. Also, try to avoid overly complicated topics for your comparative essay.

Develop a List of Similarities and Differences

Create a list of the similarities and differences between the two subjects that you want to include in the essay. This list serves as your initial plan and helps you decide the basis of your comparison.

Evaluate the list and establish your argument and thesis statement.

Establish the Basis for Comparison

The basis for comparison is the ground for you to compare the subjects. In most cases, it is assigned to you, so check your assignment or prompt.

The main goal of a comparative essay is to tell the reader something interesting. This means your comparison should offer a distinctive angle that makes your argument worth reading.

Do the Research

In this step, you gather information about your subjects. If your comparative essay is about social issues, historical events, or science-related topics, you must do in-depth research.

Make sure that you gather data from credible sources and cite them properly in the essay.

Create an Outline

An essay outline serves as a roadmap for your essay, organizing key elements into a structured format.

With your topic, list of comparisons, basis for comparison, and research in hand, the next step is to create a comprehensive outline.

Here is a standard comparative essay outline:


How to Write a Comparative Essay?

Now that you have the basic information organized in an outline, you can get started on the writing process.

Here are the essential parts of a comparative essay:

Comparative Essay Introduction

Start off by grabbing your reader's attention in the introduction. Use something catchy, like a quote, question, or interesting fact about your subjects.

Then, give a quick background so your reader knows what's going on.

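The most important part is your thesis statement, where you state the main argument, the basis for comparison, and why the comparison is significant.

A typical thesis statement for a comparative essay names both subjects, the basis for comparison, and the main claim. For example, an essay on summer and winter might state: "Although summer and winter differ sharply in weather and typical activities, both seasons shape how people plan their daily routines."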

Comparative Essay Body Paragraphs

The body paragraphs are where you really get into the details of your subjects. Each paragraph should focus on one thing you're comparing.

Start by discussing the first point of comparison, then move on to the next points. Make sure to cover two or three differences to give a complete picture.

After that, switch gears and discuss what the subjects have in common. Just as you covered two or three differences, try to cover a matching number of similarities.

This way, your essay stays balanced and fair. This approach helps your reader understand both the ways your subjects are different and the ways they are similar. Keep it simple and clear for a strong essay.

Comparative Essay Conclusion

In your conclusion, bring together the key insights from your analysis to create a strong and impactful closing.

Consider the broader context or implications of the subjects' differences and similarities. What do these insights reveal about the broader themes or ideas you're exploring?

Restate your thesis, avoid introducing new information, and end with a thought-provoking statement that leaves a lasting impression.

Below is a detailed comparative essay template to help you understand the format.

Comparative Essay Format

Comparative Essay Examples

Have a look at these comparative essay examples (PDF) to get an idea of what a strong essay looks like.

Comparative Essay on Summer and Winter

Comparative Essay on Books vs. Movies

Comparative Essay Sample

Comparative Essay Thesis Example

Comparative Essay on Football vs Cricket

Comparative Essay on Pet and Wild Animals

Comparative Essay Topics

Comparative essay topics do not need to be difficult or complex. Check this list of essay topics and pick the one you want to write about.

- How do education and employment compare?

- Living in a big city or staying in a village.

- The school principal or college dean.

- New Year vs. Christmas celebration.

- Dried fruit vs. fresh fruit: which is better?

- Similarities between philosophy and religion.

- British colonization and Spanish colonization.

- Nuclear power for peace or war?

- Bacteria or viruses.

- Fast food vs. homemade food.

Tips for Writing A Good Comparative Essay

Writing a compelling comparative essay requires thoughtful consideration and strategic planning. Here are some valuable tips to enhance the quality of your comparative essay:

- Clearly define what you're comparing, like themes or characters.

- Plan your essay structure using methods like point-by-point or block paragraphs.

- Craft an introduction that introduces subjects and states your purpose.

- Ensure an equal discussion of both similarities and differences.

- Use linking words for seamless transitions between paragraphs.

- Gather credible information for depth and authenticity.

- Use clear and simple language, avoiding unnecessary jargon.

- Dedicate each paragraph to a specific point of comparison.

- Summarize key points, restate the thesis, and emphasize significance.

- Thoroughly check for clarity, coherence, and correct any errors.

Transition Words For Comparative Essays

Transition words are crucial for guiding your reader through the comparative analysis. They help establish connections between ideas and ensure a smooth flow in your essay.

Here are some transition words and phrases to improve the flow of your comparative essay:

Transition Words for Similarities

- Correspondingly

- In the same vein

- In like manner

- In a similar fashion

- In tandem with

Transition Words for Differences

- On the contrary

- In contrast

- Nevertheless

- In spite of

- Notwithstanding

- On the flip side

- In contradistinction

Check out this blog listing more transition words that you can use to enhance your essay’s coherence!

In conclusion, now that you have the important steps and helpful tips to write a good comparative essay, you can start working on your own essay.


Frequently Asked Questions

How long is a comparative essay?

A comparative essay is typically 4-5 pages long, but the length depends on your chosen idea and topic.

How do you end a comparative essay?

Here are some tips to help you end a comparative essay:

- Restate the thesis statement

- Wrap up the entire essay

- Highlight the main points


Dr. Barbara is a highly experienced writer and author who holds a Ph.D. degree in public health from an Ivy League school. She has worked in the medical field for many years, conducting extensive research on various health topics. Her writing has been featured in several top-tier publications.

Paper Due? Why Suffer? That’s our Job!

Keep reading

- I nfographics

- Show AWL words

- Subscribe to newsletter

- What is academic writing?

- Academic Style

- What is the writing process?

- Understanding the title

- Brainstorming

- Researching

- First draft

- Proofreading

- Report writing

- Compare & contrast

- Cause & effect

- Problem-solution

- Classification

- Essay structure

- Introduction

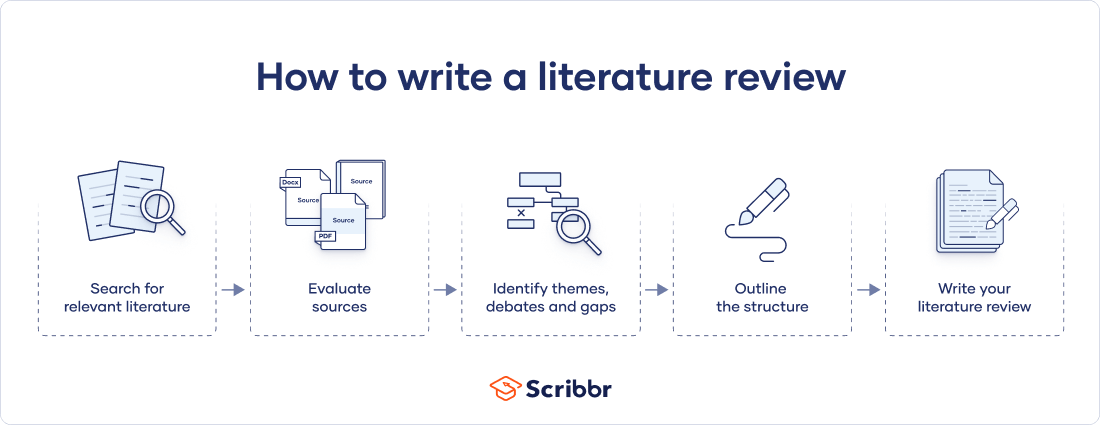

- Literature review

- Book review

- Research proposal

- Thesis/dissertation

- What is cohesion?

- Cohesion vs coherence

- Transition signals

- What are references?

- In-text citations

- Reference sections

- Reporting verbs

- Band descriptors

Show AWL words on this page.

Levels 1-5: grey Levels 6-10: orange

Show sorted lists of these words.

| --> |

Any words you don't know? Look them up in the website's built-in dictionary .

|

|

Choose a dictionary . Wordnet OPTED both

Compare & Contrast Essays

How things are similar or different

Compare and contrast is a common form of academic writing, either as an essay type on its own, or as part of a larger essay which includes one or more paragraphs which compare or contrast. This page gives information on what a compare and contrast essay is, how to structure this type of essay, how to use compare and contrast structure words, and how to make sure you use appropriate criteria for comparison/contrast. There is also an example compare and contrast essay on the topic of communication technology, as well as some exercises to help you practice this area.

What are compare & contrast essays?


To compare is to examine how things are similar, while to contrast is to see how they differ. A compare and contrast essay therefore looks at the similarities of two or more objects, and the differences. This essay type is common at university, where lecturers frequently test your understanding by asking you to compare and contrast two theories, two methods, two historical periods, two characters in a novel, etc. Sometimes the whole essay will compare and contrast, though sometimes the comparison or contrast may be only part of the essay. It is also possible, especially for short exam essays, that only the similarities or the differences, not both, will be discussed. See the examples below.

- Compare and contrast Newton's ideas of gravity with those proposed by Einstein ['compare and contrast' essay]

- Examine how the economies of Spain and China are similar ['compare' only essay]

- Explain the differences between the Achaemenid Empire and the Parthian Empire ['contrast' only essay]

There are two main ways to structure a compare and contrast essay, namely using a block or a point-by-point structure. For the block structure, all of the information about one of the objects being compared/contrasted is given first, and all of the information about the other object is listed afterwards. This type of structure is similar to the block structure used for cause and effect and problem-solution essays. For the point-by-point structure, each similarity (or difference) for one object is followed immediately by the similarity (or difference) for the other. Both types of structure have their merits. The former is easier to write, while the latter is generally clearer as it ensures that the similarities/differences are more explicit.

The two types of structure, block and point-by-point, are shown in the diagram below.

Compare and Contrast Structure Words

Compare and contrast structure words are transition signals which show the similarities or differences. Below are some common examples.

- both... and...

- not only... but also...

- neither... nor...

- just like (+ noun)

- similar to (+ noun)

- to be similar (to)

- to be the same as

- to be alike

- to compare (to/with)

For example:

- Computers can be used to communicate easily, for example via email. Similarly/Likewise, the mobile phone is a convenient tool for communication.

- Both computers and mobile phones can be used to communicate easily with other people.

- Just like the computer, the mobile phone can be used to communicate easily with other people.

- The computer is similar to the mobile phone in the way it can be used for easy communication.

- In contrast