Deep-Learning-Specialization-Coursera

This repo contains updated (2021) versions of all the assignments/labs (done by me) of the Deep Learning Specialization on Coursera by Andrew Ng. It includes building various deep learning models from scratch and implementing them for object detection, facial recognition, autonomous driving, neural machine translation, trigger word detection, etc.

Announcement

[!IMPORTANT] Check our latest paper (accepted in ICDAR’23) on Urdu OCR

This repo contains all of the solved assignments of Coursera’s most famous Deep Learning Specialization of 5 courses offered by deeplearning.ai

Instructor: Prof. Andrew Ng

This Specialization was updated in April 2021 to include developments in deep learning and programming frameworks. One of the biggest changes was the shift from TensorFlow 1 to TensorFlow 2, and new materials were also added. However, most of the old online repositories still contain only the old code. This repo contains updated versions of the assignments. Happy Learning :)

Programming Assignments

Course 1: Neural Networks and Deep Learning

- W2A1 - Logistic Regression with a Neural Network mindset

- W2A2 - Python Basics with Numpy

- W3A1 - Planar data classification with one hidden layer

- W4A1 - Building your Deep Neural Network: Step by Step

- W4A2 - Deep Neural Network for Image Classification: Application

Course 2: Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization

- W1A1 - Initialization

- W1A2 - Regularization

- W1A3 - Gradient Checking

- W2A1 - Optimization Methods

- W3A1 - Introduction to TensorFlow

Course 3: Structuring Machine Learning Projects

- There were no programming assignments in this course. It was completely theoretical.

- Here is a link to the course

Course 4: Convolutional Neural Networks

- W1A1 - Convolutional Model: step by step

- W1A2 - Convolutional Model: application

- W2A1 - Residual Networks

- W2A2 - Transfer Learning with MobileNet

- W3A1 - Autonomous Driving - Car Detection

- W3A2 - Image Segmentation - U-net

- W4A1 - Face Recognition

- W4A2 - Neural Style transfer

Course 5: Sequence Models

- W1A1 - Building a Recurrent Neural Network - Step by Step

- W1A2 - Character level language model - Dinosaurus land

- W1A3 - Improvise A Jazz Solo with an LSTM Network

- W2A1 - Operations on word vectors

- W2A2 - Emojify

- W3A1 - Neural Machine Translation With Attention

- W3A2 - Trigger Word Detection

- W4A1 - Transformer Network

- W4A2 - Named Entity Recognition - Transformer Application

- W4A3 - Extractive Question Answering - Transformer Application

I've uploaded these solutions here only as a help for those who get stuck somewhere; it may save them some time. I strongly recommend not copying any part of the code directly (from here or anywhere else) while doing the assignments of this specialization. The assignments are fairly easy, and one learns a great deal by doing them. Thanks to the deeplearning.ai team for giving this treasure to us.

Connect with me

Name: Abdur Rahman

Institution: Indian Institute of Technology Delhi

Find me on:

Deep-Learning-Specialization

Coursera deep learning specialization, neural networks and deep learning.

In this course, you will learn the foundations of deep learning. When you finish this class, you will:

- Understand the major technology trends driving Deep Learning.

- Be able to build, train and apply fully connected deep neural networks.

- Know how to implement efficient (vectorized) neural networks.

- Understand the key parameters in a neural network’s architecture.

Week 1: Introduction to deep learning

Be able to explain the major trends driving the rise of deep learning, and understand where and how it is applied today.

- Quiz 1: Introduction to deep learning

Week 2: Neural Networks Basics

Learn to set up a machine learning problem with a neural network mindset. Learn to use vectorization to speed up your models.

- Quiz 2: Neural Network Basics

- Programming Assignment: Python Basics With Numpy

- Programming Assignment: Logistic Regression with a Neural Network mindset

Week 3: Shallow neural networks

Learn to build a neural network with one hidden layer, using forward propagation and backpropagation.

- Quiz 3: Shallow Neural Networks

- Programming Assignment: Planar Data Classification with One Hidden Layer

Week 4: Deep Neural Networks

Understand the key computations underlying deep learning, use them to build and train deep neural networks, and apply them to computer vision.

- Quiz 4: Key concepts on Deep Neural Networks

- Programming Assignment: Building your Deep Neural Network Step by Step

- Programming Assignment: Deep Neural Network Application

Course Certificate

Coursera: Neural Networks and Deep Learning (Week 4A) [Assignment Solution] - deeplearning.ai

▸ Building your Deep Neural Network: Step by Step

I have recently completed the Neural Networks and Deep Learning course from Coursera by deeplearning.ai. While doing the course we have to go through various quizzes and assignments in Python. Here, I am sharing my solutions for the weekly assignments throughout the course. These solutions are for reference only.

> It is recommended that you solve the assignments honestly by yourself; only then does it make sense to complete the course.

> But in case you get stuck, feel free to refer to the solutions provided by me.

Don't just copy and paste the code for the sake of completion. Even if you copy the code, make sure you understand it first.

Building your Deep Neural Network: Step by Step

In this notebook, you will implement all the functions required to build a deep neural network. In the next assignment, you will use these functions to build a deep neural network for image classification.

After this assignment you will be able to:

- Use non-linear units like ReLU to improve your model

- Build a deeper neural network (with more than 1 hidden layer)

- Implement an easy-to-use neural network class

Notation:

- Superscript $[l]$ denotes a quantity associated with the $l^{th}$ layer. Example: $a^{[l]}$ is the $l^{th}$ layer activation. $W^{[l]}$ and $b^{[l]}$ are the $l^{th}$ layer parameters.

- Superscript $(i)$ denotes a quantity associated with the $i^{th}$ example. Example: $x^{(i)}$ is the $i^{th}$ training example.

- Subscript $i$ denotes the $i^{th}$ entry of a vector. Example: $a^{[l]}_i$ denotes the $i^{th}$ entry of the $l^{th}$ layer's activations.

Let's get started!

1 - Packages

Let's first import all the packages that you will need during this assignment.

- numpy is the main package for scientific computing with Python.

- matplotlib is a library to plot graphs in Python.

- dnn_utils provides some necessary functions for this notebook.

- testcases provides some test cases to assess the correctness of your functions.

- np.random.seed(1) is used to keep all the random function calls consistent. It will help us grade your work. Please don't change the seed.

2 - Outline of the Assignment

- Initialize the parameters for a two-layer network and for an L -layer neural network.

- Complete the LINEAR part of a layer's forward propagation step (resulting in Z [ l ] ).

- We give you the ACTIVATION function (relu/sigmoid).

- Combine the previous two steps into a new [LINEAR->ACTIVATION] forward function.

- Stack the [LINEAR->RELU] forward function L-1 times (for layers 1 through L-1) and add a [LINEAR->SIGMOID] at the end (for the final layer L). This gives you a new L_model_forward function.

- Compute the loss.

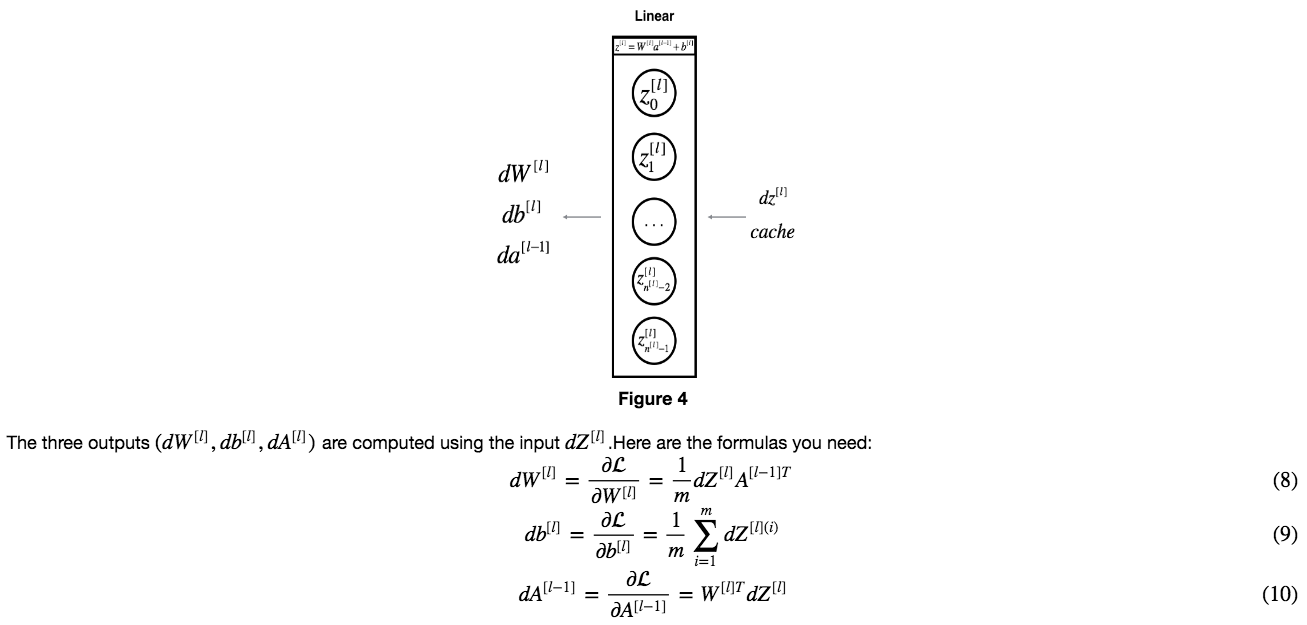

- Complete the LINEAR part of a layer's backward propagation step.

- We give you the gradient of the ACTIVATION function (relu_backward/sigmoid_backward).

- Combine the previous two steps into a new [LINEAR->ACTIVATION] backward function.

- Stack [LINEAR->RELU] backward L-1 times and add [LINEAR->SIGMOID] backward in a new L_model_backward function

- Finally update the parameters.

3 - Initialization

3.1 - 2-layer Neural Network

- The model's structure is: LINEAR -> RELU -> LINEAR -> SIGMOID .

- Use random initialization for the weight matrices. Use np.random.randn(shape)*0.01 with the correct shape.

- Use zero initialization for the biases. Use np.zeros(shape) .
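A minimal sketch of the initialization described above, assuming the sizes are passed as `n_x`, `n_h`, `n_y` (input, hidden, output) and the parameters are returned in a dictionary. The function name and dictionary keys are illustrative assumptions, not necessarily the grader's exact interface.

```python
import numpy as np

def initialize_parameters(n_x, n_h, n_y):
    """Initialize a LINEAR -> RELU -> LINEAR -> SIGMOID network."""
    np.random.seed(1)  # fixed seed so repeated runs give the same weights

    W1 = np.random.randn(n_h, n_x) * 0.01  # small random weights, shape (n_h, n_x)
    b1 = np.zeros((n_h, 1))                # zero biases, shape (n_h, 1)
    W2 = np.random.randn(n_y, n_h) * 0.01  # shape (n_y, n_h)
    b2 = np.zeros((n_y, 1))                # shape (n_y, 1)

    return {"W1": W1, "b1": b1, "W2": W2, "b2": b2}

params = initialize_parameters(3, 2, 1)
print({name: value.shape for name, value in params.items()})
```

The small scale factor keeps the initial activations near zero, while the random values break the symmetry between hidden units.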

3.2 - L-layer Neural Network

- The model's structure is [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID. I.e., it has $L-1$ layers using a ReLU activation function followed by an output layer with a sigmoid activation function.

- Use random initialization for the weight matrices. Use np.random.randn(shape) * 0.01 .

- Use zeros initialization for the biases. Use np.zeros(shape) .

- We will store $n^{[l]}$, the number of units in different layers, in a variable layer_dims. For example, the layer_dims for the "Planar Data classification model" from last week would have been [2,4,1]: there were two inputs, one hidden layer with 4 hidden units, and an output layer with 1 output unit. This means W1's shape was (4,2), b1 was (4,1), W2 was (1,4) and b2 was (1,1). Now you will generalize this to $L$ layers!

- Here is the implementation for $L=1$ (one layer neural network). It should inspire you to implement the general case (L-layer neural network).
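The generalization to $L$ layers can be sketched as a loop over `layer_dims`. This is one possible version under the assumptions stated in the bullets above; the function name and the seed value are illustrative.

```python
import numpy as np

def initialize_parameters_deep(layer_dims):
    """Create W1..WL and b1..bL for the layer sizes listed in layer_dims."""
    np.random.seed(3)  # arbitrary fixed seed, chosen only for reproducibility
    parameters = {}
    L = len(layer_dims)  # number of layers, counting the input layer

    for l in range(1, L):
        # W_l maps layer l-1 (layer_dims[l-1] units) to layer l (layer_dims[l] units)
        parameters["W" + str(l)] = np.random.randn(layer_dims[l], layer_dims[l - 1]) * 0.01
        parameters["b" + str(l)] = np.zeros((layer_dims[l], 1))

    return parameters

deep_params = initialize_parameters_deep([2, 4, 1])
print({name: value.shape for name, value in deep_params.items()})
```

For `[2, 4, 1]` this reproduces the shapes quoted above: W1 is (4,2), b1 is (4,1), W2 is (1,4) and b2 is (1,1).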

4 - Forward propagation module

4.1 - Linear Forward

- LINEAR -> ACTIVATION where ACTIVATION will be either ReLU or Sigmoid.

- [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID (whole model)
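The LINEAR step computes $Z = WA + b$ for all examples at once. A minimal sketch, assuming activations are stored column-wise (one column per example) and that the inputs are cached for the backward pass; the names are illustrative.

```python
import numpy as np

def linear_forward(A, W, b):
    """Compute Z = W A + b and cache the inputs for backpropagation."""
    Z = W @ A + b       # b has shape (n_l, 1) and broadcasts across the examples
    cache = (A, W, b)   # kept so the backward step can compute dW, db, dA_prev
    return Z, cache

A = np.array([[1.0, 2.0],
              [3.0, 4.0]])   # 2 features, 2 examples
W = np.array([[0.5, -1.0]])  # 1 unit fed by 2 inputs
b = np.array([[0.1]])
Z, linear_cache = linear_forward(A, W, b)
print(Z.shape)  # (1, 2)
```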

4.2 - Linear-Activation Forward

- Sigmoid: $\sigma(Z) = \sigma(WA + b) = \frac{1}{1 + e^{-(WA + b)}}$. We have provided you with the sigmoid function. This function returns two items: the activation value "a" and a "cache" that contains "Z" (it's what we will feed in to the corresponding backward function). To use it you could just call: A, activation_cache = sigmoid(Z)

- ReLU: The mathematical formula for ReLU is $A = \mathrm{ReLU}(Z) = \max(0, Z)$. We have provided you with the relu function. This function returns two items: the activation value "A" and a "cache" that contains "Z" (it's what we will feed in to the corresponding backward function). To use it you could just call: A, activation_cache = relu(Z)
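To make the combined step concrete, here is a sketch of a LINEAR -> ACTIVATION forward function. The `sigmoid` and `relu` below are stand-ins written for this example (the course supplies its own versions in dnn_utils), and the cache layout is an assumption.

```python
import numpy as np

def sigmoid(Z):
    """Stand-in helper: returns the activation and Z as the cache."""
    return 1.0 / (1.0 + np.exp(-Z)), Z

def relu(Z):
    """Stand-in helper: returns the activation and Z as the cache."""
    return np.maximum(0, Z), Z

def linear_activation_forward(A_prev, W, b, activation):
    """Apply Z = W A_prev + b followed by the chosen activation."""
    Z = W @ A_prev + b
    linear_cache = (A_prev, W, b)

    if activation == "sigmoid":
        A, activation_cache = sigmoid(Z)
    elif activation == "relu":
        A, activation_cache = relu(Z)
    else:
        raise ValueError("activation must be 'sigmoid' or 'relu'")

    return A, (linear_cache, activation_cache)

A_prev = np.array([[1.0], [-2.0]])
W = np.array([[1.0, 1.0]])
b = np.array([[0.0]])
A_relu, _ = linear_activation_forward(A_prev, W, b, "relu")        # Z = -1, so ReLU gives 0
A_sigmoid, _ = linear_activation_forward(A_prev, W, b, "sigmoid")  # sigmoid(-1)
```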

d) L-Layer Model

- Use the functions you had previously written

- Use a for loop to replicate [LINEAR->RELU] (L-1) times

- Don't forget to keep track of the caches in the "caches" list. To add a new value c to a list , you can use list.append(c) .
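The loop described in these bullets might look like the sketch below. To keep it self-contained, the per-layer step is folded into a small hypothetical helper `_layer_forward`; in the notebook you would call your own LINEAR -> ACTIVATION function instead.

```python
import numpy as np

def _layer_forward(A_prev, W, b, activation):
    """Hypothetical helper: one LINEAR -> ACTIVATION step with its cache."""
    Z = W @ A_prev + b
    A = np.maximum(0, Z) if activation == "relu" else 1.0 / (1.0 + np.exp(-Z))
    return A, ((A_prev, W, b), Z)

def L_model_forward(X, parameters):
    """[LINEAR -> RELU] x (L-1) -> LINEAR -> SIGMOID forward pass."""
    caches = []
    A = X
    L = len(parameters) // 2  # each layer contributes one W and one b

    for l in range(1, L):     # hidden layers 1 .. L-1 use ReLU
        A, cache = _layer_forward(A, parameters[f"W{l}"], parameters[f"b{l}"], "relu")
        caches.append(cache)

    # output layer L uses sigmoid
    AL, cache = _layer_forward(A, parameters[f"W{L}"], parameters[f"b{L}"], "sigmoid")
    caches.append(cache)
    return AL, caches

rng = np.random.default_rng(0)
layer_dims = [3, 4, 1]
parameters = {}
for l in range(1, len(layer_dims)):
    parameters[f"W{l}"] = rng.standard_normal((layer_dims[l], layer_dims[l - 1])) * 0.01
    parameters[f"b{l}"] = np.zeros((layer_dims[l], 1))

X = rng.standard_normal((3, 5))  # 3 features, 5 examples
AL, caches = L_model_forward(X, parameters)
print(AL.shape, len(caches))  # (1, 5) 2
```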

5 - Cost function

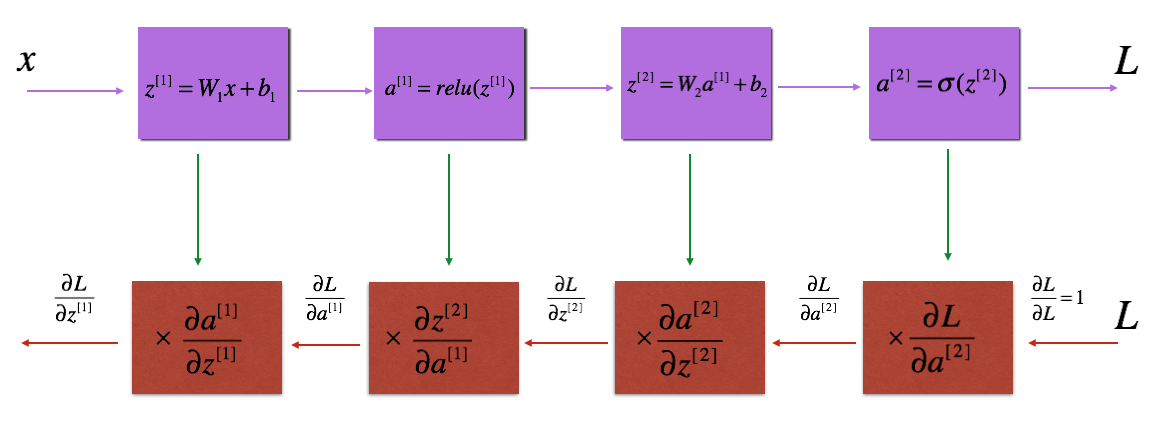
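As a sketch of the cost computation, assuming the standard cross-entropy cost for a sigmoid output, $J = -\frac{1}{m}\sum_{i=1}^{m}\left(y^{(i)}\log a^{[L](i)} + (1-y^{(i)})\log(1-a^{[L](i)})\right)$, where `AL` and `Y` both have shape (1, m):

```python
import numpy as np

def compute_cost(AL, Y):
    """Cross-entropy cost averaged over the m examples (columns)."""
    m = Y.shape[1]
    cost = -np.sum(Y * np.log(AL) + (1 - Y) * np.log(1 - AL)) / m
    return float(np.squeeze(cost))  # plain scalar rather than a nested array

AL = np.array([[0.8, 0.9, 0.4]])  # predicted probabilities
Y = np.array([[1, 1, 0]])         # true labels
cost = compute_cost(AL, Y)
print(cost)
```

If `AL` and `Y` do not have matching shapes, the element-wise product either fails or broadcasts silently to a wrong result, so checking both shapes before calling the function is a cheap safeguard.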

6 - Backward propagation module

- LINEAR backward

- LINEAR -> ACTIVATION backward where ACTIVATION computes the derivative of either the ReLU or sigmoid activation

- [LINEAR -> RELU] × (L-1) -> LINEAR -> SIGMOID backward (whole model)

6.1 - Linear backward
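For a layer with $Z = WA_{prev} + b$, the gradients follow from the chain rule: $dW = \frac{1}{m}\,dZ\,A_{prev}^{T}$, $db = \frac{1}{m}\sum_i dZ^{(i)}$ and $dA_{prev} = W^{T}dZ$. A minimal sketch, assuming the cache holds `(A_prev, W, b)` from the forward pass:

```python
import numpy as np

def linear_backward(dZ, cache):
    """Gradients of the cost with respect to W, b and the previous activations."""
    A_prev, W, b = cache
    m = A_prev.shape[1]  # number of examples

    dW = dZ @ A_prev.T / m                      # same shape as W
    db = np.sum(dZ, axis=1, keepdims=True) / m  # same shape as b
    dA_prev = W.T @ dZ                          # same shape as A_prev
    return dA_prev, dW, db

A_prev = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])  # 3 units, 2 examples
W = np.array([[0.1, 0.2, 0.3]])
b = np.array([[0.0]])
dZ = np.array([[1.0, -1.0]])
dA_prev, dW, db = linear_backward(dZ, (A_prev, W, b))
```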

6.2 - Linear-Activation backward

- sigmoid_backward : Implements the backward propagation for SIGMOID unit. You can call it as follows:

- relu_backward : Implements the backward propagation for RELU unit. You can call it as follows:
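The course supplies these two helpers, so the versions below are only stand-ins that show what they compute. The `(dA, activation_cache)` signature is an assumption made for this sketch, with the cache holding the `Z` stored during the forward pass.

```python
import numpy as np

def relu_backward(dA, activation_cache):
    """dZ = dA where Z > 0, and 0 elsewhere."""
    Z = activation_cache
    dZ = np.array(dA, copy=True)  # copy so the caller's dA is not modified
    dZ[Z <= 0] = 0
    return dZ

def sigmoid_backward(dA, activation_cache):
    """dZ = dA * s * (1 - s), with s = sigmoid(Z)."""
    Z = activation_cache
    s = 1.0 / (1.0 + np.exp(-Z))
    return dA * s * (1 - s)

Z = np.array([[-1.0, 0.0, 2.0]])
dA = np.array([[1.0, 1.0, 1.0]])
dZ_relu = relu_backward(dA, Z)        # only the last entry passes through
dZ_sigmoid = sigmoid_backward(dA, Z)  # middle entry is 0.25 because sigmoid(0) = 0.5
```

The resulting `dZ` is what gets passed on to the linear backward step of the same layer.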

6.3 - L-Model Backward

6.4 - Update Parameters
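The update is plain gradient descent: $W^{[l]} = W^{[l]} - \alpha\,dW^{[l]}$ and $b^{[l]} = b^{[l]} - \alpha\,db^{[l]}$, where $\alpha$ is the learning rate. A minimal sketch, assuming gradients are stored under the keys `dW1`, `db1`, ... (an illustrative naming choice):

```python
import numpy as np

def update_parameters(parameters, grads, learning_rate):
    """Return new parameters after one gradient descent step."""
    L = len(parameters) // 2    # number of layers
    updated = dict(parameters)  # shallow copy; the arrays are replaced, not mutated

    for l in range(1, L + 1):
        updated[f"W{l}"] = parameters[f"W{l}"] - learning_rate * grads[f"dW{l}"]
        updated[f"b{l}"] = parameters[f"b{l}"] - learning_rate * grads[f"db{l}"]
    return updated

old = {"W1": np.array([[1.0, 2.0]]), "b1": np.array([[0.0]])}
grads = {"dW1": np.array([[0.5, -0.5]]), "db1": np.array([[1.0]])}
new = update_parameters(old, grads, learning_rate=0.1)
```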

7 - Conclusion

- A two-layer neural network

- An L-layer neural network

Hi bro... I was working on the week 4 assignment. I am getting an assertion error in the compute_cost function. Help me with this. The same function is working for the L-layer model.

AssertionError Traceback (most recent call last)
in ()
----> 1 parameters = two_layer_model(train_x, train_y, layers_dims = (n_x, n_h, n_y), num_iterations = 2500, print_cost= True)

in two_layer_model(X, Y, layers_dims, learning_rate, num_iterations, print_cost)
46 # Compute cost
47 ### START CODE HERE ### (≈ 1 line of code)
---> 48 cost = compute_cost(A2, Y)
49 ### END CODE HERE ###
50

/home/jovyan/work/Week 4/Deep Neural Network Application: Image Classification/dnn_app_utils_v3.py in compute_cost(AL, Y)
265
266 cost = np.squeeze(cost) # To make sure your cost's shape is what we expect (e.g. this turns [[17]] into 17).
--> 267 assert(cost.shape == ())
268
269 return cost

AssertionError:

Hey, I am facing a problem in the linear activation forward function of the week 4 assignment Building Deep Neural Network. I think I have implemented it correctly and the output matches the expected one. I also cross-checked it with your solution and both were the same. But the grader marks it, and all the functions in which this function is called, as incorrect. I am unable to find any error in the code, as it was straightforward and used the built-in SIGMOID and RELU functions. Please guide.

Hi bro, I am always getting the grading error although I am getting the correct output for all.

Week 2 : Programming Assignment: Residual Networks

I am facing this error in the Week 2 programming assignment: Residual Networks. Please guide.

NameError Traceback (most recent call last)
Input In [27], in <cell line: 13>()
9 X3 = np.ones((1, 4, 4, 3)) * 3
11 X = np.concatenate((X1, X2, X3), axis = 0).astype(np.float32)
---> 13 A3 = identity_block(X, f=2, filters=[4, 4, 3],
14 initializer=lambda seed=0:constant(value=1))
15 print('\033[1mWith training=False\033[0m\n')
16 A3np = A3.numpy()

Input In [26], in identity_block(X, f, filters, initializer)
24 # First component of main path
25 X = Conv2D(filters = F1, kernel_size = 1, strides = (1,1), padding = 'valid', kernel_initializer = initializer(seed=0))(X)
---> 26 X = BatchNormalization(axis = 3)(X, training = training) # Default axis
27 X = Activation('relu')(X)
29 ### START CODE HERE
30 ## Second component of main path (≈3 lines)

NameError: name 'training' is not defined

Does this help?

I added training = True, but it still gives an error.

Hi @fayyaz9191 ,

Your code in the first post is correct, because the value of the input argument 'training' needs to be passed to BatchNormalization(). The reported error could be because the execution environment is not up to date, i.e. your notebook has been inactive. Rerunning your code from the start should help.

The notebook makes use of tf.keras.backend.set_learning_phase to set the training phase. Why use training flag inside the method def identity_block ?

Hi @balaji.ambresh ,

Unless I am on the wrong course, I am referring to C4 week 2 ResNets ex1, where the function identity_block() is defined.

This is the function signature:

training is not part of the parameter list.

You are right about that. I was using the assignment from a GitHub repo. I will try to locate that file again later.

Notes, programming assignments and quizzes from all courses within the Coursera Deep Learning specialization offered by deeplearning.ai: (i) Neural Networks and Deep Learning; (ii) Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization; (iii) Structuring Machine Learning Projects; (iv) Convolutional Neural Networks; (v) Sequence Models - amanchadha/coursera-deep ...

27 #(≈ 2 lines of code) 28 # compute activation —> 29 A = sigmoid(np.dot(w.T,X) + b) 30 # compute cost by using np.dot to perform multiplication. 31 # And don't use loops for the sum. <array_function internals> in dot(*args, **kwargs) ValueError: shapes (1,4) and (2,3) not aligned: 4 (dim 1) != 2 (dim 0)

Welcome to this week's programming assignment! Up until now, you've always used Numpy to build neural networks, but this week you'll explore a deep learning framework that allows you to build neural networks more easily. Machine learning frameworks like TensorFlow, PaddlePaddle, Torch, Caffe, Keras, and many others can speed up your machine ...

Hi everyone! I don't understand why this code: train_set_x_flatten = train_set_x_orig.reshape(-1, m_train) won't return the correct results as: train_set_x_flatten = train_set_x_orig.reshape( m_train, -1).T I wanted to see if there was a way to implement this without using transpose. My reasoning was that we just want the resulting dataset to have m_train columns and some number of rows we ...
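A small experiment can show why the two reshapes differ. This sketch uses a tiny made-up array in place of the real dataset (2 images of 2x2 pixels with 3 channels), so the sizes are assumptions for illustration only.

```python
import numpy as np

m_train, num_px = 2, 2
# Stand-in for train_set_x_orig, with shape (m_train, num_px, num_px, 3)
train_set_x_orig = np.arange(m_train * num_px * num_px * 3).reshape(m_train, num_px, num_px, 3)

with_transpose = train_set_x_orig.reshape(m_train, -1).T  # shape (12, 2)
without_transpose = train_set_x_orig.reshape(-1, m_train) # also shape (12, 2)

image0 = train_set_x_orig[0].ravel()  # all 12 values belonging to the first image

print(np.array_equal(with_transpose[:, 0], image0))     # True
print(np.array_equal(without_transpose[:, 0], image0))  # False
```

Both results have the same shape, but `reshape` fills the new array in row-major order, so `reshape(-1, m_train)` interleaves values from different images across the columns. Only the version that first makes one row per image and then transposes keeps each image in its own column.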

Programming Assignment: Convolutional Model: application; Week 2: Deep convolutional models Key Concepts of Week 2. Understand multiple foundational papers of convolutional neural networks; Analyze the dimensionality reduction of a volume in a very deep network; Understand and Implement a Residual network; Build a deep neural network using Keras

• Build and train deep neural networks, implement vectorized neural networks, identify architecture parameters, and apply DL to your applications • Use best practices to train and develop test sets and analyze bias/variance for building DL applications, use standard NN techniques, apply optimization algorithms, and implement a neural ...

I've finished almost the whole rest of the assignment, but this function is upsetting me, because np.zeros() seems like such a basic numpy function that I need to be able to use correctly!! SECONDLY,

Week 2 , programming assignment. Course Q&A. Deep Learning Specialization. Convolutional Neural Networks. Moneet_Mohan_Devadig January 16, 2022, 2:36pm 1. I am getting the assertion error, 'AssertionError: Wrong values when training=True.'. ... Week 2 - assignement 1: convolutional block Convolutional Neural Networks. @dexcrew I too have ...

Programming Assignments. Course 1: Neural Networks and Deep Learning. Week 2 - PA 1 - Logistic Regression with a Neural Network mindset. Week 3 - PA 2 - Planar data classification with one hidden layer. Week 4 - PA 3 - Building your Deep Neural Network: Step by Step. Week 4 - PA 4 - Deep Neural Network for Image Classification: Application.

Hello all, Actually i didnt understand what is the problem in my program. I am new one. [Week 2 Assignment Problem.pdf|attachment] Hello all, Actually i didnt understand what is the problem in my program. I am new one. ... Neural Networks and Deep Learning. week-2. asikulhaque April 30, 2024, 5:48am 1. Hello all, Actually i didnt understand ...

Week 2 Week 3 Week 4 Neural Networks: A Review - Part 1 Neural Networks: A Review - 2 ... Quiz Programming Assignment 2 Quiz : Weekly Quiz 4 Deep Learning for Computer Vision: Week 4 Feedback Form ... A deep classifier network with a fully connected layer at the end can handle input images of varying size

When creating a post, please add: Week 2 must be added in the tags option of the post. Link to the classroom item you are referring to: Coursera | Online Courses & Credentials From Top Educators. ... Question about Course 1 Week 2 programming assignment cell 11. Course Q&A. Deep Learning Specialization. Neural Networks and Deep Learning. week-2 ...
