How to write a hypothesis for marketing experimentation

- Apr 11, 2021

- 5 minute read

- Creating your strongest marketing hypothesis

The potential for your marketing improvement depends on the strength of your testing hypotheses.

But where are you getting your test ideas from? Have you been scouring competitor sites, or perhaps pulling from previous designs on your site? The web is full of ideas and you’re full of ideas – there is no shortage of inspiration, that’s for sure.

Coming up with something you want to test isn’t hard to do. Coming up with something you should test can be hard to do.

Hard – yes. Impossible? No. Which is good news, because if you can’t create hypotheses for things that should be tested, your test results won’t mean much, and you probably shouldn’t be spending your time testing.

Taking the time to write your hypotheses correctly will help you structure your ideas, get better results, and avoid wasting traffic on poor test designs.

With this post, we’re getting advanced with marketing hypotheses, showing you how to write and structure your hypotheses to gain both business results and marketing insights!

By the time you finish reading, you’ll be able to:

- Distinguish a solid hypothesis from a time-waster, and

- Structure your solid hypothesis to get results and insights

To make this whole experience a bit more tangible, let’s track a sample idea from…well…idea to hypothesis.

Let’s say you identified a call-to-action (CTA)* while browsing the web, and you were inspired to test something similar on your own lead generation landing page. You think it might work for your users! Your idea is:

“My page needs a new CTA.”

*A call-to-action is the point where you, as a marketer, ask your prospect to do something on your page. It often includes a button or link to an action like “Buy”, “Sign up”, or “Request a quote”.

The basics: The correct marketing hypothesis format


A well-structured hypothesis provides insights whether it is proved, disproved, or results are inconclusive.

You should never phrase a marketing hypothesis as a question. It should be written as a statement that can be rejected or confirmed.

Further, it should be a statement geared toward revealing insights. With this in mind, it helps to imagine each statement followed by a reason:

- Changing _______ into ______ will increase [conversion goal], because:

- Changing _______ into ______ will decrease [conversion goal], because:

- Changing _______ into ______ will not affect [conversion goal], because:
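The template above can be made concrete as a small record type. This is a minimal sketch, not part of Conversion's own process: the class name `MarketingHypothesis` and its fields are hypothetical, and the class simply refuses to build a statement unless the direction is one the template allows and the ‘because’ clause is filled in.

```python
from dataclasses import dataclass

ALLOWED_DIRECTIONS = {"increase", "decrease", "not affect"}


@dataclass(frozen=True)
class MarketingHypothesis:
    """A hypothesis in the 'Changing X into Y will ... [goal], because ...' form.

    Hypothetical illustration; field names are not from any real tool.
    """

    element: str    # what you change, e.g. "the CTA copy"
    control: str    # the current version
    variant: str    # the version you will test
    direction: str  # "increase", "decrease" or "not affect"
    goal: str       # the conversion goal you measure
    because: str    # the reasoning the test is meant to confirm or reject

    def __post_init__(self) -> None:
        if self.direction not in ALLOWED_DIRECTIONS:
            raise ValueError(f"direction must be one of {sorted(ALLOWED_DIRECTIONS)}")
        if not self.because.strip():
            # Without a reason, a result cannot be turned into an insight
            raise ValueError("a hypothesis needs a 'because' clause")

    def statement(self) -> str:
        return (
            f'Changing {self.element} from "{self.control}" to "{self.variant}" '
            f"will {self.direction} {self.goal}, because {self.because}."
        )


idea = MarketingHypothesis(
    element="the CTA copy",
    control="Submit",
    variant="Send demo request",
    direction="increase",
    goal="demo requests",
    because="users want to know what their information will be used for",
)
print(idea.statement())
```

Making the ‘because’ field mandatory is the design choice that matters here: it forces every test idea to carry the reasoning that turns a result into an insight.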

Each of the above sentences ends with ‘because’ to set the expectation that there will be an explanation behind the results of whatever you’re testing.

It’s important to remember to plan ahead when you create a test, and think about explaining why the test turned out the way it did when the results come in.

Understanding what makes an idea worth testing is necessary for your optimization team.

If your tests are based on random ideas you googled or were suggested by a consultant, your testing process still has its training wheels on. Great hypotheses aren’t random. They’re based on rationale and aim for learning.

Hypotheses should be based on themes and analysis that show potential conversion barriers.

At Conversion, we call this investigation phase the “Explore Phase”, where we use frameworks like the LIFT Model to understand the prospect’s unique perspective. (You can read more about the full optimization process here.)

A well-founded marketing hypothesis should also provide you with new, testable clues about your users regardless of whether or not the test wins, loses or yields inconclusive results.

These new insights should inform future testing: a solid hypothesis can help you quickly separate worthwhile ideas from the rest when planning follow-up tests.

“Ultimately, what matters most is that you have a hypothesis going into each experiment and you design each experiment to address that hypothesis.” – Nick So, VP of Delivery

Here’s a quick tip:

If you’re about to run a test that isn’t going to tell you anything new about your users and their motivations, it’s probably not worth investing your time in.

Let’s take this opportunity to refer back to your original idea: “My page needs a new CTA.”

OK, but what now? To get actionable insights from ‘a new CTA’, you need to know why it behaved the way it did. You need to ask the right question.

To test the waters, maybe you changed the copy of the CTA button on your lead generation form from “Submit” to “Send demo request”. If this change leads to an increase in conversions, it could mean that your users require more clarity about what their information is being used for.

That’s a potential insight.

Based on this insight, you could follow up with another test that adds copy around the CTA about next steps: what the user should anticipate after they have submitted their information.

For example, will they be speaking to a specialist via email? Will something be waiting for them the next time they visit your site? You can test providing more information, and see if your users are interested in knowing it!

That’s the cool thing about a good hypothesis: the results of the test, while important (of course), aren’t the only component driving your future test ideas. The insights gleaned lead to further hypotheses and insights in a virtuous cycle.

The term “hypothesis” probably isn’t foreign to you. In fact, it may bring up memories of grade-school science class; it’s a critical part of the scientific method.

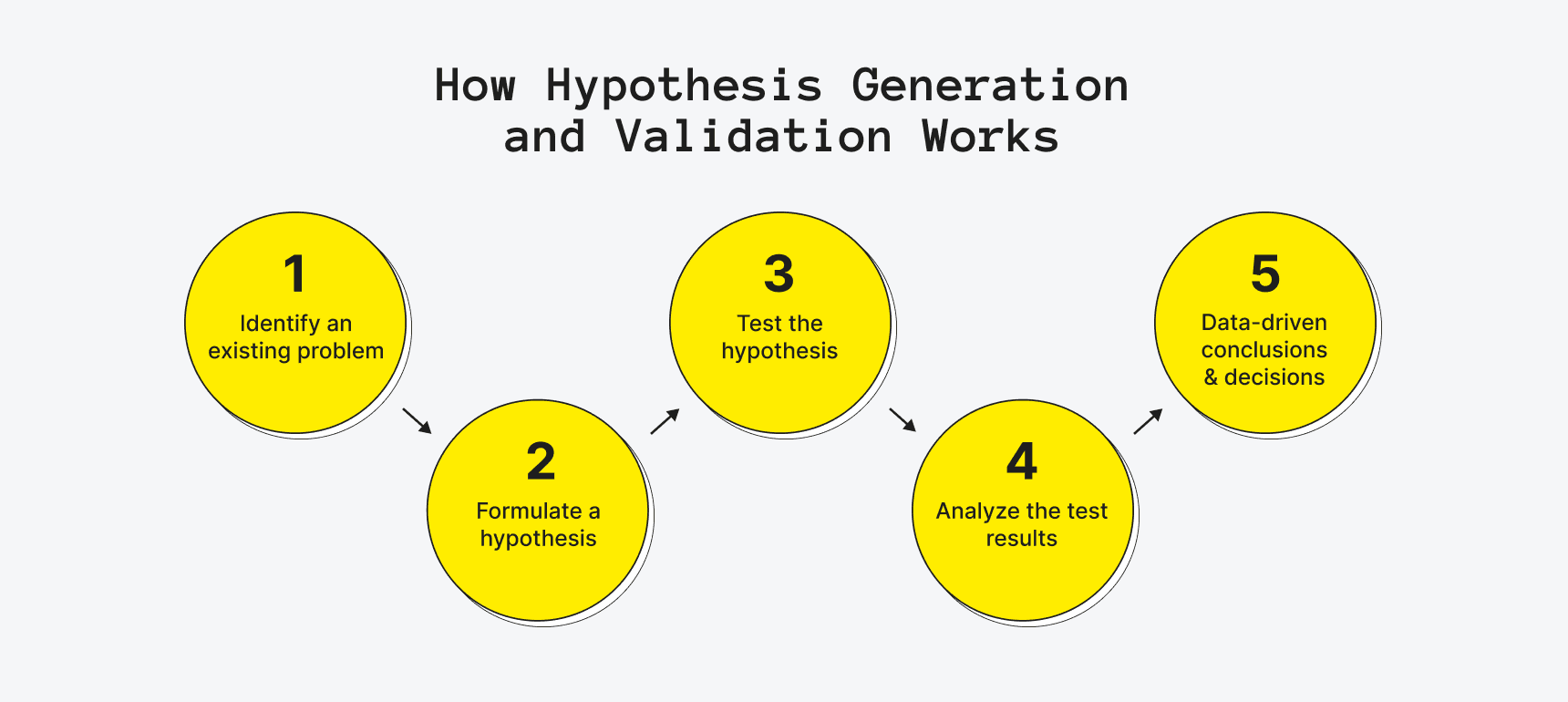

Applied to testing, the scientific method is a systematic routine for predicting, and then checking, the results of experiments:

- Collecting data and information through observation

- Creating tentative descriptions of what is being observed

- Forming hypotheses that predict different outcomes based on these observations

- Testing your hypotheses

- Analyzing the data, drawing conclusions and insights from the results

Don’t worry! Hypothesizing may seem ‘sciency’, but it doesn’t have to be complicated in practice.

Hypothesizing simply helps ensure the results from your tests are quantifiable, and is necessary if you want to understand how the results reflect the change made in your test.

A strong marketing hypothesis allows testers to use a structured approach in order to discover what works, why it works, how it works, where it works, and who it works on.

“My page needs a new CTA.” Is this idea in its current state clear enough to help you understand what works? Maybe. Why it works? No. Where it works? Maybe. Who it works on? No.

Your idea needs refining.

Let’s pull back and take a broader look at the lead generation landing page we want to test.

Imagine the situation: you’ve been diligent in your data collection and you notice several recurrences of Clarity pain points – meaning that there are many unclear instances throughout the page’s messaging.

Rather than focusing on the CTA right off the bat, it may be more beneficial to deal with the bigger clarity issue.

Now you’re starting to think about solving your prospects’ conversion barriers rather than just testing random ideas!

If you believe the overall page is unclear, your overarching theme of inquiry might be positioned as:

- “Improving the clarity of the page will reduce confusion and improve [conversion goal].”

By testing a hypothesis that supports this clarity theme, you can gain confidence in the validity of it as an actionable marketing insight over time.

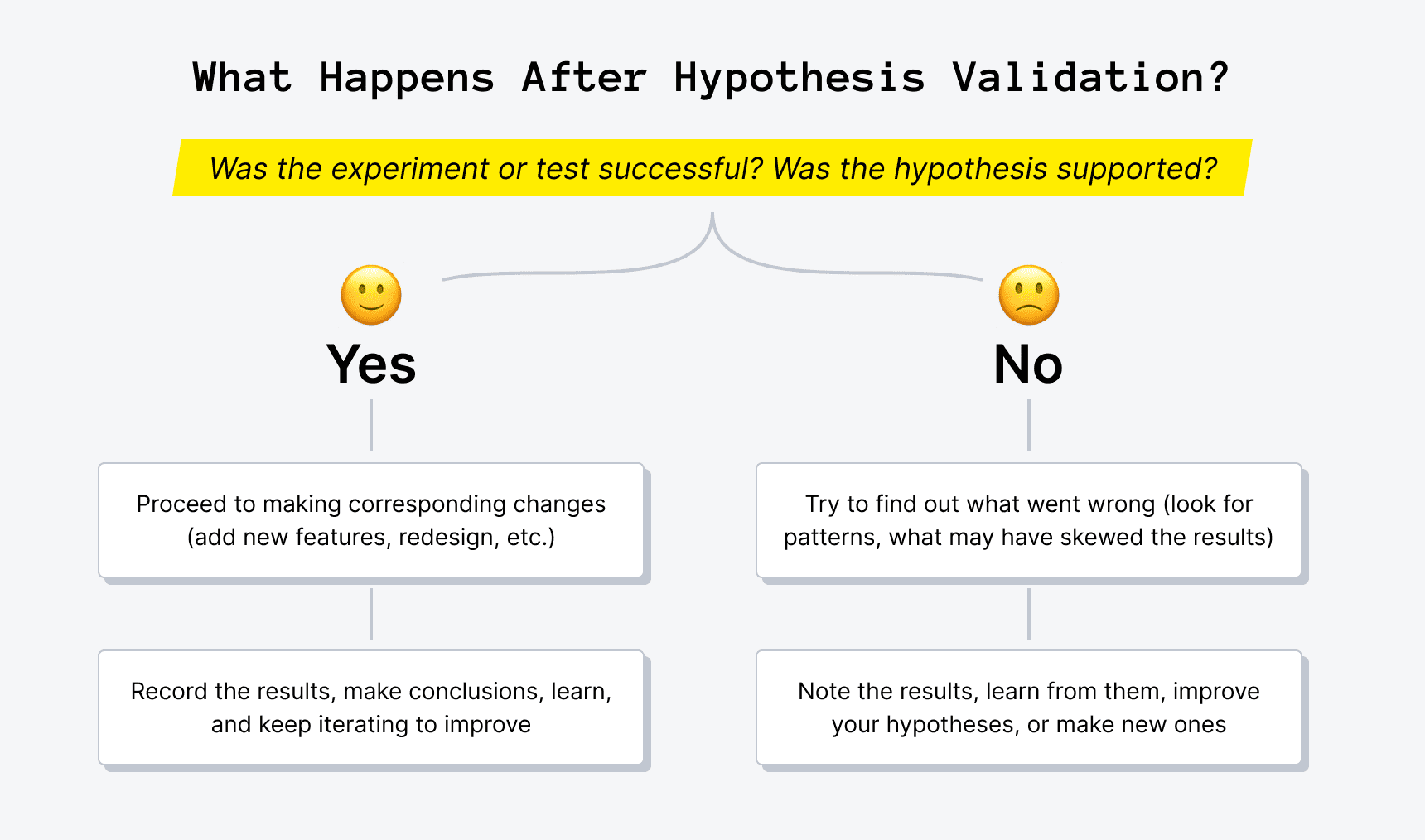

If the test results are negative: It may not be worth investigating this motivational barrier any further on this page. In this case, you could return to the data and look at the other motivational barriers that might be affecting user behavior.

If the test results are positive: You might want to continue to refine the clarity of the page’s message with further testing.
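One way to decide which of these branches applies (an assumption on our part; the post doesn't prescribe a statistical method) is to compute a confidence interval for the difference in conversion rates between control and variant. An interval entirely above zero reads as positive, one entirely below zero as negative, and one that straddles zero as inconclusive. The sketch below uses the simple Wald interval, which is only a reasonable approximation with large samples; the function `classify_result` and the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def classify_result(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Compare variant B with control A using a Wald interval for p_b - p_a.

    Returns ("positive" | "negative" | "inconclusive", (low, high)).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    # Unpooled standard error of the difference between two proportions
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    low, high = diff - z * se, diff + z * se
    if low > 0:
        return "positive", (low, high)
    if high < 0:
        return "negative", (low, high)
    return "inconclusive", (low, high)


# Hypothetical counts: 200/5000 control conversions vs 260/5000 for the variant
label, interval = classify_result(200, 5000, 260, 5000)
print(label, interval)
```

Reporting the interval, not just the label, also shows how large the effect might plausibly be, which helps when deciding whether a theme is worth further tests.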

Typically, a test will start with a broad idea — you identify the changes to make, predict how those changes will impact your conversion goal, and write it out as a broad theme as shown above. Then, repeated tests aimed at that theme will confirm or undermine the strength of the underlying insight.

You believe you’ve identified an overall problem on your landing page (there’s a problem with clarity). Now you want to understand how individual elements contribute to the problem, and the effect these individual elements have on your users.

It’s game time – now you can start designing a hypothesis that will generate insights.

You believe your users need more clarity. You’re ready to dig deeper to find out if that’s true!

If a specific question needs answering, you should structure your test to make a single change. This isolation might ask: “What element are users most sensitive to when it comes to the lack of clarity?” and “What changes do I believe will support increasing clarity?”

At this point, you’ll want to boil down your overarching theme…

- Improving the clarity of the page will reduce confusion and improve [conversion goal].

…into a quantifiable hypothesis that isolates key sections:

- Changing the wording of this CTA to set expectations for users (from “submit” to “send demo request”) will reduce confusion about the next steps in the funnel and improve order completions.
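Because this hypothesis predicts a direction (the new wording will improve order completions), it maps naturally onto a one-sided test. A common choice for comparing two conversion rates is the pooled two-proportion z-test. The sketch below assumes large samples and independent visitors; the function name and the counts are illustrative only, not results from a real test.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_control, n_control, conv_variant, n_variant):
    """One-sided pooled z-test.

    H0: the variant converts no better than the control.
    H1: the variant converts better than the control.
    Returns (z statistic, one-sided p-value).
    """
    p_control = conv_control / n_control
    p_variant = conv_variant / n_variant
    # Under H0 both groups share one rate, so pool the data to estimate it
    pooled = (conv_control + conv_variant) / (n_control + n_variant)
    se = sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_variant))
    z = (p_variant - p_control) / se
    p_value = 1 - NormalDist().cdf(z)  # upper tail only: H1 is directional
    return z, p_value


# Hypothetical: "Submit" 200 completions / 5000 visitors,
# "Send demo request" 260 completions / 5000 visitors
z, p = two_proportion_z_test(200, 5000, 260, 5000)
print(f"z = {z:.2f}, one-sided p = {p:.4f}")
```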

Does this answer what works? Yes: changing the wording on your CTA.

Does this answer why it works? Yes: reducing confusion about the next steps in the funnel.

Does this answer where it works? Yes: on this page, before the user enters this theoretical funnel.

Does this answer who it works on? No, this question demands another isolation. You might structure your hypothesis more like this:

- Changing the wording of the CTA to set expectations for users (from “submit” to “send demo request”) will reduce confusion for visitors coming from my email campaign about the next steps in the funnel and improve order completions.
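Answering ‘who it works on’ means analyzing only the segment the hypothesis names. A minimal sketch, assuming each visit is logged as a record with hypothetical `source`, `variant` and `converted` fields, that tallies conversions per variant for email-campaign visitors only. Ideally the segment is fixed before the test starts, so it isn't chosen after peeking at the results.

```python
from collections import defaultdict


def conversions_by_variant(visits, source):
    """Tally (conversions, visitors) per variant for visits from one traffic source."""
    totals = defaultdict(lambda: [0, 0])
    for visit in visits:
        if visit["source"] != source:
            continue  # outside the segment the hypothesis is about
        counts = totals[visit["variant"]]
        counts[0] += int(visit["converted"])
        counts[1] += 1
    return {variant: (conv, n) for variant, (conv, n) in totals.items()}


# Hypothetical visit log; field names are illustrative
visits = [
    {"source": "email", "variant": "Submit", "converted": False},
    {"source": "email", "variant": "Send demo request", "converted": True},
    {"source": "paid_search", "variant": "Send demo request", "converted": False},
    {"source": "email", "variant": "Send demo request", "converted": False},
]
print(conversions_by_variant(visits, "email"))
```

The resulting per-variant counts for the segment are what a significance test would then compare.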

Now we’ve got a clear hypothesis. And one worth testing!

1. It’s testable.

2. It addresses conversion barriers.

3. It aims at gaining marketing insights.

Let’s compare:

The original idea: “My page needs a new CTA.”

Following the hypothesis structure: “A new CTA on my page will increase [conversion goal].”

The first test implied a problem with clarity and provided a potential theme: “Improving the clarity of the page will reduce confusion and improve [conversion goal].”

The potential clarity theme leads to a new hypothesis: “Changing the wording of the CTA to set expectations for users (from “submit” to “send demo request”) will reduce confusion about the next steps in the funnel and improve order completions.”

Final refined hypothesis: “Changing the wording of the CTA to set expectations for users (from “submit” to “send demo request”) will reduce confusion for visitors coming from my email campaign about the next steps in the funnel and improve order completions.”

Which test would you rather your team invest in?

Before you start your next test, take the time to do a proper analysis of the page you want to focus on. Do preliminary testing to define bigger issues, and use that information to refine and pinpoint your marketing hypothesis to give you forward-looking insights.

Doing this will help you avoid time-wasting tests, and enable you to start getting some insights for your team to keep testing!


The Craft of Writing a Strong Hypothesis


Writing a hypothesis is one of the essential elements of a scientific research paper. It needs to be to the point, clearly communicating what your research is trying to accomplish. A blurry, drawn-out, or complexly-structured hypothesis can confuse your readers. Or worse, the editor and peer reviewers.

A captivating hypothesis is not too intricate. This blog will take you through the process so that, by the end of it, you have a better idea of how to convey your research paper's intent in just one sentence.

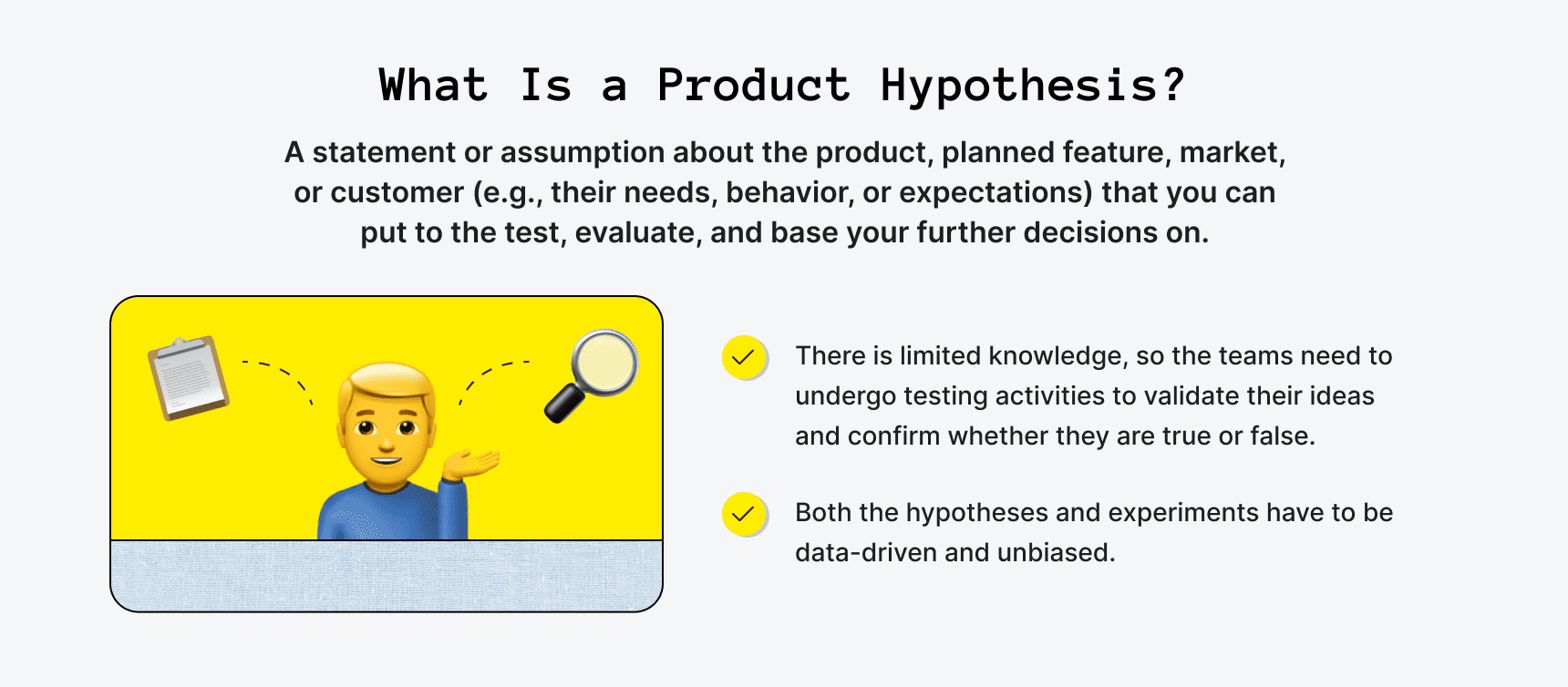

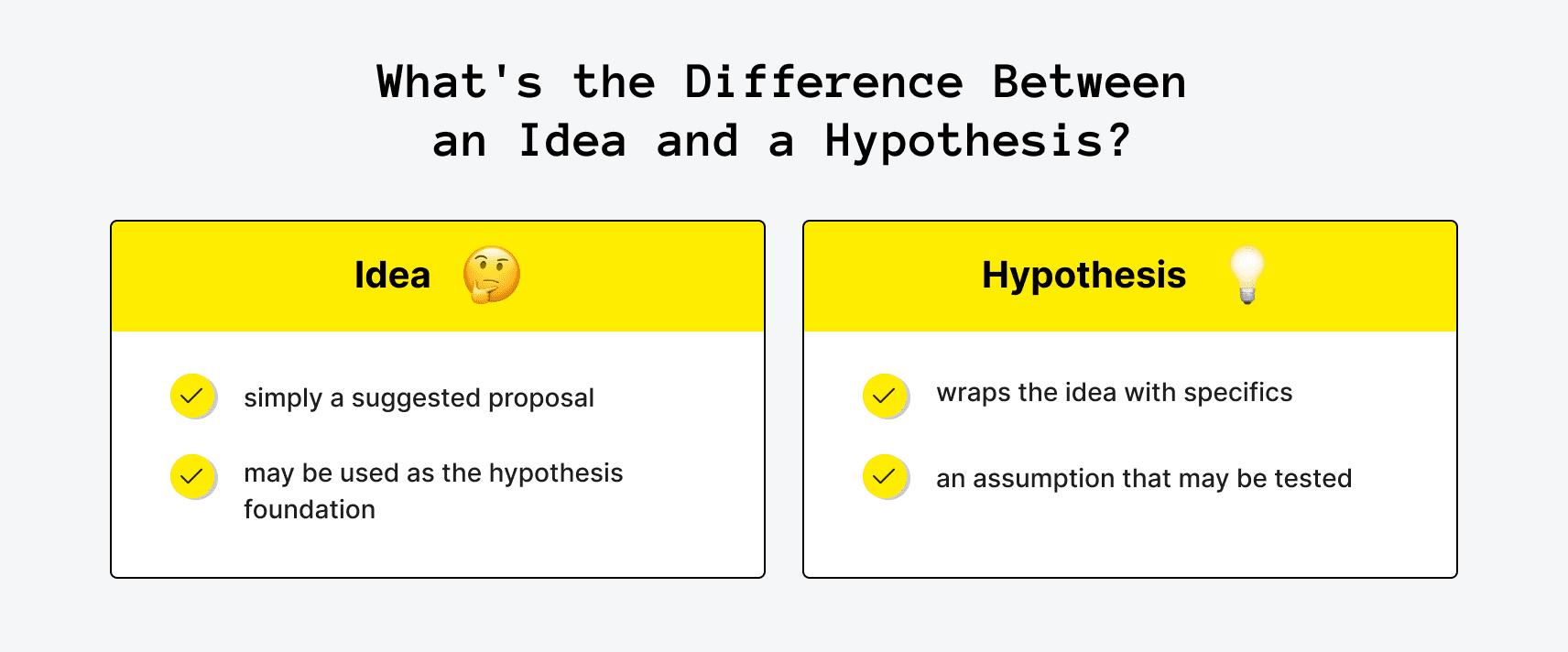

What is a Hypothesis?

The first step in your scientific endeavor, a hypothesis, is a strong, concise statement that forms the basis of your research. It is not the same as a thesis statement, which is a brief summary of your research paper.

The purpose of a hypothesis is to predict your paper's findings, data, and conclusion. It comes from a place of curiosity and intuition. When you write a hypothesis, you're essentially making an educated guess based on prior knowledge and evidence, which is then supported or refuted through the scientific method.

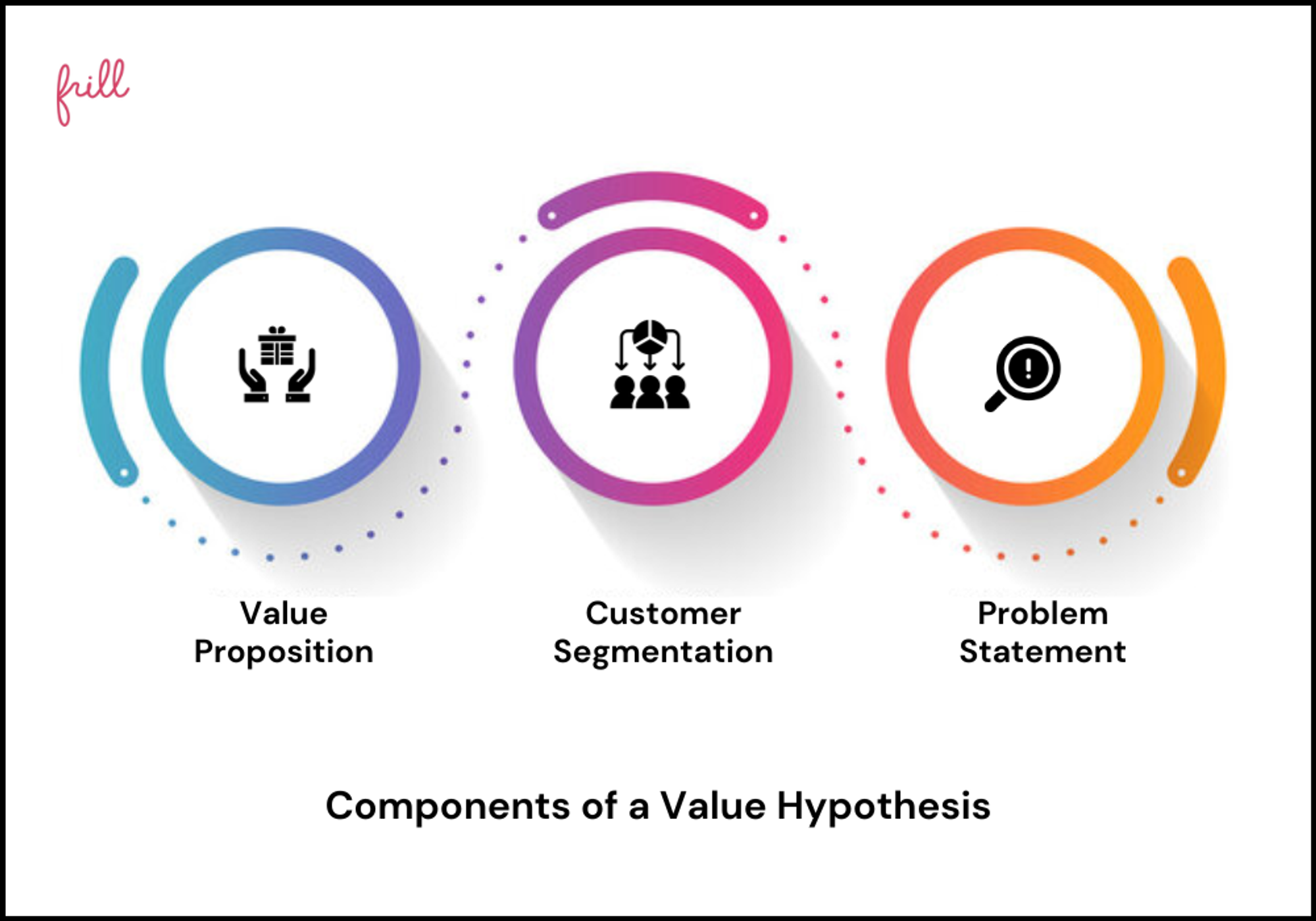

The reason for undertaking research is to observe a specific phenomenon, and a hypothesis lays out what that phenomenon is. It does so through two variables: an independent variable and a dependent variable.

The independent variable is the cause behind the observation, while the dependent variable is the effect of the cause. A good example of this is “mixing red and blue forms purple.” In this hypothesis, mixing red and blue is the independent variable, as you're combining the two colors at your own will. The formation of purple is the dependent variable, as it is conditional on the independent variable.

Different Types of Hypotheses


Some would argue that there are only two types of hypotheses: a Null hypothesis and an Alternative hypothesis. While there is some truth to that, the other terms come up so often that it is worth distinguishing the most common forms so you aren't left without context.

Apart from Null and Alternative, there are Complex, Simple, Directional, Non-Directional, Empirical, Statistical, and Associative and Causal hypotheses. They aren't mutually exclusive, as one hypothesis can tick many boxes, but knowing the distinctions between them will make it easier for you to construct your own.

1. Null hypothesis

A null hypothesis proposes no relationship between two variables. Denoted by H0, it is a negative statement like “Attending physiotherapy sessions does not affect athletes' on-field performance.” Here, the author claims physiotherapy sessions have no effect on on-field performance; any difference observed in the data is attributed to chance.

2. Alternative hypothesis

Considered the opposite of a null hypothesis, an alternative hypothesis is denoted as H1 or Ha. It explicitly states that the independent variable affects the dependent variable. A good alternative hypothesis example is “Attending physiotherapy sessions improves athletes' on-field performance.” or “Water boils at 100 °C at sea-level pressure.” The alternative hypothesis further branches into directional and non-directional.

- Directional hypothesis: A hypothesis that states whether the effect will be positive or negative is called a directional hypothesis. Its H1 is written with either the ‘<’ or ‘>’ sign.

- Non-directional hypothesis: A non-directional hypothesis only claims an effect on the dependent variable, without specifying whether it will be positive or negative. Its H1 is written with the ‘≠’ sign.
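The choice between a directional and a non-directional H1 changes how the p-value is computed. A minimal sketch, assuming a test statistic that follows a standard normal distribution under H0 (other tests use other reference distributions); the function name `p_value` is illustrative:

```python
from statistics import NormalDist


def p_value(z, alternative):
    """p-value of a z statistic for each form of the alternative hypothesis."""
    std_normal = NormalDist()  # mean 0, standard deviation 1
    if alternative == "greater":    # directional, H1 uses '>'
        return 1 - std_normal.cdf(z)
    if alternative == "less":       # directional, H1 uses '<'
        return std_normal.cdf(z)
    if alternative == "two-sided":  # non-directional, H1 uses '≠'
        return 2 * (1 - std_normal.cdf(abs(z)))
    raise ValueError(f"unknown alternative: {alternative!r}")


z = 1.8
for alternative in ("greater", "two-sided"):
    print(alternative, round(p_value(z, alternative), 4))
```

With z = 1.8 the one-sided p-value is about 0.036 and the two-sided one about 0.072, so the same data can clear a 0.05 threshold under a directional H1 but not under a non-directional one. This is why the direction should be chosen before looking at the data.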

3. Simple hypothesis

A simple hypothesis is a statement made to reflect the relation between exactly two variables: one independent and one dependent. Consider the example “Smoking is a prominent cause of lung cancer.” The dependent variable, lung cancer, depends on the independent variable, smoking.

4. Complex hypothesis

In contrast to a simple hypothesis, a complex hypothesis implies a relationship between multiple independent and dependent variables. For instance, “Individuals who eat more fruits tend to have higher immunity, lower cholesterol, and higher metabolism.” The independent variable is eating more fruits, while the dependent variables are higher immunity, lower cholesterol, and higher metabolism.

5. Associative and causal hypothesis

Associative and causal hypotheses don't specify how many variables there will be. Instead, they define the relationship between the variables. In an associative hypothesis, changing any one variable, dependent or independent, affects the others. In a causal hypothesis, the independent variable directly affects the dependent variable.

6. Empirical hypothesis

Also referred to as the working hypothesis, an empirical hypothesis is one whose validity is assessed through experiments and observation. This grounds the statement in evidence and distinguishes it from a wild guess.

Say the hypothesis is “Women who take iron tablets face a lesser risk of anemia than those who take vitamin B12.” This is an example of an empirical hypothesis, where the researcher formulates the statement after assessing a group of women who take iron tablets and charting the findings.

7. Statistical hypothesis

The point of a statistical hypothesis is to test an existing claim by studying a sample of the population. A hypothesis like “44% of the Indian population belong to the age group of 22-27” uses evidence from a sample to support or reject a particular statement.
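A statistical hypothesis about a population proportion, like the age-group example, can be checked against a sample with a one-sample z-test for a proportion. A minimal sketch, assuming a simple random sample large enough for the normal approximation; the function name is illustrative, and the sample counts are invented and say nothing about the real population.

```python
from math import sqrt
from statistics import NormalDist


def one_sample_proportion_test(successes, n, p0):
    """Two-sided z-test of H0: population proportion == p0.

    Returns (sample proportion, z statistic, p-value).
    """
    p_hat = successes / n
    se = sqrt(p0 * (1 - p0) / n)  # standard error assuming H0 is true
    z = (p_hat - p0) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_hat, z, p_value


# Hypothetical sample: 400 of 1,000 sampled people fall in the 22-27 age group
p_hat, z, p = one_sample_proportion_test(400, 1000, 0.44)
print(f"sample proportion = {p_hat:.2f}, z = {z:.2f}, p = {p:.4f}")
```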

Characteristics of a Good Hypothesis

Writing a hypothesis is essential as it can make or break your research for you. That includes your chances of getting published in a journal. So when you're designing one, keep an eye out for these pointers:

- A research hypothesis has to be simple yet clear enough to be justifiable.

- It has to be testable: your research would be rendered pointless if the hypothesis is too far-fetched or cannot be tested with current technology.

- It has to be precise about the results: what you are trying to do and achieve should come through in your hypothesis.

- A research hypothesis should be self-explanatory, leaving no doubt in the reader's mind.

- If you are developing a relational hypothesis, you need to include the variables and establish an appropriate relationship among them.

- A hypothesis must leave scope for further investigations and experiments.

Separating a Hypothesis from a Prediction

Outside of academia, hypothesis and prediction are often used interchangeably. In research writing, this is not only confusing but also incorrect. And although a hypothesis and prediction are guesses at their core, there are many differences between them.

A hypothesis is an educated guess or even a testable prediction validated through research. It aims to analyze the gathered evidence and facts to define a relationship between variables and put forth a logical explanation behind the nature of events.

Predictions are assumptions or expected outcomes made without any backing evidence. They are more speculative, regardless of where they originate.

For this reason, a hypothesis holds much more weight than a prediction. It sticks to the scientific method rather than pure guesswork. "Planets revolve around the Sun." is an example of a hypothesis, as it is based on previous knowledge and observed trends. Additionally, we can test it through the scientific method.

By contrast, "COVID-19 will be eradicated by 2030." is a prediction. Even though it draws on past trends, we can't prove or disprove it now. The only way to validate it is to wait and see whether COVID-19 cases end by 2030.

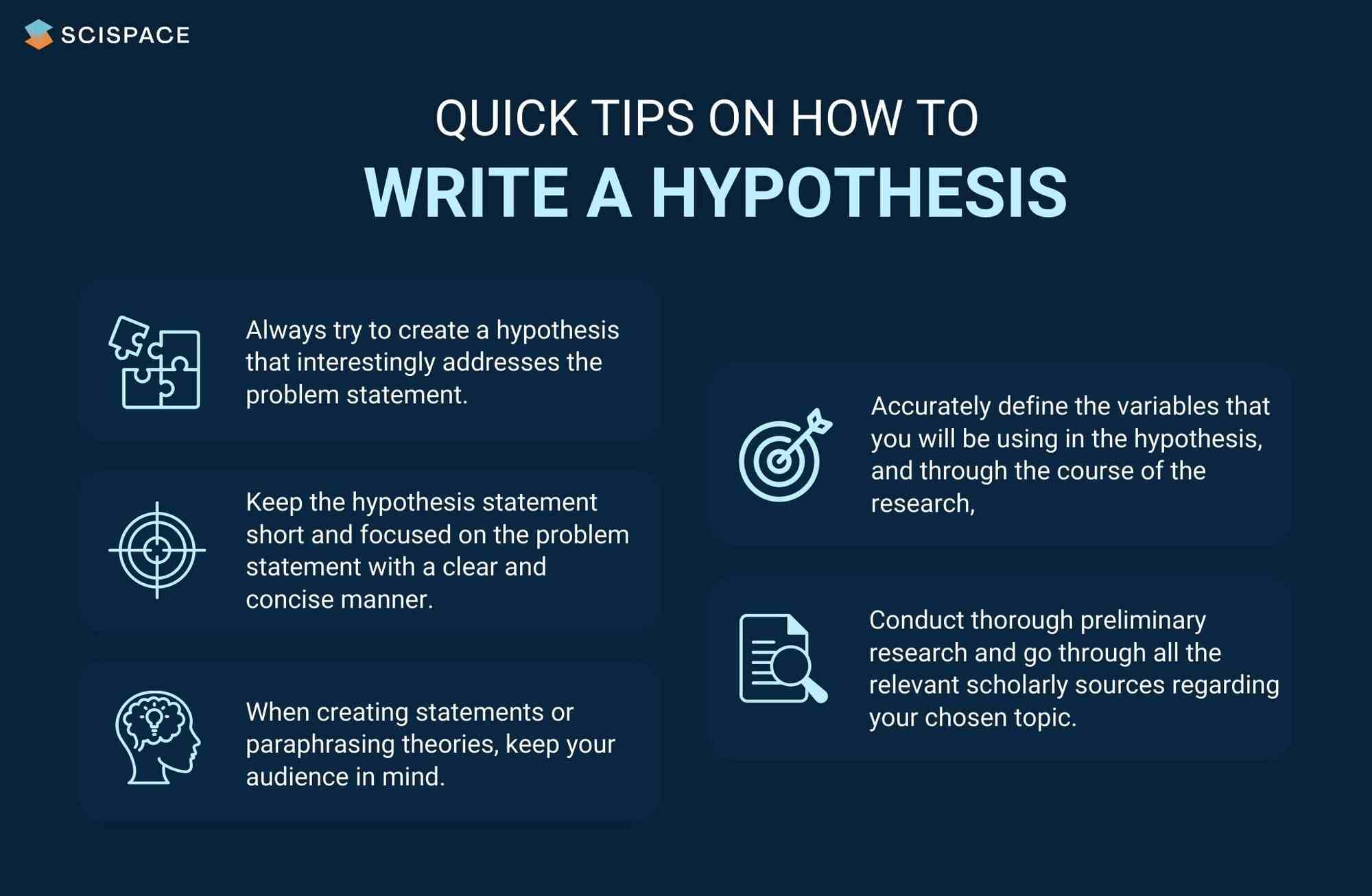

Finally, How to Write a Hypothesis

Quick tips on writing a hypothesis

1. Be clear about your research question

A hypothesis should directly address the research question or the problem statement. To do so, you first need to ask a question. Understand the constraints of your research topic and then formulate a simple, topic-centric problem. Only after that can you develop a hypothesis and test it for evidence.

2. Carry out a recce

Once you have your research's foundation laid out, it would be best to conduct preliminary research. Go through previous theories, academic papers, data, and experiments before you start curating your research hypothesis. It will give you an idea of your hypothesis's viability or originality.

Making use of references from relevant research papers helps you draft a good research hypothesis. SciSpace Discover offers a repository of over 270 million research papers to browse through and gain a deeper understanding of related studies on a particular topic. Additionally, you can use SciSpace Copilot, your AI research assistant, to read any lengthy research paper and get a summarized version of it. A hypothesis can be formed after evaluating many such summarized papers. Copilot also explains theories and equations, presents papers in simplified form, and lets you highlight any text or clip math equations and tables for a deeper, clearer understanding of what is being said. This can improve your hypothesis by helping you identify potential research gaps.

3. Create a 3-dimensional hypothesis

Variables are an essential part of any reasonable hypothesis. So, identify your independent and dependent variable(s) and define the relationship between them. One way to do this is to write the hypothetical assumption in ‘if-then’ form. If you use this form, make sure that you state the expected relationship between the variables.

Alternatively, you can present your hypothesis as a comparison between two groups. Here, you must specify the difference you expect to observe in the results.

4. Write the first draft

Now that everything is in place, it's time to write your hypothesis. For starters, create the first draft. In this version, write what you expect to find from your research.

Clearly separate your independent and dependent variables and the link between them. Don't fixate on syntax at this stage. The goal is to ensure your hypothesis addresses the issue.

5. Proof your hypothesis

After preparing the first draft of your hypothesis, you need to inspect it thoroughly. It should tick all the boxes, like being concise, straightforward, relevant, and accurate. Your final hypothesis has to be well-structured as well.

Research projects are an exciting and crucial part of being a scholar. And once you have your research question, you need a great hypothesis to begin conducting research. Thus, knowing how to write a hypothesis is very important.

Now that you have a firmer grasp on what a good hypothesis constitutes, the different kinds there are, and what process to follow, you will find it much easier to write your hypothesis, which ultimately helps your research.


Frequently Asked Questions (FAQs)

1. What is the definition of a hypothesis?

According to the Oxford dictionary, a hypothesis is defined as “An idea or explanation of something that is based on a few known facts, but that has not yet been proved to be true or correct”.

2. What is an example of hypothesis?

The hypothesis is a statement that proposes a relationship between two or more variables. An example: "If we increase the number of new users who join our platform by 25%, then we will see an increase in revenue."

3. What is an example of null hypothesis?

A null hypothesis is a statement that there is no relationship between two variables. The null hypothesis is written as H0. The null hypothesis states that there is no effect. For example, if you're studying whether or not a particular type of exercise increases strength, your null hypothesis will be "there is no difference in strength between people who exercise and people who don't."

4. What are the types of research?

• Fundamental research

• Applied research

• Qualitative research

• Quantitative research

• Mixed research

• Exploratory research

• Longitudinal research

• Cross-sectional research

• Field research

• Laboratory research

• Fixed research

• Flexible research

• Action research

• Policy research

• Classification research

• Comparative research

• Causal research

• Inductive research

• Deductive research

5. How to write a hypothesis?

• Your hypothesis should be able to predict the relationship and outcome.

• Avoid wordiness by keeping it simple and brief.

• Your hypothesis should contain observable and testable outcomes.

• Your hypothesis should be relevant to the research question.

6. What are the 2 types of hypothesis?

• Null hypotheses are used to test the claim that "there is no difference between two groups of data".

• Alternative hypotheses test the claim that "there is a difference between two data groups".

7. Difference between research question and research hypothesis?

A research question is a broad, open-ended question you will try to answer through your research. A hypothesis is a statement, based on prior research or theory, that you expect your study to support. Example - Research question: What are the factors that influence the adoption of the new technology? Research hypothesis: Age, education level, and income level are positively related to the adoption of the new technology.

8. What is plural for hypothesis?

The plural of hypothesis is hypotheses. Here's an example of how it would be used in a statement, "Numerous well-considered hypotheses are presented in this part, and they are supported by tables and figures that are well-illustrated."

9. What is the Red Queen hypothesis?

The Red Queen hypothesis in evolutionary biology states that species must constantly evolve to avoid extinction, because if they don't, they will be outcompeted by other species that are evolving. Leigh Van Valen first proposed it in 1973, and it has since been widely studied and tested.

10. Who is known as the father of null hypothesis?

Sir Ronald Fisher is widely regarded as the father of the null hypothesis. His 1925 book Statistical Methods for Research Workers popularized significance testing, and he introduced the term “null hypothesis” in The Design of Experiments (1935).

11. When to reject null hypothesis?

To reject the null hypothesis, you need to find a statistically significant difference between your two groups. You can determine that by running statistical tests such as an independent-samples t-test or a dependent (paired) samples t-test. You reject the null hypothesis if the p-value is below your chosen significance level, which is commonly set at 0.05.
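The decision rule above (reject H0 when p is below the significance level) can be sketched without any statistics library. Note the swap: instead of the t-tests named in the answer, this uses a permutation test for a difference in means between two independent groups, which makes fewer distributional assumptions. The strength scores are hypothetical, echoing the exercise example earlier in these FAQs, and the function name is illustrative.

```python
import random
from statistics import mean


def permutation_test(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in means.

    H0: both groups come from the same distribution, so the group labels
    are exchangeable. Returns the observed difference and an estimated p-value.
    """
    rng = random.Random(seed)  # fixed seed makes the estimate reproducible
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # randomly reassign the group labels
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # +1 in numerator and denominator counts the observed labelling itself
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value


exercise = [62, 70, 68, 75, 71, 66, 73, 69]     # hypothetical strength scores
no_exercise = [58, 61, 64, 60, 63, 59, 65, 62]
alpha = 0.05
diff, p = permutation_test(exercise, no_exercise)
print(f"difference = {diff:.2f}, p = {p:.4f}, reject H0: {p < alpha}")
```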


How to Write a Strong Hypothesis | Steps & Examples

Published on May 6, 2022 by Shona McCombes. Revised on November 20, 2023.

A hypothesis is a statement that can be tested by scientific research. If you want to test a relationship between two or more variables, you need to write hypotheses before you start your experiment or data collection.

Example: Hypothesis

Daily apple consumption leads to fewer doctor’s visits.


A hypothesis states your predictions about what your research will find. It is a tentative answer to your research question that has not yet been tested. For some research projects, you might have to write several hypotheses that address different aspects of your research question.

A hypothesis is not just a guess – it should be based on existing theories and knowledge. It also has to be testable, which means you can support or refute it through scientific research methods (such as experiments, observations and statistical analysis of data).

Variables in hypotheses

Hypotheses propose a relationship between two or more types of variables.

- An independent variable is something the researcher changes or controls.

- A dependent variable is something the researcher observes and measures.

If there are any control variables, extraneous variables, or confounding variables, be sure to jot those down as you go to minimize the chances that research bias will affect your results.

For example, if you hypothesize that daily exposure to the sun leads to increased happiness, the independent variable is exposure to the sun (the assumed cause) and the dependent variable is the level of happiness (the assumed effect).


Step 1. Ask a question

Writing a hypothesis begins with a research question that you want to answer. The question should be focused, specific, and researchable within the constraints of your project.

Step 2. Do some preliminary research

Your initial answer to the question should be based on what is already known about the topic. Look for theories and previous studies to help you form educated assumptions about what your research will find.

At this stage, you might construct a conceptual framework to ensure that you’re embarking on a relevant topic. This can also help you identify which variables you will study and what you think the relationships are between them. Sometimes, you’ll have to operationalize more complex constructs.

Step 3. Formulate your hypothesis

Now you should have some idea of what you expect to find. Write your initial answer to the question in a clear, concise sentence.

Step 4. Refine your hypothesis

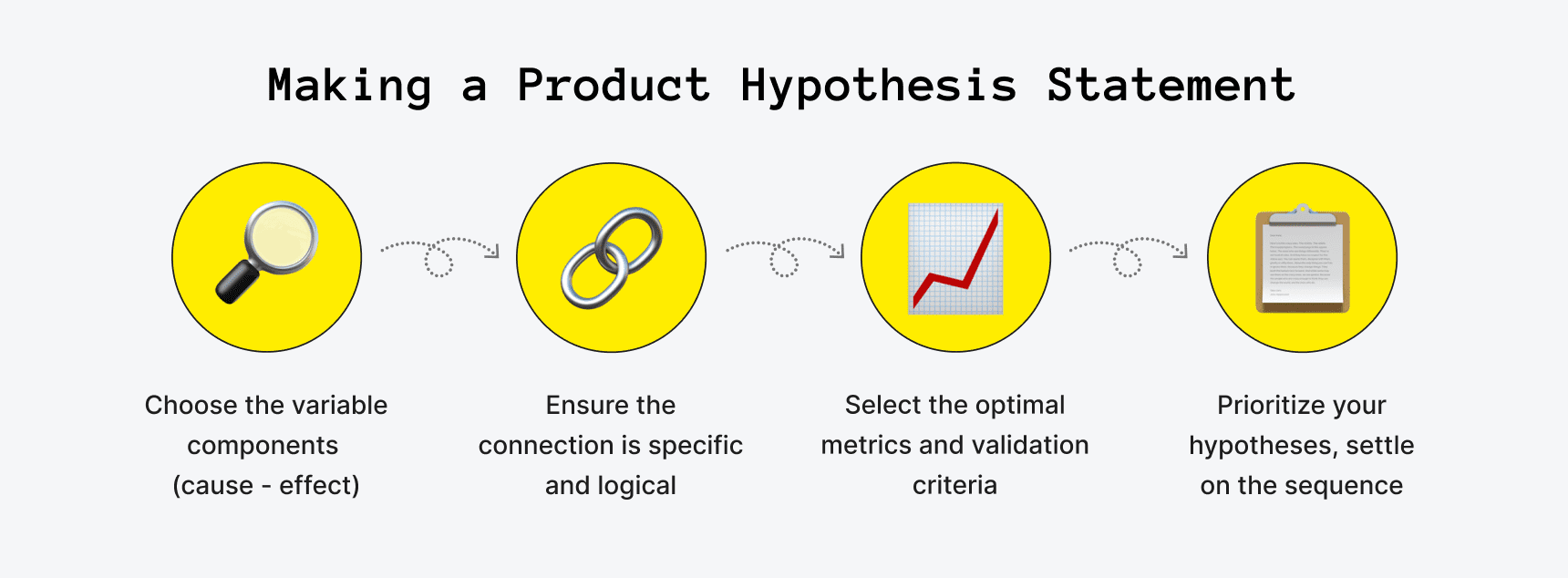

You need to make sure your hypothesis is specific and testable. There are various ways of phrasing a hypothesis, but all the terms you use should have clear definitions, and the hypothesis should contain:

- The relevant variables

- The specific group being studied

- The predicted outcome of the experiment or analysis

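Step 5. Phrase your hypothesis in three ways

To identify the variables, you can write a simple prediction in if…then form. The first part of the sentence states the independent variable and the second part states the dependent variable. For example: “If a first-year student attends more lectures, then their final exam score will improve.”

In academic research, hypotheses are more commonly phrased in terms of correlations or effects, where you directly state the predicted relationship between variables. For example: “The number of lectures attended by first-year students has a positive effect on their final exam scores.”

If you are comparing two groups, the hypothesis can state what difference you expect to find between them. For example: “First-year students who attend most lectures will have better final exam scores than those who attend few lectures.”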

Step 6. Write a null hypothesis

If your research involves statistical hypothesis testing, you will also have to write a null hypothesis. The null hypothesis is the default position that there is no association between the variables. The null hypothesis is written as H0, while the alternative hypothesis is H1 or Ha.

- H0: The number of lectures attended by first-year students has no effect on their final exam scores.

- H1: The number of lectures attended by first-year students has a positive effect on their final exam scores.
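One way to make this pair of hypotheses precise, assuming exam scores are modeled with a simple linear regression on the number of lectures attended (the article does not specify a model), is to state both in terms of the slope $\beta_1$, with intercept $\beta_0$ and error term $\varepsilon_i$ for student $i$:

```latex
\begin{aligned}
\text{score}_i &= \beta_0 + \beta_1\,\text{lectures}_i + \varepsilon_i \\
H_0 &: \beta_1 = 0 \quad \text{(attendance has no effect on exam scores)} \\
H_1 &: \beta_1 > 0 \quad \text{(attendance has a positive effect on exam scores)}
\end{aligned}
```

Because H1 claims a positive effect, it is directional, so the test of $\beta_1$ would be one-sided.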

| Research question | Hypothesis | Null hypothesis |
|---|---|---|
| What are the health benefits of eating an apple a day? | Increasing apple consumption in over-60s will result in decreasing frequency of doctor’s visits. | Increasing apple consumption in over-60s will have no effect on frequency of doctor’s visits. |
| Which airlines have the most delays? | Low-cost airlines are more likely to have delays than premium airlines. | Low-cost and premium airlines are equally likely to have delays. |
| Can flexible work arrangements improve job satisfaction? | Employees who have flexible working hours will report greater job satisfaction than employees who work fixed hours. | There is no relationship between working hour flexibility and job satisfaction. |
| How effective is high school sex education at reducing teen pregnancies? | Teenagers who received sex education lessons throughout high school will have lower rates of unplanned pregnancy than teenagers who did not receive any sex education. | High school sex education has no effect on teen pregnancy rates. |
| What effect does daily use of social media have on the attention span of under-16s? | There is a negative correlation between time spent on social media and attention span in under-16s. | There is no relationship between social media use and attention span in under-16s. |


A hypothesis is not just a guess — it should be based on existing theories and knowledge. It also has to be testable, which means you can support or refute it through scientific research methods (such as experiments, observations and statistical analysis of data).

Null and alternative hypotheses are used in statistical hypothesis testing. The null hypothesis of a test always predicts no effect or no relationship between variables, while the alternative hypothesis states your research prediction of an effect or relationship.

Hypothesis testing is a formal procedure for investigating our ideas about the world using statistics. It is used by scientists to test specific predictions, called hypotheses, by calculating how likely it is that a pattern or relationship between variables could have arisen by chance.

Cite this Scribbr article


McCombes, S. (2023, November 20). How to Write a Strong Hypothesis | Steps & Examples. Scribbr. Retrieved September 4, 2024, from https://www.scribbr.com/methodology/hypothesis/


Shona McCombes


Expert Advice on Developing a Hypothesis for Marketing Experimentation


Simbar Dube

Every marketing experimentation process has to have a solid hypothesis.

That’s a must – unless you want to be roaming in the dark and heading towards a dead-end in your experimentation program.

Hypothesizing is the second phase of our SHIP optimization process here at Invesp.

It comes after we have completed the research phase.

This means we don’t just pull a hypothesis out of thin air. We always make sure that it is based on research data.

But a research-backed hypothesis won’t always be correct. In fact, many hypotheses produce inconclusive results or get disproved.

The main idea of having a hypothesis in marketing experimentation is to help you gain insights – regardless of the testing outcome.

By the time you finish reading this article, you’ll know:

- The essential tips on what to do when crafting a hypothesis for marketing experiments

- How a marketing experiment hypothesis works

- How experts develop a solid hypothesis

The basics: marketing experimentation hypothesis

A hypothesis is a research-based statement that aims to explain an observed trend and create a solution that will improve the result. This statement is an educated, testable prediction about what will happen.

It has to be stated in declarative form and not as a question.

“If we add magnification info, a product video, and virtual mirror buttons, will that improve engagement?” is not declarative, but “Improving the experience of product pages by adding magnification info, a product video, and virtual mirror buttons will increase engagement” is.

Here are a few templates showing how a hypothesis should be phrased:

- Replacing ___ with ___ will increase [conversion goal] by [%], because:

- Removing ___ and ___ will decrease [conversion goal] by [%], because:

- Changing ___ into ___ will not affect [conversion goal], because:

- Improving ___ by ___ will increase [conversion goal], because:

As you can see from the above sentences, a good hypothesis is written in clear and simple language. Reading your hypothesis should tell your team members exactly what you thought was going to happen in an experiment.

Another important element of a good hypothesis is that it defines the variables in easy-to-measure terms, like who the participants are, what changes during the testing, and what the effect of the changes will be:

Example: Let’s say this is our hypothesis:

Displaying full-look items on every “continue shopping & view your bag” pop-up and highlighting the value of having a full look will improve the visibility of a full look, encourage visitors to add multiple items from the same look, and increase average order value and quantity through cross-selling by 3%.

Who the participants are:

Visitors.

What changes during the test:

Displaying full-look items on every “continue shopping & view your bag” pop-up and highlighting the value of having a full look…

What the effect of the changes will be:

It will improve the visibility of a full look, encourage visitors to add multiple items from the same look, and increase average order value and quantity through cross-selling by 3%.

Don’t bite off more than you can chew! Answering some scientific questions can involve more than one experiment, each with its own hypothesis, so make sure your hypothesis is a specific statement relating to a single experiment.

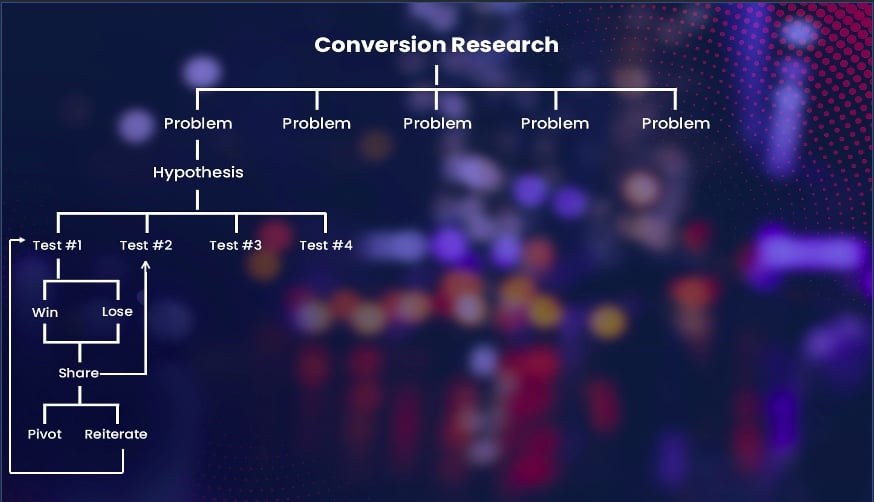

How a Marketing Experimentation Hypothesis Works

Assume that you have done conversion research and identified a list of issues (UX or conversion-related problems) and potential revenue opportunities on the site. The next thing you’d want to do is prioritize the issues and determine which ones will most impact the bottom line.

Having ranked the issues, you need to test them to determine which solutions work best. At this point, you don’t yet have a clear solution for the problems identified. So, to get better results and avoid wasting traffic on poor test designs, you need to make sure your testing plan has clear direction.

This is where a hypothesis comes into play.

For every problem you aim to address, you need to craft a hypothesis, unless the problem is a technical issue that can be fixed right away without the need to hypothesize or test.

One important thing you should note about an experimentation hypothesis is that it can be implemented in different ways.

This means that one hypothesis can have four or five different tests, as illustrated in the image above. Khalid Saleh, the Invesp CEO, explains:

“There are several ways that can be used to support one single hypothesis. Each and every way is a possible test scenario. And that means you also have to prioritize the test design you want to start with. Ultimately the name of the game is you want to find the idea that has the biggest possible impact on the bottom line with the least amount of effort. We use almost 18 different metrics to score all of those.”
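Khalid’s “biggest possible impact with the least amount of effort” rule can be sketched as a simple ranking of candidate test designs for one hypothesis. This is a hypothetical illustration, not Invesp’s scoring model: their roughly 18 metrics aren’t listed here, so the class name, the two 1-to-10 scores, and the impact-per-effort ratio are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class DesignOption:
    """One possible way of implementing a hypothesis (a test scenario)."""
    name: str
    impact: float  # hypothetical score: expected effect on the bottom line, 1 to 10
    effort: float  # hypothetical score: cost to design, build, and run, 1 to 10


def prioritize(designs: list[DesignOption]) -> list[DesignOption]:
    """Return designs sorted by impact per unit of effort, highest first."""
    return sorted(designs, key=lambda d: d.impact / d.effort, reverse=True)


# Hypothetical designs for one hypothesis, with made-up scores.
designs = [
    DesignOption("Move free offer above the fold", impact=7, effort=3),
    DesignOption("Redesign the whole landing page", impact=9, effort=9),
    DesignOption("Add a clear next-step CTA", impact=6, effort=2),
]

for design in prioritize(designs):
    print(f"{design.name}: {design.impact / design.effort:.2f}")
```

A real scoring model would weigh more factors; the point is only that every design gets a comparable score before you decide which test to run first.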

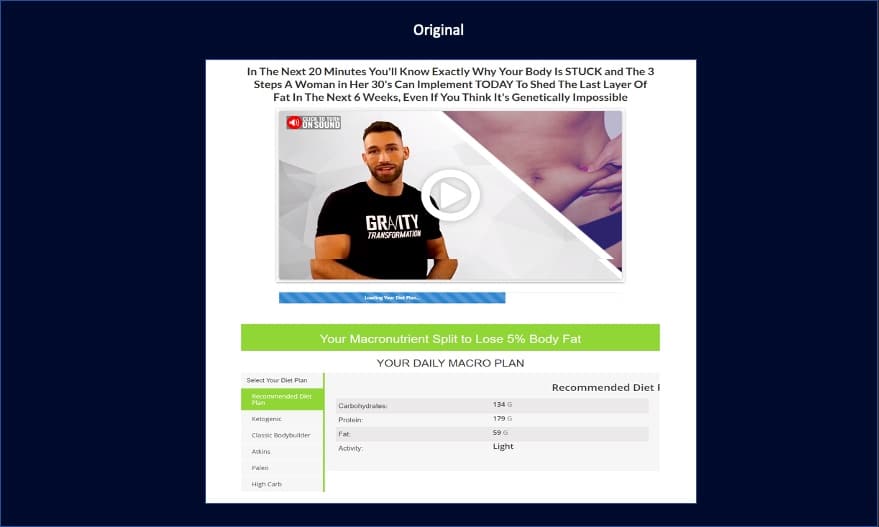

For one of our recent tests, after watching video recordings, viewing heatmaps, and conducting expert reviews, we noticed that:

- Visitors were scrolling to the bottom of the page to fill out a calculator to get a free diet plan.

- The brand was missing.

- There were too many free diet plans, which made it hard for visitors to choose and understand them.

- There was no value proposition on the page.

- The copy didn’t mention the benefits of the paid program.

- There was no clear CTA for the next action.

To help you understand, let’s look at how the original page looked before we worked on it:

Our aim was to make the shopping experience seamless for visitors and to make the page more appealing and less confusing. Here is how we phrased the hypothesis for the page above:

Improving the experience of opt-in landing pages by making the free offer accessible above the fold and highlighting the next action with a clear CTA will increase engagement with the offer and increase the conversion rate by 1%.

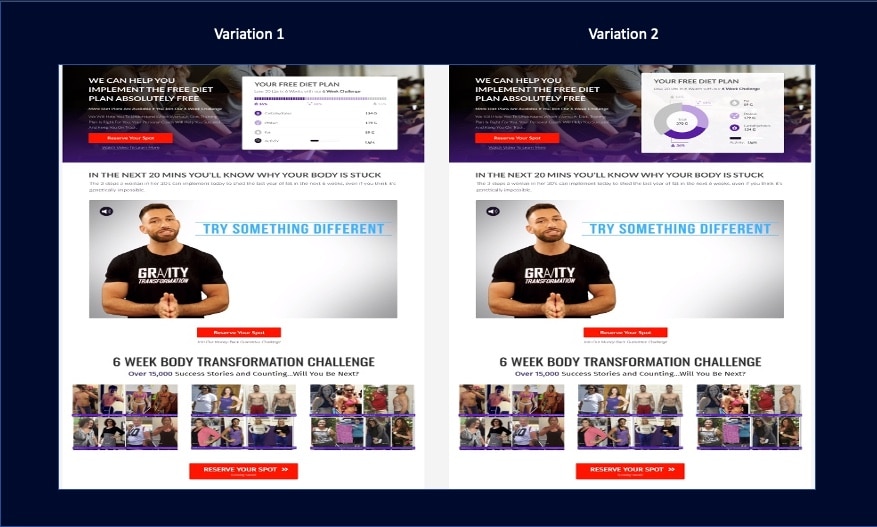

For this particular hypothesis, we had two design variations aligned to it:

The two designs above are different, but both are aligned to one hypothesis. This shows how one hypothesis can be implemented in different ways. Looking at the two variations above, which one do you think won?

Yes, you’re right, V2 was the winner.

Because there are many ways to implement one hypothesis, a failed test doesn’t necessarily mean that the hypothesis was wrong. Khalid adds:

“A single failure of a test doesn’t mean that the hypothesis is incorrect. Nine times out of ten it’s because of the way you’ve implemented the hypothesis. Look at the way you’ve coded and look at the copy you’ve used – you are more likely going to find something wrong with it. Always be open.”

So there are three things you should keep in mind when it comes to marketing experimentation hypotheses:

- It can take a while to fully test a hypothesis.

- A single failure doesn’t necessarily mean that the hypothesis is incorrect.

- Whether a hypothesis is proved or disproved, you can still learn something about your users.

It’s never easy to develop a hypothesis that informs future testing: it takes a lot of intense research behind the scenes and plenty of ideas to begin with. So, I reached out to six CRO experts for tips and advice on developing a solid hypothesis and what to include in it.

Maurice says that a solid hypothesis should have no more than one goal:

Maurice Beerthuyzen, CRO/CXO Lead at ClickValue:

“Creating a hypothesis doesn’t begin at the hypothesis itself. It starts with research. What do you notice in your data, customer surveys, and other sources? Do you understand what happens on your website? When you notice an opportunity it is tempting to base one single A/B test on one hypothesis. Create hypothesis A and run a single test, and then move forward to the next test. With another hypothesis. But it is very rare that you solve your problem with only one hypothesis. Often a test provides several other questions. Questions which you can solve with running other tests. But based on that same hypothesis! We should not come up with a new hypothesis for every test.

Another mistake that often happens is that we fill the hypothesis with multiple goals. Then we expect that the hypothesis will work on conversion rate, average order value, and/or Click Through Ratio. Of course, this is possible, but when you run your test, your hypothesis can only have one goal at once. And what if you have two goals? Just split the hypothesis then create a secondary hypothesis for your second goal. Every test has one primary goal. What if you find a winner on your secondary hypothesis? Rerun the test with the second hypothesis as the primary one.”

Jon believes that a strong hypothesis is built upon three pillars:

Jon MacDonald, President and Founder of The Good:

“Respond to an established challenge: The challenge must have a strong background based on data, and the background should state an established challenge that the test is looking to address. Example: ‘Sign up form lacks proof of value, incorrectly assuming if users are on the page, they already want the product.’

Propose a specific solution: What is the one, the single thing that is believed will address the stated challenge? Example: ‘Adding an image of the dashboard as a background to the signup form…’

State the assumed impact: The assumed impact should reference one specific, measurable optimization goal that was established prior to forming a hypothesis. Example: ‘…will increase signups.’

So, if your hypothesis doesn’t have a specific, measurable goal like ‘will increase signups,’ you’re not really stating a test hypothesis!”

Matt uses his own hypothesis builder to collate important data points into a single hypothesis.

Matt Beischel, Founder of Corvus CRO:

Like Jon, Matt breaks his hypothesis-writing process into three sections. Unlike Jon’s, Matt’s sections are Comprehension, Response, and Outcome.

“I set it up so that the names neatly match the ‘CRO.’ It’s a sort of ‘mad-libs’ style fill-in-the-blank where each input is an important piece of information for building out a robust hypothesis. I consider these the minimum required data points for a good hypothesis; if you can’t completely fill out the form, then you don’t have a good hypothesis. Here’s a breakdown of each data point:

Comprehension: identifying something that can be improved upon

- Problem: ‘What is a problem we have?’

- Observation Method: ‘How did we identify the problem?’

Response: the change that can cause improvement

- Variation: ‘What change do we think could solve the problem?’

- Location: ‘Where should the change occur?’

- Scope: ‘What are the conditions for the change?’

- Audience: ‘Who should the change affect?’

Outcome: the measurable result of the change that determines success

- Behavior Change: ‘What change in behavior are we trying to effect?’

- Primary KPI: ‘What is the important metric that determines business impact?’

- Secondary KPIs: ‘Other metrics that will help reinforce/refute the Primary KPI’

Something else to consider is that I have a ‘user first’ approach to formulating hypotheses. My process above is always considered within the context of how it would first benefit the user. Now, I do feel that a successful experiment should satisfy the needs of BOTH users and businesses, but always be in favor of the user. Notice that ‘Behavior Change’ is the first thing listed in Outcome, not the primary business KPI. Sure, at the end of the day you are working for the business’s best interests (both strategically and financially), but placing the user first will better inform your decision making and prioritization; there’s a reason that things like personas, user stories, surveys, session replays, reviews, etc. exist after all. A business-first ideology is how you end up with dark patterns and damaging brand credibility.”
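Matt’s fill-in-the-blank builder maps naturally onto a record type. The sketch below is a hypothetical illustration (the class and method names are invented here, not taken from Corvus CRO’s tool); it encodes his rule that a form with any blank field isn’t a good hypothesis yet.

```python
from dataclasses import dataclass, field, fields


@dataclass
class CroHypothesis:
    """Fields follow Matt Beischel's Comprehension / Response / Outcome breakdown."""
    # Comprehension: identifying something that can be improved upon
    problem: str = ""
    observation_method: str = ""
    # Response: the change that can cause improvement
    variation: str = ""
    location: str = ""
    scope: str = ""
    audience: str = ""
    # Outcome: the measurable result that determines success
    behavior_change: str = ""
    primary_kpi: str = ""
    secondary_kpis: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of fields left blank (an empty string or an empty list)."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_complete(self) -> bool:
        """Matt's rule: if any field is blank, it isn't a good hypothesis yet."""
        return not self.missing_fields()


# Hypothetical, partly filled-out form.
draft = CroHypothesis(
    problem="Visitors can't find the free offer",
    observation_method="Heatmaps and session recordings",
    variation="Move the offer above the fold",
    location="Opt-in landing page",
    primary_kpi="Opt-in conversion rate",
)
print(draft.is_complete())
print(draft.missing_fields())
```

Here `is_complete()` is `False` and `missing_fields()` lists scope, audience, behavior change, and secondary KPIs, which is exactly the kind of gap the form is meant to expose before a test is launched.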

One of the many mistakes that CROs make when writing a hypothesis is that they are focused on wins and not on insights. Shiva advises against this mindset:

Shiva Manjunath, Marketing Manager and CRO at Gartner:

“Test to learn, not test to win. It’s a very simple reframe of hypotheses but can have a magnitude of difference. Here’s an example:

Test to Win Hypothesis: If I put a product video in the middle of the product page, I will improve add to cart rates and improve CVR.

Test to Learn Hypothesis: If I put a product video on the product page, there will be high engagement with the video and it will positively influence traffic.

What you’re doing is framing your hypothesis, and test, in a particular way to learn as much as you can. That is where you gain marketing insights. The more you run ‘marketing insight’ tests, the more you will win. Why? The more you compound marketing insight learnings, your win velocity will start to increase as a proxy of the learnings you’ve achieved. Then, you’ll have a higher chance of winning in your tests, and the more you’ll be able to drive business results.”

Lorenzo says it’s okay to focus on achieving a certain result as long as you are also getting an answer to: “Why is this event happening or not happening?”

Lorenzo Carreri, CRO Consultant:

“When I come up with a hypothesis for a new or iterative experiment, I always try to find an answer to a question. It could be something related to a problem people have or an opportunity to achieve a result or a way to learn something. The main question I want to answer is ‘Why is this event happening or not happening?’ The question is driven by data, both qualitative and quantitative. The structure I use for stating my hypothesis is:

From [data source], I noticed [this problem/opportunity] among [this audience of users] on [this page or multiple pages]. So I believe that by [offering this experiment solution], [this KPI] will [increase/decrease/stay the same].”
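Because Lorenzo’s structure is a fill-in-the-blank template, it can be expressed as a small helper. This is a minimal sketch: the function name and the example inputs are hypothetical (loosely based on the diet-plan page discussed earlier), and the only validation is restricting the prediction to the three directions in his template.

```python
def lorenzo_hypothesis(data_source: str, observation: str, audience: str,
                       pages: str, solution: str, kpi: str, direction: str) -> str:
    """Fill Lorenzo Carreri's hypothesis structure with concrete values."""
    allowed = {"increase", "decrease", "stay the same"}
    if direction not in allowed:
        raise ValueError(f"direction must be one of {sorted(allowed)}")
    return (
        f"From {data_source}, I noticed {observation} among {audience} on {pages}. "
        f"So I believe that by {solution}, {kpi} will {direction}."
    )


# Hypothetical inputs for illustration only.
print(lorenzo_hypothesis(
    data_source="session recordings",
    observation="frequent scrolling to the bottom of the page",
    audience="first-time visitors",
    pages="the opt-in landing page",
    solution="moving the free offer above the fold",
    kpi="the opt-in conversion rate",
    direction="increase",
))
```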

Jakub Linowski says that hypotheses are meant to hold researchers accountable:

Jakub Linowski, Chief Editor of GoodUI:

“They do this by making your change and prediction more explicit. A typical hypothesis may be expressed as: If we change (X), then it will have some measurable effect (A). Unfortunately, this oversimplified format can also become a heavy burden to your experiment design with its extreme reductionism. However you decide to format your hypotheses, here are three suggestions for more flexibility to avoid limiting yourself.

One Or More Changes

To break out of the first limitation, we have to admit that our experiments may contain a single or multiple changes. Whereas the classic hypothesis encourages a single change or isolated variable, it’s not the only way we can run experiments. In the real world, it’s quite normal to see multiple design changes inside a single variation. One valid reason for doing this is when wishing to optimize a section of a website while aiming for a greater effect. As more positive changes compound together, there are times when teams decide to run bigger experiments. An experiment design (along with your hypotheses) therefore should allow for both single or multiple changes.

One Or More Metrics

A second limitation of many hypotheses is that they often ask us to only make a single prediction at a time. There are times when we might like to make multiple guesses or predictions to a set of metrics. A simple example of this might be a trade-off experiment with a guess of increased sales but decreased trial signups. Being able to express single or multiple metrics in our experimental designs should therefore be possible.

Estimates, Directional Predictions, Or Unknowns

Finally, traditional hypotheses also tend to force very simple directional predictions by asking us to guess whether something will increase or decrease. In reality, however, the fidelity of predictions can be higher or lower. On one hand, I’ve seen and made experiment estimations that contain specific numbers from prior data (ex: increase sales by 14%). While at other times it should also be acceptable to admit the unknown and leave the prediction blank. One example of this is when we are testing a completely novel idea without any prior data in a highly exploratory type of experiment. In such cases, it might be dishonest to make any sort of predictions and we should allow ourselves to express the unknown comfortably.”

Conclusion

So there you have it! Before you launch a test, make sure that your hypothesis is solid and backed by research. Ask yourself the questions below when crafting a hypothesis for marketing experimentation:

- Is the hypothesis backed by research?

- Can the hypothesis be tested?

- Does the hypothesis provide insights?

- Does the hypothesis set the expectation that there will be an explanation behind the results of whatever you’re testing?

Don’t worry! Hypothesizing may seem like a very complicated process, but it’s not complicated in practice, especially when you have done proper research.


© 2006-2020 All rights reserved. Invesp


Marketing Analytics Lab

Hypothesis Testing in Marketing Research

Introduction to Hypothesis Testing in Marketing Research

Hypothesis testing is a critical component of marketing research that allows marketers to draw conclusions about the effectiveness of their strategies. In essence, hypothesis testing involves making an educated guess about a population parameter and then using data to determine if the hypothesis is supported or rejected. In the context of marketing, hypotheses can be formulated about consumer behavior, product preferences, advertising effectiveness, and many other aspects of the marketing mix. By conducting hypothesis tests, marketers can make informed decisions based on empirical evidence rather than intuition or guesswork.

A hypothesis test in marketing research typically follows a structured process: defining a null hypothesis (H0) and an alternative hypothesis (HA), collecting and analyzing data, choosing the appropriate statistical test, setting a significance level, and interpreting the results to either reject or fail to reject the null hypothesis. The null hypothesis represents the status quo, the assumption that there is no difference or relationship between the variables, while the alternative hypothesis states that there is an effect or relationship. By rigorously testing hypotheses, marketers can evaluate the impact of their marketing strategies and make data-driven decisions to optimize their campaigns and initiatives.
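For the most common marketing case, comparing a control and a variant on conversion rate, the process can be sketched with a pooled two-proportion z-test. The visitor and conversion counts below are hypothetical, the 0.05 significance level is a common convention rather than a rule, and the normal approximation assumes reasonably large samples. The decision is phrased as “reject” or “fail to reject” H0, because a non-significant result doesn’t prove the null hypothesis.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test.

    H0: both variants have the same conversion rate.
    HA: the conversion rates differ (two-sided).
    Returns (z statistic, two-sided p-value).
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # common rate assumed under H0
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


alpha = 0.05  # significance level, chosen before looking at the data
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
decision = "reject H0" if p < alpha else "fail to reject H0"
print(f"z = {z:.2f}, p = {p:.4f}: {decision}")
```

With these made-up counts (4% vs. 5% conversion on 5,000 visitors each), the p-value falls below 0.05, so the sketch rejects H0.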

The results of hypothesis testing in marketing research provide valuable insights that can inform strategic decision-making and help marketers achieve their business objectives. Whether testing the effectiveness of a new product launch, evaluating the impact of a promotional campaign, or analyzing consumer preferences, hypothesis testing enables marketers to quantify the impact of their actions and make evidence-based recommendations. By employing statistical techniques and hypothesis testing in marketing research, organizations can gain a deeper understanding of consumer behavior, identify market trends, and refine their marketing strategies to drive business growth and success.

Key Steps and Considerations for Hypothesis Testing in Marketing Analysis

When conducting hypothesis testing in marketing research, there are several key steps and considerations that marketers should keep in mind to ensure the validity and reliability of their findings. Firstly, it is essential to clearly define the research question and formulate testable hypotheses that are specific, measurable, and relevant to the marketing objectives. By articulating clear hypotheses, marketers can establish a framework for data collection and analysis that aligns with the research objectives.

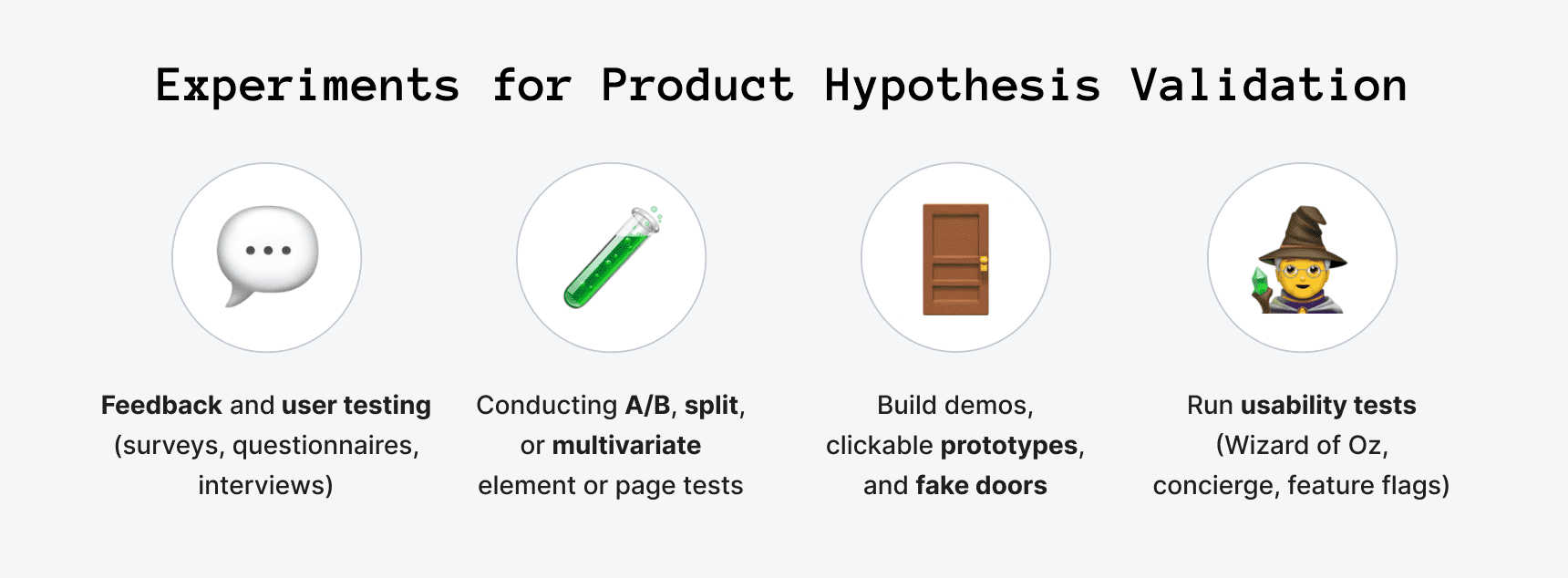

Once the hypotheses have been formulated, the next step is to determine the appropriate research design and methodology for data collection. Depending on the nature of the research question and the variables involved, marketers may choose to conduct experiments, surveys, observational studies, or other research methods to gather data. It is crucial to ensure that the data collected is representative of the target population and is collected in a systematic and unbiased manner to generate reliable results.

After collecting the data, marketers can perform statistical analysis to test the hypotheses using techniques such as t-tests, ANOVA, regression analysis, or chi-square tests, among others. It is important to select the appropriate statistical test based on the type of data and the research question being investigated. Additionally, setting a significance level (alpha) is crucial, because it is the threshold for deciding whether to reject the null hypothesis: if the p-value falls below alpha, the null hypothesis is rejected; otherwise, you fail to reject it. By interpreting the results in the context of the significance level, marketers can make informed decisions about the implications of the findings and their impact on marketing strategies.
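The right test depends on the data. For a continuous metric such as average order value, a t-test is the usual choice; the sketch below swaps in a permutation test instead, because it needs only the Python standard library and makes the underlying question explicit: how often would randomly reshuffled group labels produce a difference at least this large? The order values, seed, and number of resamples are invented for illustration.

```python
import random
import statistics


def permutation_test(a: list[float], b: list[float], n_resamples: int = 10_000,
                     seed: int = 0) -> tuple[float, float]:
    """Two-sided permutation test for a difference in means.

    H0: group labels don't matter (both groups come from the same distribution).
    Returns (observed mean difference b - a, estimated p-value).
    """
    rng = random.Random(seed)
    observed = statistics.fmean(b) - statistics.fmean(a)
    pooled = a + b  # new list, so shuffling never touches the inputs
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = statistics.fmean(pooled[len(a):]) - statistics.fmean(pooled[:len(a)])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add one to numerator and denominator so the estimate is never exactly zero.
    return observed, (extreme + 1) / (n_resamples + 1)


# Hypothetical order values (in dollars) from a control and a variant.
control = [52.0, 48.5, 61.0, 45.0, 58.5, 50.0, 47.5, 55.0]
variant = [60.0, 57.5, 66.0, 59.0, 63.5, 54.0, 62.0, 58.0]
diff, p = permutation_test(control, variant)
print(f"Mean difference: {diff:.2f}, estimated p-value: {p:.4f}")
```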



A Beginner’s Guide to Hypothesis Testing in Business

- 30 Mar 2021

Becoming a more data-driven decision-maker can bring several benefits to your organization, enabling you to identify new opportunities to pursue and threats to abate. Rather than allowing subjective thinking to guide your business strategy, backing your decisions with data can empower your company to become more innovative and, ultimately, profitable.

If you’re new to data-driven decision-making, you might be wondering how data translates into business strategy. The answer lies in generating a hypothesis and verifying or rejecting it based on what various forms of data tell you.

Below is a look at hypothesis testing and the role it plays in helping businesses become more data-driven.


What Is Hypothesis Testing?

To understand what hypothesis testing is, it’s important first to understand what a hypothesis is.

A hypothesis or hypothesis statement seeks to explain why something has happened, or what might happen, under certain conditions. It can also be used to understand how different variables relate to each other. Hypotheses are often written as if-then statements; for example, “If this happens, then this will happen.”

Hypothesis testing, then, is a statistical means of testing an assumption stated in a hypothesis. While the specific methodology leveraged depends on the nature of the hypothesis and data available, hypothesis testing typically uses sample data to extrapolate insights about a larger population.

Hypothesis Testing in Business

When it comes to data-driven decision-making, there’s always some risk of being misled. This could be due to flawed thinking or observations, incomplete or inaccurate data, or the presence of unknown variables. The danger is that if major strategic decisions are made based on flawed insights, they can lead to wasted resources, missed opportunities, and catastrophic outcomes.

The real value of hypothesis testing in business is that it allows professionals to test their theories and assumptions before putting them into action. This essentially allows an organization to verify its analysis is correct before committing resources to implement a broader strategy.

As one example, consider a company that wishes to launch a new marketing campaign to revitalize sales during a slow period. Doing so could be an incredibly expensive endeavor, depending on the campaign’s size and complexity. The company, therefore, may wish to test the campaign on a smaller scale to understand how it will perform.

In this example, the hypothesis that’s being tested would fall along the lines of: “If the company launches a new marketing campaign, then it will translate into an increase in sales.” It may even be possible to quantify how much of a lift in sales the company expects to see from the effort. Pending the results of the pilot campaign, the business would then know whether it makes sense to roll it out more broadly.


Key Considerations for Hypothesis Testing

1. Alternative Hypothesis and Null Hypothesis

In hypothesis testing, the hypothesis you hope to find evidence for is known as the alternative hypothesis. Often, it’s expressed as a correlation or statistical relationship between variables. The null hypothesis, on the other hand, states that there’s no statistical relationship between the variables being tested. It’s typically the exact opposite of whatever is stated in the alternative hypothesis.

For example, consider a company’s leadership team that historically and reliably sees $12 million in monthly revenue. They want to understand if reducing the price of their services will attract more customers and, in turn, increase revenue.

In this case, the alternative hypothesis may take the form of a statement such as: “If we reduce the price of our flagship service by five percent, then we’ll see an increase in sales and realize revenues greater than $12 million in the next month.”

The null hypothesis, on the other hand, would indicate that revenues wouldn’t increase from the base of $12 million, or might even decrease.


2. Significance Level and P-Value

Statistically speaking, if you were to run the same scenario 100 times, you’d likely receive somewhat different results each time. If you were to plot these results in a distribution plot, you’d see the most likely outcome is at the tallest point in the graph, with less likely outcomes falling to the right and left of that point.

With this in mind, imagine you’ve completed your hypothesis test and have your results, which indicate there may be a correlation between the variables you were testing. To judge whether those results are significant, you’ll need to compute a p-value for the test, which indicates how surprising your results would be if there were no real relationship between the variables.

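In statistics, the p-value is the probability that, assuming the null hypothesis is correct, you would still observe results at least as extreme as the results of your hypothesis test. The smaller the p-value, the stronger the evidence against the null hypothesis, and the more statistically significant your results. Before running the test, you choose a significance level (0.05 is a common convention); if the p-value falls below it, you reject the null hypothesis and treat the result as statistically significant.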
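The definition above can be computed almost literally with a permutation test, which is one way (chosen here for illustration; the article doesn’t name a specific test) to get a p-value. The sketch assumes hypothetical A/B data: 20 of 200 control visitors and 34 of 200 variant visitors converted. If the null hypothesis is true, the “control” and “variant” labels are interchangeable, so shuffling them shows how large a difference chance alone tends to produce. The result is compared with a significance level of 0.05, a common convention.

```python
import random


def permutation_p_value(control: list[int], variant: list[int],
                        n_shuffles: int = 5000, seed: int = 0) -> float:
    """One-sided permutation-test p-value for 'the variant converts better'.

    Each list holds 1 (visitor converted) or 0 (did not convert).
    """
    rng = random.Random(seed)
    observed = sum(variant) / len(variant) - sum(control) / len(control)
    pooled = control + variant  # new list, so the inputs are not modified
    n_variant, n_control = len(variant), len(control)
    at_least_as_extreme = 0
    for _ in range(n_shuffles):
        # Under the null hypothesis the group labels don't matter:
        # reshuffle them and recompute the difference in conversion rates.
        rng.shuffle(pooled)
        diff = sum(pooled[:n_variant]) / n_variant - sum(pooled[n_variant:]) / n_control
        if diff >= observed:
            at_least_as_extreme += 1
    # Share of shuffles at least as extreme as what we actually observed.
    return at_least_as_extreme / n_shuffles


ALPHA = 0.05  # significance level, chosen before looking at the data

# Hypothetical data, for illustration only.
control = [1] * 20 + [0] * 180
variant = [1] * 34 + [0] * 166

p = permutation_p_value(control, variant)
verdict = "significant" if p < ALPHA else "not significant"
print(f"p = {p:.3f} -> {verdict} at alpha = {ALPHA}")
```

The shuffle-based approach trades speed for transparency: it makes no distributional assumptions, and the code mirrors the wording “assuming the null hypothesis is correct, results at least as extreme.”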

3. One-Sided vs. Two-Sided Testing

When it’s time to test your hypothesis, it’s important to use the correct testing method. The two most common hypothesis testing methods are one-sided and two-sided tests, also called one-tailed and two-tailed tests.

Typically, you’d leverage a one-sided test when you have a strong conviction about the direction of change you expect to see due to your hypothesis test. You’d leverage a two-sided test when you’re less confident in the direction of change.
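To see why the choice matters, the sketch below computes both p-values from the same test statistic. It assumes a z-test, so the statistic follows a standard normal distribution under the null hypothesis; the z value of 1.8 is a made-up example. Python’s standard-library `statistics.NormalDist` supplies the normal CDF.

```python
from statistics import NormalDist


def p_values(z: float) -> tuple[float, float]:
    """Return (one-sided, two-sided) p-values for a z statistic.

    The one-sided p-value only counts extreme results in the 'greater than'
    direction; the two-sided p-value counts extreme results in either direction.
    """
    standard_normal = NormalDist()  # mean 0, standard deviation 1
    one_sided = 1 - standard_normal.cdf(z)
    two_sided = 2 * (1 - standard_normal.cdf(abs(z)))
    return one_sided, two_sided


one, two = p_values(1.8)
print(f"one-sided p = {one:.3f}, two-sided p = {two:.3f}")
```

For z = 1.8 the one-sided p-value is about 0.036 and the two-sided p-value about 0.072, so the same data clear a 0.05 significance level with a one-sided test but not with a two-sided one. That is why the direction should be decided before the data come in.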

4. Sampling

To perform hypothesis testing in the first place, you need to collect a sample of data to be analyzed. Depending on the question you’re seeking to answer or investigate, you might collect samples through surveys, observational studies, or experiments.

A survey involves asking a series of questions to a random population sample and recording self-reported responses.
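Drawing a random sample is the step that makes survey results generalizable. A minimal sketch, assuming a hypothetical list of customer IDs as the population, uses the standard library’s `random.Random.sample`, which draws without replacement so nobody is surveyed twice:

```python
import random


def draw_survey_sample(population: list[str], k: int, seed: int = 1) -> list[str]:
    """Simple random sample of k respondents, drawn without replacement."""
    if k > len(population):
        raise ValueError("sample size exceeds population size")
    # Every member of the population has the same chance of being chosen.
    return random.Random(seed).sample(population, k)


customers = [f"customer_{i}" for i in range(1000)]  # hypothetical population
respondents = draw_survey_sample(customers, 50)
print(respondents[:5])
```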

Observational studies involve a researcher observing a sample population and collecting data as it occurs naturally, without intervention.

Finally, an experiment involves dividing a sample into multiple groups, one of which acts as the control group. For each non-control group, the variable being studied is manipulated to determine how the data collected differs from that of the control group.
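The group-splitting step of an experiment can be sketched as follows. This assumes hypothetical user IDs and a fixed seed; users are shuffled and then dealt round-robin into one control group and one or more treatment groups, so assignment is random and group sizes differ by at most one.

```python
import random


def assign_groups(user_ids: list[str], n_groups: int = 2, seed: int = 7) -> dict[str, list[str]]:
    """Randomly split users into a control group and n_groups - 1 treatment groups."""
    rng = random.Random(seed)
    shuffled = user_ids[:]  # copy so the caller's list is untouched
    rng.shuffle(shuffled)
    names = ["control"] + [f"treatment_{i}" for i in range(1, n_groups)]
    groups: dict[str, list[str]] = {name: [] for name in names}
    for i, user in enumerate(shuffled):
        # Deal users round-robin so group sizes differ by at most one.
        groups[names[i % n_groups]].append(user)
    return groups


users = [f"user_{i}" for i in range(10)]  # hypothetical users
for name, members in assign_groups(users, n_groups=3).items():
    print(name, members)
```

Randomizing before dealing is the important design choice: it keeps any pre-existing differences between users from lining up with a particular group.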

Learn How to Perform Hypothesis Testing

Hypothesis testing is a complex process with many moving pieces, but it can allow an organization to leverage its data effectively and inform strategic decisions.

If you’re interested in better understanding hypothesis testing and the role it can play within your organization, one option is to complete a course that focuses on the process. Doing so can lay the statistical and analytical foundation you need to succeed.

Do you want to learn more about hypothesis testing? Explore Business Analytics, one of our online business essentials courses, and download our Beginner’s Guide to Data & Analytics.


Designing Hypotheses that Win: A four-step framework for gaining customer wisdom and generating marketing results

There are smart marketers everywhere testing many smart ideas (and bad ones). The problem with ideas is that they are unreliable and unpredictable. Knowing how to test is only half of the equation. As marketing tools and technology evolve rapidly, offering new, more powerful ways to measure consumer behavior and conduct more sophisticated testing, it is becoming more important than ever to have a reliable system for deciding what to test.

Without a guiding framework, we are left to draw ideas almost arbitrarily from competitors, brainstorms, colleagues, books and any other source without truly understanding what makes them good, bad or successful. Ideas are unpredictable because, until you can articulate a forceful “because” statement explaining why they will work, even good ideas are nothing more than guesses: educated guesses, perhaps, but rarely educated by the customer.

More than 20 years of in-depth research, testing and optimization, along with more than 20,000 sales path experiments, have taught us that there is an answer to this problem, and that answer involves rethinking how we view testing and optimization. This short article touches on the keynote message MECLABS Institute’s founder Flint McGlaughlin will give at the upcoming 2018 A/B Testing Summit virtual conference on December 12-13. Registration is free.

Marketers don’t need better ideas; they need a better understanding of their customer.

So if understanding your customer is the key to efficient and effective optimization, and ideas aren’t reliable or predictable, what then? We begin by intensively analyzing existing data, metrics, reports and research to construct our best Customer Theory, which is the articulation of our understanding of our customer and their behavior toward our offer.

Then, as we identify problems/focus areas for higher performance in our funnel, we transform our ideas for solving them into a hypothesis containing four key parts:

- If [we achieve this in the mind of the consumer]

- By [adding, subtracting or changing these elements]

- Then [this result will occur]

- Because [that will confirm or deny this belief/hypothesis about the customer]
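Teams that log many tests sometimes keep hypotheses in a structured form so that no part gets skipped. A minimal sketch of that idea, assuming a hypothetical `Hypothesis` record (not a MECLABS tool), stores the four parts and refuses to build a hypothesis with any part left blank. The usage example reuses the imagery hypothesis from the examples in this article.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """Four-part hypothesis: If / By / Then / Because."""
    if_: str      # what we want to achieve in the mind of the customer
    by: str       # the elements we add, subtract or change
    then: str     # the result we expect to occur
    because: str  # the belief about the customer the test confirms or denies

    def __post_init__(self) -> None:
        # A missing "because" turns the hypothesis back into a mere idea.
        for name in ("if_", "by", "then", "because"):
            if not getattr(self, name).strip():
                raise ValueError(f"hypothesis part '{name.rstrip('_')}' is empty")

    def render(self) -> str:
        return (f"If {self.if_}, by {self.by}, then {self.then}, "
                f"because {self.because}.")


h = Hypothesis(
    if_="we reinforce the clarity of the value proposition",
    by="using more relevant imagery to draw attention to the most important information",
    then="we will increase clickthrough and ultimately conversion",
    because="the customer wants to quickly understand why we're different",
)
print(h.render())
```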

By transforming ideas into hypotheses, we orient our test to learn about our customer rather than merely trying out an idea. The hypothesis grounds our thinking in the psychology of the customer by providing a framework that forces the right questions into the equation of what to test. “The goal of a test is not to get a lift, but to get a learning,” says Flint McGlaughlin, “and learning compounds over time.”

Let’s look at three examples of ideas to avoid in your testing, followed by each of those ideas rewritten as a good hypothesis.

“Let’s advertise our top products in our rotating banner — that’s what Competitor X is doing.”

“We need more attractive imagery … Let’s place a big, powerful hero image as our banner. Everyone is doing it.”

“We should go minimalist … It’s modern, sleek and sexy, and customers love it. It’ll be good for our brand. Less is more.”

“If we emphasize and sample the diversity of our product line by grouping our top products from various categories in a slowly rotating banner, we will increase clickthrough and engagement from the homepage because customers want to understand the range of what we have to offer (versus some other value, e.g., quality, style, efficacy, affordability, etc.).”

“If we reinforce the clarity of the value proposition by using more relevant imagery to draw attention to the most important information, we will increase clickthrough and ultimately conversion because the customer wants to quickly understand why we’re different in such a competitive space.”

“If we better emphasize the primary message by reducing unnecessary, less-relevant page elements and changing to a simpler, clearer, more readable design, we will increase clickthrough and engagement on the homepage because customers are currently overwhelmed by too much friction on this page.”

The golden rule of optimization is “Specificity converts.” The more specific and relevant you can be to the individual wants and needs of your ideal customer, the higher the probability of conversion. To be as specific and relevant as possible to a consumer, we use testing not merely as an idea-trial hoping for positive results, but as a mechanism to fill in the gaps in our understanding that existing data can’t answer. Our understanding of the customer is what powers the efficiency and efficacy of our testing.

In Summary …

Smart ideas only work sometimes, but a framework based on understanding your customer will yield more consistent, more rewarding results that only improve over time. The first key to rethinking your approach to optimization is to construct a robust customer theory articulating your best understanding of your customer. From this, you can transform your ideas into hypotheses that will begin producing invaluable insights to lay the groundwork for how you communicate with your customer.

Looking for ideas to inform your hypotheses? We have created and compiled a 60-page guide that contains 21 crafted tools and concepts, and outlines the unique methodology we have used and tested with our partners for 20+ years. You can download the guide for free here: A Model of Your Customer’s Mind


Quin McGlaughlin is currently a Senior Optimization Analyst at MECLABS Institute and full-time distance student at Harvard University, studying psychology and business. He has worked on projects for some of MECLABS’ largest clients ranging from Fortune 50 companies to defense contractors, not-for-profits, major ecommerce organizations and others. He has also served as Vice Chair of Digital Strategy for the Harvard Extension Student Association and provided marketing consulting for small and mid-size businesses.



What is a Research Hypothesis: How to Write it, Types, and Examples

Any research begins with a research question and a research hypothesis. A research question alone may not suffice to design the experiment(s) needed to answer it. A hypothesis is central to the scientific method. But what is a hypothesis? A hypothesis is a testable statement that proposes a possible explanation for a phenomenon, and it may include a prediction. Next, you may ask: what is a research hypothesis? Simply put, a research hypothesis is a prediction or educated guess about the relationship between the variables that you want to investigate.

It is important to be thorough when developing your research hypothesis. Shortcomings in the framing of a hypothesis can affect the study design and the results. A better understanding of the definition of a research hypothesis and the characteristics of a good hypothesis will make it easier for you to develop your own. Let’s dive in to learn more about the types of research hypotheses, how to write a research hypothesis, and some research hypothesis examples.


What is a hypothesis?

A hypothesis is based on the existing body of knowledge in a study area. Framed before the data are collected, a hypothesis states the tentative relationship between independent and dependent variables, along with a prediction of the outcome.

What is a research hypothesis?

Young researchers starting out on their journey are usually brimming with questions like “What is a hypothesis?”, “What is a research hypothesis?” and “How can I write a good research hypothesis?”

A research hypothesis is a statement that proposes a possible explanation for an observable phenomenon or pattern. It guides the direction of a study and predicts the outcome of the investigation. A research hypothesis is testable, i.e., it can be supported or disproven through experimentation or observation.

Characteristics of a good hypothesis